The promise of seamless artificial intelligence often shatters against the cold reality of server racks and the complex networking code required to keep a single digital assistant from crashing under its own weight. While the intelligence of large language models has reached a point where they can simulate professional reasoning, the actual labor of deploying them remains a logistical nightmare for enterprise IT departments. Organizations find themselves trapped in a cycle of building and rebuilding the plumbing—the secure sandboxes and state management systems—rather than actually solving business problems. Anthropic’s introduction of Claude Managed Agents marks a definitive pivot toward invisible infrastructure, where the focus shifts from the mechanics of execution back to the quality of the work being performed.

The End of the Infrastructure Marathon for Enterprise AI

The traditional journey toward deploying an autonomous agent has long felt like a marathon where the finish line keeps receding. Developers typically spend months constructing the underlying architecture needed to handle persistent sessions and secure tool use before a single task can be completed. This manual labor involves managing complex coordination layers that ensure an agent can remember past interactions without compromising security or data integrity. By automating these foundational layers, the new managed agent framework allows enterprises to bypass the “plumbing” phase entirely.

This evolution signifies more than just a convenience; it represents a fundamental change in how we perceive the role of a model provider. Instead of merely handing over an API key and leaving the implementation to the client, the provider now offers a fully realized execution environment. This shift enables teams to deploy production-grade agents in days rather than quarters. When the infrastructure becomes a background service, the internal talent of a company is finally freed to focus on refining the behavioral logic and safety guardrails that define a successful digital worker.

Why the Execution Bottleneck Is the New GPU Shortage

During the early stages of the AI boom, the primary concern was whether a company could secure enough raw compute power to run a specific model. Today, a subtler and more damaging bottleneck has appeared: the difficulty of operational execution at scale within the data center. As workloads move away from simple, one-off prompts and toward long-running, autonomous workflows, the strain on the infrastructure stack grows sharply. Managing the persistent state of thousands of concurrent agents requires a level of networking and compute orchestration that few companies can handle without incurring massive overhead.

The challenge lies in the transition from transient inference to sustained “digital workers” that act as permanent members of a team. These agents must maintain context over hours or days, coordinating between various tools and datasets without human supervision. This operational complexity has become the new scarcity, limiting the ability of firms to scale their AI initiatives. Without a managed environment to handle this burden, the data center becomes a graveyard for pilot programs that were too resource-intensive to ever reach full-scale production.

Moving Beyond Raw Models to Managed Orchestration

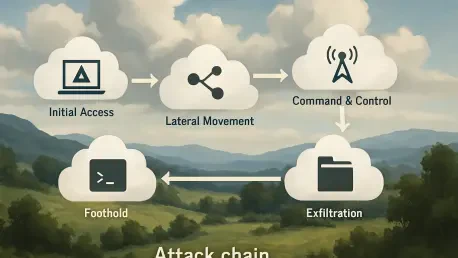

The transition from providing raw API access to offering a fully managed orchestration layer fundamentally alters the AI application stack. By centralizing critical functions like error recovery, authentication, and tool execution, a model provider can insert its platform directly into the heart of the business workflow. This allows for the creation of agents capable of executing multi-step workflows, such as processing insurance claims or managing complex software development cycles. Early adopters like Atlassian and Asana are already using the service to offload the heavy lifting of agent lifecycle management, effectively turning the AI into a reliable utility.

This shift toward managed environments allows for high-concurrency sessions that act less like a search engine and more like a functional department. Rather than building a unique sandbox for every agent, companies can lean on a standardized execution layer that ensures consistency across different use cases. Such a setup facilitates a “plug-and-play” approach to agentic workflows, where the underlying complexity of state persistence is handled by the platform. Consequently, the focus of the enterprise moves from “how do we run this?” to “what can this accomplish?”

Expert Perspectives on the Risks of Abstraction

Despite the clear efficiency gains, industry experts remain wary of the potential downsides that come with hiding such extreme complexity. Jason Andersen, an analyst at Moor Insights & Strategy, notes that as AI agents evolve into digital workers embedded in SaaS platforms, the industry must grapple with new standards for billing and compliance. When a service manages the execution, resource usage can become opaque, creating challenges for procurement teams. Furthermore, over-reliance on a single provider's managed stack could limit an organization's flexibility in the future.

Holger Mueller of Constellation Research highlights that while abstraction speeds up the development cycle, it often results in significant vendor lock-in. Chief Information Officers must carefully evaluate whether the immediate speed of deployment is worth the potential loss of portability for their multi-agent flows. If a company builds its entire automation strategy on a proprietary managed infrastructure, moving those workflows to a competitor like AWS Bedrock or an on-premise solution becomes a Herculean task. Therefore, the strategic choice involves balancing the need for rapid innovation against the long-term sovereignty of the company’s digital assets.

Strategies for Transitioning to an Agentic Infrastructure

To navigate this shift successfully, enterprises should adopt a framework that prioritizes business outcomes over technical scaffolding. The first step is to identify specific high-value workflows that require significant persistence and multi-tool coordination, such as automated customer support or intricate data analysis. Isolating these use cases shows organizations exactly where a managed platform can provide the most relief from operational noise. The next priority is defining clear task parameters, which allows the managed system to handle the heavy lifting of sandboxing and state management without constant human oversight.

The transition also requires a shift in mindset, in which internal IT teams move away from maintaining infrastructure and toward becoming "agent architects." This involves refining the behavioral prompts and safety boundaries of the agents to ensure they operate within corporate compliance standards. Companies that embrace this model can bypass traditional deployment bottlenecks and move directly to production-grade implementation. Ultimately, focusing on refining agent behavior rather than managing server logs positions these organizations to extract tangible value from their AI investments faster than their competitors.