Matilda Bailey stands at the forefront of the modern digital infrastructure revolution, bringing a wealth of experience in networking and next-generation data center solutions. As a specialist who has witnessed the transition from simple cloud storage to the intense demands of the AI era, she provides a unique perspective on the physical and financial hurdles facing the industry today. In this discussion, we explore the stark reality that the vast majority of current facilities are ill-equipped for the future, the shifting landscape of infrastructure financing, and the critical execution gaps that prevent AI projects from reaching their full economic potential.

How should operators approach the transition from traditional air cooling to liquid-cooled environments while maintaining uptime for existing legacy systems, especially since less than 10% of current US data center inventory is capable of handling high-density AI loads?

The reality on the ground is startling: less than 10% of the US inventory is truly ready for the critical loads AI demands. For an operator, transitioning a legacy facility is like performing open-heart surgery while the patient is running a marathon; you have to integrate liquid cooling loops and high-density power distribution without tripping the breakers on the traditional air-cooled racks next door. This requires a surgical approach to physical modifications, often involving reinforcing floors to bear the weight of heavier liquid-cooling manifolds and installing specialized heat exchangers. We see intense activity around these power-dense environments because that is where the monetization is finally happening, but the physical constraints of older buildings, like ceiling heights and ductwork, often act as a hard limit on what can be achieved. You can sense the change on the data hall floor, where the hum of traditional fans is giving way to the quiet, heavy flow of coolant in a race to keep up with GPU demands.

Lenders are increasingly tightening terms on infrastructure deployments, often favoring short-term bridge loans over permanent capital. How does this financial shift impact “time-to-revenue” strategies, and what steps can operators take to better synchronize power procurement with construction to prevent assets from sitting idle?

The financial friction we are seeing right now is a direct response to uncertain long-term demand visibility, which is leading lenders to scrutinize utilization assumptions like never before. This shift toward short-term, high-yield bridge loans puts immense pressure on the “time-to-revenue” clock, because every day a facility stands empty is a day that expensive capital is being burned. To combat this, operators have to become master orchestrators, synchronizing the arrival of multimillion-dollar GPU clusters with the exact moment the local utility flips the switch on a new power substation. If these timelines slip by even a few weeks, the consequences are dire: you have idle assets that aren’t generating the revenue needed to refinance that high-cost debt into something more permanent. It is a high-stakes game of logistics where the supply chain, construction crews, and power companies must all move in a perfectly timed dance to ensure the facility is production-ready the moment the hardware is bolted in.
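To make the cost of a slipped timeline concrete, here is a minimal sketch of the carrying-cost arithmetic. It assumes simple, non-compounding interest, and the loan size, rate, and delay are hypothetical figures chosen for illustration; none of them come from the interview.

```python
def idle_carry_cost(principal: float, annual_rate: float, idle_days: int) -> float:
    """Simple (non-compounding) interest accrued while the facility earns nothing."""
    return principal * annual_rate * idle_days / 365


# Hypothetical figures: a $200M bridge loan at 12% a year,
# with the substation energized 21 days after the hardware is installed.
cost = idle_carry_cost(200_000_000, 0.12, 21)
print(f"Interest accrued while idle: ${cost:,.0f}")
```

Under these assumed numbers, a three-week slip costs well over a million dollars in interest alone, before counting any lost revenue.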

Only about 22% of AI initiatives currently meet their original ROI goals once they reach production. Beyond simple hardware access, what specific lifecycle controls and automated health checks are necessary to bridge this execution gap and provide leadership with more predictable economic outcomes?

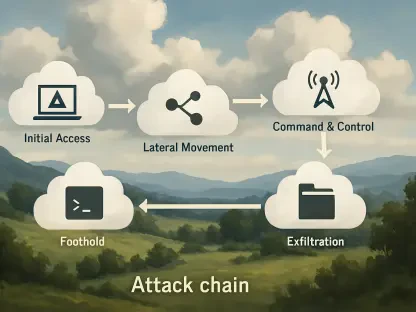

That figure is a sobering reminder that simply throwing GPUs at a problem doesn’t guarantee a business victory; there is a persistent execution gap that consumes many projects. To bridge this, enterprises need to move beyond the “experimental” mindset and implement robust lifecycle controls that treat AI infrastructure as a living, breathing organism. This means deploying automated health checks that can detect a failing node before it crashes a massive training job, as well as granular observability tools that track how every dollar of compute is actually contributing to the bottom line. Leadership teams are no longer satisfied with vague promises of “innovation”; they want economics they can actually explain to a board of directors, with predictable scaling costs and dependable system stability. Without these automated guardrails, the complexity of production AI often overwhelms the original budget, leaving the project stranded in a sea of red ink.
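As a rough illustration of what such an automated health check might look like, the sketch below flags nodes for draining before a job is scheduled onto them. The telemetry fields, node names, and thresholds are all hypothetical; a real deployment would pull readings from its own monitoring stack and tune the limits to its hardware.

```python
from dataclasses import dataclass


@dataclass
class NodeReading:
    node_id: str
    gpu_temp_c: float          # hypothetical telemetry field
    ecc_errors_last_hour: int  # hypothetical telemetry field


def unhealthy_nodes(readings, max_temp_c=85.0, max_ecc_errors=0):
    """Return IDs of nodes that breach either illustrative threshold."""
    return [
        r.node_id
        for r in readings
        if r.gpu_temp_c > max_temp_c or r.ecc_errors_last_hour > max_ecc_errors
    ]


readings = [
    NodeReading("node-01", 71.0, 0),
    NodeReading("node-02", 91.5, 0),  # running hot
    NodeReading("node-03", 68.0, 4),  # accumulating memory errors
]
to_drain = unhealthy_nodes(readings)
print(to_drain)
```

The point of the design is timing: the check runs before work is placed, so a suspect node is pulled from the pool instead of failing partway through a long training run.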

Evaluation criteria for infrastructure are shifting from basic capacity and price toward stable performance under sustained demand. How can organizations better implement observability across different system layers, and what specific metrics best indicate that a platform is truly ready for business-critical scaling?

The conversation has matured rapidly over the last twelve months, moving from a desperate scramble for any available capacity to a sophisticated evaluation of how systems behave under the crushing weight of real-world demand. Organizations are now looking for “production readiness,” which means they need to see deep observability across the entire stack—from the thermal performance of the liquid cooling units down to the latency between individual GPU nodes. The best indicators of a platform’s maturity are no longer just price per kilowatt, but rather the stability of throughput during sustained workloads and the ability of the infrastructure to self-heal without manual intervention. Speed of innovation has become the primary metric, but that speed is only possible if the underlying platform doesn’t fall apart the moment you move from a controlled experiment to a live, business-critical environment. It’s about having a system that behaves predictably even when it’s being pushed to its absolute physical and digital limits.
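One simple way to put a number on “stability of throughput during sustained workloads” is the coefficient of variation, the standard deviation divided by the mean, computed over samples taken during a long run. The sketch below is one possible metric under that assumption, not a method named in the interview, and the sample values are invented.

```python
from statistics import mean, pstdev


def throughput_cv(samples):
    """Coefficient of variation: standard deviation divided by mean (lower is steadier)."""
    avg = mean(samples)
    if avg == 0:
        raise ValueError("mean throughput is zero; CV is undefined")
    return pstdev(samples) / avg


# Invented samples, e.g. training samples per second measured hourly.
steady = [980, 1005, 995, 1010, 1010]
degrading = [1000, 990, 850, 700, 640]

print(f"steady:    {throughput_cv(steady):.3f}")
print(f"degrading: {throughput_cv(degrading):.3f}")
```

A platform whose value stays low over hours or days of load is behaving predictably; a rising value suggests throttling, contention, or failing components worth investigating.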

Many enterprise leadership teams face significant trepidation due to limited infrastructure availability and intense competition for capacity. What should be the primary strategic priorities when choosing between retrofitting an existing data center or committing to a new, AI-optimized build?

There is a palpable sense of trepidation in the office of the CIO right now because they are caught between the scarcity of high-density space and the aggressive land-grab by hyperscalers. When choosing between a retrofit and a new build, the primary priority must be the long-term viability of the power density: a retrofit might get you online faster, but if it can’t support the next generation of liquid-cooled racks, you’ll be back at square one in twenty-four months. New builds are often the safer bet for pure-play GPU deployments because they are optimized for ultra-high density from the ground up, avoiding the structural and thermal compromises of older facilities. However, given the intense competition for capacity, many are forced to secure whatever space they can find, making the strategic choice more about “where can I get power?” than “which building is better?” Ultimately, the decision must be tied to execution risk; if you can’t guarantee production stability in a retrofitted site, the “savings” of using existing space will evaporate the first time the system overheats.

What is your forecast for the AI data center market?

The market is entering a phase of extreme bifurcation where we will see a massive divide between legacy facilities and a new elite class of AI-optimized infrastructure. We should expect to see more mega-deals, similar to the $21 billion agreement between Meta and CoreWeave, as the industry’s biggest players move to lock up every available megawatt of high-density capacity for the next decade. As the “execution gap” narrows and more companies figure out how to hit their ROI targets, the demand for liquid-cooled, production-ready space will far outstrip the supply that can be retrofitted. Consequently, the definition of a viable data center will be rewritten—it won’t just be about square footage or cooling anymore, but about the seamless integration of finance, power, and automated operations. The winners will be those who can navigate the tightening credit markets to build facilities that don’t just house servers, but act as high-performance engines for the next generation of business intelligence.