The Evolution of Infrastructure in the Age of Artificial Intelligence

The global landscape for digital architecture is undergoing a seismic transformation as the specialized requirements of artificial intelligence demand a more surgical approach to physical infrastructure than ever before. For decades, the industry operated under a universal mandate: every bit of data was treated with the same urgency, leading to massive, overbuilt facilities that prioritized absolute redundancy above all else. This “one-size-fits-all” philosophy was suitable for a world centered on static web pages and simple databases, but it has become an obstacle in the current high-compute environment. Today, the rapid ascent of generative models is forcing developers to rethink the fundamental blueprint of the data center, moving away from rigid designs toward a concept known as precision resilience.

This shift is not merely a technical adjustment; it represents a complete re-evaluation of how capital and energy are deployed. As of 2026, the primary goal for operators is to align the physical robustness of a building with the specific needs of the software it houses. By recognizing that a temporary pause in a model training run is far less critical than a failure in a real-time medical diagnostic tool, the industry can optimize resource allocation. This article explores how this move toward intentionality allows for faster deployment cycles and significantly better energy efficiency, helping the infrastructure of the future keep pace with the speed of algorithmic innovation.

From Aviation Standards to the Transportation Network Model

In the early days of the digital revolution, data center engineering was governed by a philosophy similar to commercial aviation, where safety and redundancy were the only metrics that mattered. Just as an aircraft is equipped with multiple backup systems to ensure it remains airborne during a component failure, legacy data centers were designed with extensive layers of uninterruptible power supplies, backup generators, and duplicated cooling circuits. This “five-nines” approach—targeting 99.999% availability—was essential when facilities primarily supported mission-critical services like global banking or emergency response systems. In those contexts, even a momentary outage could trigger cascading financial or social consequences, making the high cost of overengineering a necessary insurance policy.
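
To make the availability target concrete, the short Python sketch below converts an availability percentage into a yearly downtime budget. The function name and the use of an average 365.25-day year are illustrative assumptions, not an industry-standard formula.

```python
# Assumption: an average year of 365.25 days is used for the conversion.
MINUTES_PER_YEAR = 365.25 * 24 * 60


def allowed_downtime_minutes(availability_percent: float) -> float:
    """Return the yearly downtime budget, in minutes, for an availability target."""
    if not 0 <= availability_percent <= 100:
        raise ValueError("availability must be between 0 and 100 percent")
    return MINUTES_PER_YEAR * (1 - availability_percent / 100)


# Compare three-, four-, and five-nines targets.
for target in (99.9, 99.99, 99.999):
    minutes = allowed_downtime_minutes(target)
    print(f"{target}% availability -> {minutes:.2f} minutes of downtime per year")
```

Under these assumptions, a five-nines target leaves a budget of a little over five minutes per year, which helps explain why legacy designs layered so much backup equipment.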

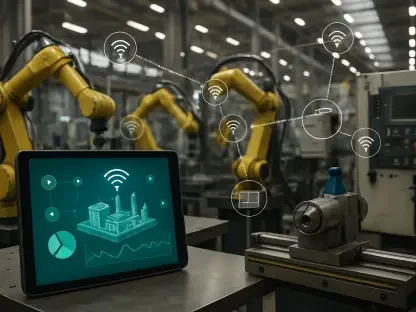

However, the industry is now transitioning away from this aviation metaphor toward a more nuanced “transportation network” model. In a modern logistics network, different goods move via different modes based on their urgency and value; a cargo ship carrying raw materials does not require the same speed or protection as a courier transporting life-saving medicine. Similarly, data center operators are beginning to recognize that different workloads have varying degrees of sensitivity to downtime. This realization has opened the door for a more diverse ecosystem of facilities, ranging from high-density training hubs to hyper-resilient edge nodes, allowing for a more logical distribution of hardware and power resources across the global network.

Precision Resilience and the New Design Philosophy

The Divergence of AI Training and Inference Workloads

A fundamental driver of this architectural shift is the clear distinction between training and inference tasks. AI training is a massive, energy-intensive batch process that involves pushing enormous datasets through models with billions or even trillions of parameters, running on large GPU clusters over weeks or months. Because these processes utilize “checkpointing” (a method where the model’s progress is saved at regular intervals), the cost of a rare power interruption is manageable. If a training cluster goes offline for a brief period, work can often be resumed from the last saved state, so only the progress made since that checkpoint is lost rather than the entire project. This tolerance for minor disruptions allows developers to prioritize energy-optimized designs that maximize compute power per square foot rather than spending billions on redundant electrical gear that may never be utilized.
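
The checkpoint-and-resume idea can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not how any particular training framework does it: the functions `save_checkpoint`, `load_checkpoint`, and `train`, the JSON file format, and the `fail_at` parameter that simulates a power loss are all hypothetical.

```python
import json
import os
import tempfile


def save_checkpoint(path, state):
    # Write to a temporary file and rename it, so an interruption during
    # the write cannot corrupt the last good checkpoint.
    tmp_path = path + ".tmp"
    with open(tmp_path, "w") as f:
        json.dump(state, f)
    os.replace(tmp_path, path)


def load_checkpoint(path):
    # Resume from the saved state if one exists; otherwise start fresh.
    if os.path.exists(path):
        with open(path) as f:
            return json.load(f)
    return {"step": 0, "loss": None}


def train(path, total_steps, checkpoint_every, fail_at=None):
    state = load_checkpoint(path)
    for step in range(state["step"] + 1, total_steps + 1):
        if step == fail_at:
            raise RuntimeError(f"simulated power loss at step {step}")
        state = {"step": step, "loss": 1.0 / step}  # stand-in for a real update
        if step % checkpoint_every == 0:
            save_checkpoint(path, state)
    save_checkpoint(path, state)
    return state


def demo():
    with tempfile.TemporaryDirectory() as workdir:
        path = os.path.join(workdir, "checkpoint.json")
        try:
            train(path, total_steps=100, checkpoint_every=10, fail_at=57)
        except RuntimeError:
            pass  # the simulated outage
        resumed_from = load_checkpoint(path)["step"]
        final = train(path, total_steps=100, checkpoint_every=10)
        return resumed_from, final["step"]


print(demo())  # (50, 100)
```

In this run the simulated outage hits at step 57, the job restarts from the checkpoint written at step 50, and only steps 51 through 56 are repeated. The checkpoint interval therefore bounds how much work a single interruption can cost.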

In stark contrast, AI inference—the stage where a model actually interacts with a user to provide an answer or perform a task—must be low-latency and highly available. When a user asks a chatbot a question or a self-driving car processes sensor data, the system must respond instantly. Any downtime in an inference environment directly impacts the user experience and can lead to immediate service failures. By separating these two functions, data center designers can build leaner, more efficient “training campuses” in remote areas while reserving expensive, high-redundancy facilities in urban centers for the inference workloads that truly require them.

Addressing the Economic and Operational Costs of Overengineering

The current market is defined by a fierce competition for power and a strained global supply chain, making the legacy habit of overengineering a major financial liability. Building every data center to the highest tier of redundancy locks up massive amounts of capital in hardware, such as oversized battery arrays and spare transformers, which often sit idle. This misallocation of funds not only drives up the cost of compute but also significantly slows the speed to market. In an era where being first to deploy a new model can mean the difference between market leadership and obsolescence, the time wasted installing unnecessary backup systems is a cost many companies can no longer afford.

Industry leaders now argue that every watt of power delivered to a site must be used with surgical precision. By shifting the focus from redundant hardware to maximized power density and liquid cooling efficiency, operators can pack more GPUs into the same physical footprint. This transition to precision design also reduces the operational complexity of the facility. Fewer backup components mean fewer systems to maintain and test, and fewer potential bottlenecks during the construction phase. Consequently, the industry is seeing a move toward “right-sized” infrastructure that provides exactly the amount of resilience required for the specific workload, no more and no less.

Regional Variations and the Impact of Power Proximity

Geography is becoming a primary tool in the quest for precision design, as the location of a facility now dictates its architectural priorities. While inference sites remain anchored near major population hubs to minimize the physical distance data must travel, massive training clusters are migrating to remote regions where power is cheaper and land is abundant. These rural campuses can afford to experiment with different uptime standards in exchange for access to renewable energy sources like wind or hydro. In these environments, the goal is scale; the buildings are designed as lightweight shells optimized for airflow and high-density racking, rather than heavy-duty fortresses built to withstand every possible contingency.

Furthermore, these regional variations are driving innovations in thermal management. Traditional air-cooling systems are proving insufficient for the intense heat generated by modern AI hardware, leading to a widespread adoption of liquid cooling technologies. In remote training hubs, designers can implement specialized cooling systems that take advantage of local climates, further reducing the energy overhead. This geographic specialization ensures that the global data center fleet is no longer a collection of identical boxes, but a tailored network of specialized assets that capitalize on local advantages to meet the global demand for intelligence.

Standardization and the Future of Modular Construction

To meet the staggering demand for new capacity, the industry is rapidly moving toward a future defined by modularity and standardized reference designs. The traditional method of building bespoke, one-off facilities is too slow and unpredictable for the current pace of AI development. Instead, operators are utilizing prefabricated “building blocks” that are manufactured in controlled factory environments and shipped to the site for rapid assembly. This shift provides better cost predictability and ensures that quality standards are maintained regardless of the local labor market. By adopting a modular mindset, companies can scale their infrastructure in increments, adding power and cooling capacity only as the workload grows.

This trend toward standardization also makes facilities more adaptable to future technological shifts. As GPU architectures evolve at a breakneck pace, the physical infrastructure must be able to keep up. Modular designs allow for faster retrofits and upgrades, ensuring that a building commissioned today does not become a bottleneck for the chips of tomorrow. Looking ahead, the focus will remain on creating a flexible physical layer that can support multiple generations of hardware. This approach effectively “future-proofs” the investment, allowing the data center to evolve alongside the software it supports rather than remaining a static and eventually obsolete asset.

Strategies for Navigating the New Infrastructure Landscape

For organizations looking to thrive in this new environment, the path forward requires a strategy of deep intentionality and workload auditing. Success in the current market depends on the ability to categorize applications by their specific needs rather than applying a blanket standard to the entire portfolio. Businesses should move toward a hybrid model, placing their massive training operations in high-density, cost-effective campuses while reserving high-redundancy, low-latency sites for customer-facing services. Additionally, embracing standardized modular components will be essential for meeting deployment timelines and managing the escalating costs of construction and hardware.
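
A workload audit of this kind could start from a rule as simple as the Python sketch below. Everything in it is a hypothetical illustration: the `Workload` fields, the five-minute outage threshold, and the site labels are assumptions chosen to mirror the hybrid model described above, not an established classification scheme.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    name: str
    customer_facing: bool
    can_resume_from_checkpoint: bool
    max_tolerable_outage_minutes: float


def recommend_site(workload: Workload) -> str:
    """Map a workload to a site type using simple, assumed rules."""
    # Assumed threshold: anything that cannot tolerate five minutes of
    # downtime is treated as needing a high-redundancy site.
    if workload.customer_facing or workload.max_tolerable_outage_minutes < 5:
        return "high-redundancy, low-latency site"
    if workload.can_resume_from_checkpoint:
        return "high-density training campus"
    return "standard facility (review manually)"


portfolio = [
    Workload("model-pretraining", False, True, 240),
    Workload("chat-inference", True, False, 1),
    Workload("nightly-reporting", False, False, 120),
]

for workload in portfolio:
    print(f"{workload.name}: {recommend_site(workload)}")
```

A real audit would weigh more factors, such as data gravity, regulatory constraints, and contractual service levels, but even a coarse rule like this makes the placement decision explicit instead of defaulting every workload to the highest tier.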

It is also vital for stakeholders to engage in closer collaboration with utility providers and local governments to secure power in strategic locations. As the competition for electricity intensifies, the ability to design facilities that are “grid-friendly”—capable of adjusting their load based on supply—will become a significant competitive advantage. Professionals should prioritize the implementation of liquid cooling and high-density power distribution as baseline requirements for all new projects. By treating resilience as a variable design choice rather than an immovable requirement, companies can maximize their return on investment and build a digital foundation that is both agile and economically sustainable.
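
One way to picture a “grid-friendly” facility is a scheduler that protects latency-sensitive inference load and lets deferrable training load absorb any shortfall in supply. The Python sketch below is a minimal illustration under that assumption; the function `plan_load` and its megawatt parameters are hypothetical and do not describe any real utility interface.

```python
def plan_load(available_mw, inference_mw, training_mw):
    """Serve inference demand first, then give training whatever supply remains."""
    if min(available_mw, inference_mw, training_mw) < 0:
        raise ValueError("power values must be non-negative")
    inference = min(inference_mw, available_mw)
    training = min(training_mw, available_mw - inference)
    return {"inference_mw": inference, "training_mw": training}


# Normal conditions: both workloads run at full demand.
print(plan_load(available_mw=150, inference_mw=30, training_mw=90))

# Constrained grid: training is throttled, inference is untouched.
print(plan_load(available_mw=100, inference_mw=30, training_mw=90))
```

Because training jobs can pause and resume from a checkpoint, they are the natural candidate for this kind of throttling, which is what makes resilience a variable design choice rather than a fixed requirement.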

Conclusion: Flexibility as the Core Value of Modern Design

The transition toward precision data center design reflects a significant maturation within the digital infrastructure industry. Designers and operators are moving away from the era of monolithic, high-redundancy fortresses and embracing a diverse ecosystem of specialized facilities. This evolution is proving essential for overcoming the critical power constraints and supply chain hurdles that define the middle of the decade. By aligning physical infrastructure with the actual economic and technical requirements of AI workloads, the industry can keep resource allocation efficient and purposeful.

The significance of this shift lies in the newfound ability to treat resilience as a deliberate strategic choice. High-density training hubs and resilient inference nodes are becoming the two pillars of a global network that prioritizes flexibility over rigid traditionalism. This architectural shift allows the data center industry to remain the robust, efficient backbone of the global technological landscape. Ultimately, the adoption of purpose-built design and modular construction provides the agility needed to support the next generation of digital growth, and it suggests that the most valuable asset in the age of intelligence is the willingness to adapt.