The sheer volume of AI output now being generated across the globe has changed how we design and deploy semiconductor technology. For several years, the industry was obsessed with the “training” phase, a high-stakes, resource-heavy marathon of teaching large language models using massive datasets. This era belonged to the high-end, general-purpose Graphics Processing Unit (GPU), which acted as the foundational engine for every major breakthrough in generative AI. However, as we move deeper into the commercialization of these technologies, the center of gravity has shifted toward “inference,” the process of running these pre-trained models to deliver real-time results to end users.

This transition marks a critical maturation point for the global economy. As companies move from experimental research to full-scale deployment, the hardware requirements are becoming more granular and demanding. The focus is no longer just on raw computational muscle; it is on how efficiently a model can generate text, recognize a face, or navigate an autonomous vehicle in a split second. Consequently, the semiconductor landscape is diversifying, giving rise to specialized silicon designed to handle the specific burdens of execution rather than the broad requirements of education.

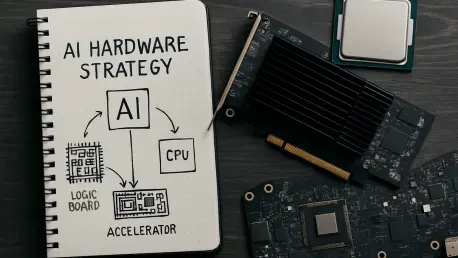

The Strategic Pivot Toward Real-World AI Deployment

The current semiconductor landscape is defined by a move away from the “Swiss Army knife” approach of the early AI boom. During the initial surge of development, the priority was to build the most capable foundational models possible, regardless of the energy or financial cost. This created a market dominated by a few key players who could supply the massive clusters needed for training. Today, however, the industry views training as a necessary “cost center”—the price of entry—while inference has emerged as the true “profit center” where revenue is actually generated through user interaction.

Understanding this historical trajectory reveals why the hardware market is no longer a monolithic entity. As we look at the deployment of AI in 2026 and toward 2028, the economic logic is reversing. If a company spends millions to train a model but cannot serve it to millions of customers efficiently, the investment fails. This reality is forcing a shift toward hardware that prioritizes lower latency and improved cost-efficiency, ensuring that the delivery of AI services remains a sustainable business model rather than a drain on corporate resources.

From Experimental Foundations to Commercial Reality

In the early stages of this technological revolution, the focus was almost exclusively on the training phase. Developers needed immense power to process trillions of data points over months, which necessitated heavy upfront investments in energy and compute infrastructure. This era established the GPU as the gold standard because its flexibility allowed researchers to pivot as model architectures evolved. There was little room for specialization when the “right” way to build a neural network was still a moving target.

The conditions that shaped that era have given way to a more stable technological environment. Because the primary architectures for large language models are now better understood, the industry can afford to trade some of that flexibility for raw efficiency. We have reached a tipping point where the volume of inference tasks is beginning to dwarf training workloads. This evolution explains the current push for specialized solutions that strip away the extra features required for training and focus solely on the high-speed execution required for customer-facing applications.

The Architectural Evolution of AI Silicon

The Economic Imperative: Latency and Throughput

A critical aspect of the current hardware evolution is the distinct financial role that inference plays compared with training. In the execution phase, the priorities are defined by the end-user experience. If an AI-driven customer service bot takes three seconds to respond, it loses its immediate value, which directly impacts a company’s bottom line. At the same time, every response has to be served at a cost the business can sustain, so the total cost of ownership (TCO) has become the most important metric for enterprise leaders. Even a 5% improvement in power efficiency can save a hyperscale data center millions of dollars over the lifecycle of its servers.
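
To make the power-efficiency arithmetic concrete, here is a rough sketch in Python. Every input is a hypothetical assumption chosen for illustration, not a figure from this article: a 50 MW IT load, a power usage effectiveness (PUE) of 1.2, electricity at $0.08 per kWh, and a five-year hardware lifecycle. The 5% efficiency gain is simplified to a 5% reduction in energy drawn for the same work.

```python
def lifetime_energy_savings(it_power_mw, pue, price_per_kwh, years, efficiency_gain):
    """Estimate electricity savings (USD) from a fractional cut in energy use.

    All inputs are illustrative assumptions, not measured data.
    """
    hours = years * 365 * 24
    # Facility energy = IT load scaled by PUE (cooling and power-delivery overhead).
    baseline_kwh = it_power_mw * 1000 * pue * hours
    baseline_cost = baseline_kwh * price_per_kwh
    return baseline_cost * efficiency_gain


# Hypothetical hyperscale site: 50 MW of IT load, PUE 1.2, $0.08/kWh, 5 years.
savings = lifetime_energy_savings(
    it_power_mw=50, pue=1.2, price_per_kwh=0.08, years=5, efficiency_gain=0.05
)
print(f"Estimated savings: ${savings:,.0f}")
```

Under these assumed inputs the estimate comes to roughly $10.5 million, which is consistent with the "millions of dollars" order of magnitude. Real savings depend heavily on local energy prices and actual utilization.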

The Rise of the XPU and Specialized ASICs

Building on this need for efficiency, recent market data indicates a significant diversification of the compute market. While GPUs still command a large share of data center spending, the growth trajectory for the next few years suggests a massive surge in the adoption of XPUs. These processors, which include custom accelerators and Application-Specific Integrated Circuits (ASICs), are growing at a rate that outpaces traditional CPUs and GPUs. This trend highlights a strategic move toward “lean” silicon that eliminates the architectural baggage of training-optimized chips to provide faster responses at a much lower power cost.

Overcoming the Memory Wall and Networking Bottlenecks

Beyond the processor itself, the industry is grappling with the “memory wall”: the physical limit on how fast data can move between the processor and its memory. This bottleneck is especially pronounced in inference, where model data must be fetched and processed in real time. Innovation is now coming from startups developing novel memory architectures that outperform standard high-bandwidth memory at a lower price point. Furthermore, regional hubs like South Korea are producing a new generation of Neural Processing Units (NPUs) that rethink how data flows through a system, countering the common misconception that AI performance depends solely on the speed of the chip.
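
A common back-of-envelope way to see the memory wall is to compare two ceilings on single-stream text generation: how fast the chip can read the model's weights, and how fast it can do the arithmetic. The sketch below assumes a simplified model in which every generated token requires reading all weights once and performing about two floating-point operations per parameter. It ignores batching, caching, and the KV cache. The chip and model figures are hypothetical, not taken from any vendor.

```python
def decode_ceilings(params_billion, bytes_per_param, mem_bandwidth_gbs, peak_tflops):
    """Return (memory_bound, compute_bound) tokens/s for batch-1 decoding.

    Simplified roofline-style estimate under stated assumptions.
    """
    params = params_billion * 1e9
    model_bytes = params * bytes_per_param
    # Ceiling 1: all weights are streamed from memory once per token.
    memory_bound = mem_bandwidth_gbs * 1e9 / model_bytes
    # Ceiling 2: roughly 2 FLOPs per parameter per token.
    compute_bound = peak_tflops * 1e12 / (2 * params)
    return memory_bound, compute_bound


# Hypothetical 70B-parameter model in 16-bit weights on a chip with
# 2,000 GB/s of memory bandwidth and 400 TFLOPS of peak compute.
memory_bound, compute_bound = decode_ceilings(70, 2, 2000, 400)
print(f"memory-bound ceiling:  {memory_bound:.1f} tokens/s")
print(f"compute-bound ceiling: {compute_bound:.1f} tokens/s")
```

With these assumed numbers the memory ceiling is about 14 tokens per second while the compute ceiling is in the thousands, so a faster arithmetic unit alone would not help. This is the sense in which inference performance is not solely about chip speed.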

Future Trends in the Inference Landscape

The landscape for the coming years will be shaped by the move toward vertical integration among cloud service providers. Hyperscalers are increasingly developing their own proprietary silicon to reduce their reliance on external vendors and to offer better price-performance ratios to their clients. This “in-house” approach allows for a level of software-hardware co-design that was previously impossible. We are also seeing a “pairing” strategy become the standard, where high-end GPUs are kept for the heaviest training tasks while a fleet of specialized chips manages the daily execution of AI across global networks.

Regulatory and environmental constraints are also playing a major role in this shift. As power-hungry GPU clusters reach the limits of what standard electrical grids can support, there is an urgent need for “green” inference chips. This power budget bottleneck is accelerating the adoption of silicon that can operate within the cooling and energy limits of existing infrastructure. We should expect to see significant consolidation in the market as established giants acquire agile startups to integrate specialized “software-defined hardware” capabilities into their existing product lines, ensuring they can handle the specific data flows of modern AI.

Strategic Recommendations for an Inference-First World

The analysis of the current market highlights several takeaways for businesses navigating this transition. It is essential to prioritize the total cost of ownership over raw peak performance when selecting hardware for production environments. For organizations moving AI applications into the hands of users, the focus should be on “inference-centric” ROI. This means identifying hardware that delivers the fastest responses at the lowest possible energy cost. Companies should diversify their hardware portfolios rather than relying on a “one-size-fits-all” approach that may lead to over-provisioning and wasted capital.
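
The point about total cost of ownership versus peak performance can be illustrated with a small cost-per-token comparison. This is a minimal sketch under stated assumptions: the two accelerators, their prices, power draws, and throughputs are invented for illustration, and the model counts only hardware amortization and electricity, charged at full power regardless of utilization.

```python
from dataclasses import dataclass


@dataclass
class Accelerator:
    name: str
    purchase_price_usd: float
    power_kw: float
    tokens_per_second: float


def cost_per_million_tokens(acc, lifetime_years=4.0, price_per_kwh=0.08,
                            pue=1.2, utilization=0.6):
    """Hardware amortization plus electricity, per one million served tokens."""
    hours = lifetime_years * 365 * 24
    capex_per_hour = acc.purchase_price_usd / hours
    energy_per_hour = acc.power_kw * pue * price_per_kwh
    tokens_per_hour = acc.tokens_per_second * 3600 * utilization
    return (capex_per_hour + energy_per_hour) / tokens_per_hour * 1e6


# Hypothetical devices: the GPU has higher peak throughput,
# the ASIC is cheaper to buy and draws less power.
gpu = Accelerator("general-purpose GPU", 30_000, 1.0, 3000)
asic = Accelerator("inference ASIC", 12_000, 0.4, 2000)

for acc in (gpu, asic):
    print(f"{acc.name}: ${cost_per_million_tokens(acc):.3f} per million tokens")
```

In this invented scenario the slower chip serves tokens at a lower cost, which is the kind of result a peak-performance comparison would miss. A fuller model would add cooling, networking, staffing, and software costs.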

Moreover, the programmability of hardware remains a vital safeguard. While specialized ASICs offer the highest efficiency, the rapid pace of model evolution means that software-defined flexibility is still necessary to avoid obsolescence. For businesses operating at the edge, such as retail chains or medical facilities, the best path forward involves investing in hardware that fits within existing infrastructure constraints. Avoiding the need for expensive facility upgrades, like liquid cooling systems, allows for a much faster and more profitable scale-up of AI operations across distributed locations.

The Maturation of AI Hardware

The overarching trend in the AI chip industry is a transition from a centralized, GPU-heavy model to a diversified and highly efficient ecosystem. While the versatility of the general-purpose GPU maintains its prestige for complex research, it is no longer the only viable solution for the massive volume of inference tasks that now define the market. This shift represents the true maturation of the industry, moving away from high-level speculation and toward the same cold, hard metrics that govern any other business tool: cost, efficiency, and reliability.

Ultimately, the significance of this transition lies in its ability to democratize high-performance AI. As these technologies become a standard component of enterprise operations, the hardware powering them has to scale sustainably. The winners of this era will be those who deliver inference-centric value, allowing AI to move out of the massive, specialized data center and into the fabric of daily life. The hardware strategy for the future is built not on raw power alone, but on the intelligent, efficient execution of the models that now define the global economy.