The modern data center has reached a thermal paradox where the very water used to prevent silicon from melting is now warmer than the average backyard swimming pool. As artificial intelligence continues to expand its footprint across the global economy, the infrastructure supporting these massive computations is undergoing a radical re-engineering. The transition away from traditional air-conditioned rooms toward high-temperature liquid cooling is no longer a choice for the elite few but a fundamental requirement for the “AI factories” currently under construction. This shift represents a departure from decades of cooling philosophy, moving the industry toward a standard where 45 degrees Celsius (113 degrees Fahrenheit) is the new baseline for operational efficiency.

The 113-Degree Tipping Point: Cooling Tomorrow’s AI with Hot Water

The arrival of Nvidia’s Vera Rubin architecture has solidified a trend that was once considered a laboratory curiosity. By designing processors that can thrive while being cooled by 45-degree Celsius water, the industry has crossed a critical thermal threshold. This specific temperature is significant because it sits comfortably above the ambient air temperature in the majority of global climates. When the cooling medium is hotter than the outside air, the need for energy-intensive mechanical refrigeration—the massive chillers that have defined data center skylines for thirty years—virtually disappears.

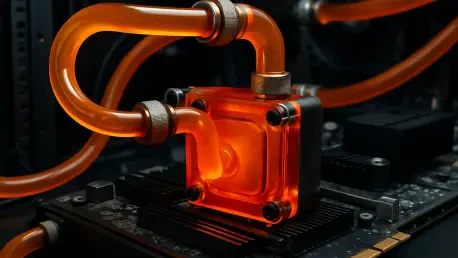

This transition transforms the data center from a fragile environment requiring constant refrigeration into a robust industrial facility. The “AI Factory” model demands a density of computation that makes traditional air-cooled racks look like relics of a simpler era. In these new environments, the goal is no longer to provide a comfortable “meat locker” atmosphere for the hardware but to move heat as fast as the chips can generate it. Utilizing water at temperatures that would be “hot” to a human touch allows for a much more efficient transfer of energy, ensuring that the most advanced processors in the world do not throttle their performance during peak workloads.

As these facilities scale, the reliance on high-temperature water fundamentally changes the economics of data center site selection. Locations that were previously considered too warm for traditional data centers are now viable because the cooling systems do not need to fight against the local climate. Instead, they use the temperature differential between the 45-degree internal loop and the outdoor environment to shed heat naturally. This shift is the cornerstone of a broader movement to make AI compute sustainable, reliable, and geographically diverse.
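The temperature-differential argument can be made concrete with a small check. The sketch below assumes a dry cooler can bring water down to roughly the outdoor air temperature plus a fixed "approach" margin; the 8 K approach, the 18-degree legacy setpoint, and the sample outdoor readings are illustrative assumptions, not figures from any vendor or site.

```python
# Sketch: can a dry cooler alone deliver the required supply temperature?
# The 8 K approach, the 18 C legacy setpoint, and the sample readings
# are illustrative assumptions, not measured data.

def can_free_cool(outdoor_c: float, supply_c: float = 45.0, approach_k: float = 8.0) -> bool:
    """True when outdoor air plus the heat-exchanger approach is at or below the supply setpoint."""
    return outdoor_c + approach_k <= supply_c

sample_outdoor_c = [12.0, 25.0, 33.5, 38.0, 41.0]  # hypothetical hourly readings

hot_loop_hours = sum(can_free_cool(t) for t in sample_outdoor_c)
chilled_loop_hours = sum(can_free_cool(t, supply_c=18.0) for t in sample_outdoor_c)

print(f"45 C loop: {hot_loop_hours} of {len(sample_outdoor_c)} sample hours without chillers")
print(f"18 C loop: {chilled_loop_hours} of {len(sample_outdoor_c)} sample hours without chillers")
```

Under these assumptions the warm loop stays chiller-free until outdoor air passes 37 degrees Celsius, while the colder setpoint needs mechanical help even on a mild day.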

Why the Chill is Vanishing: The Drivers Behind the Thermal Pivot

The primary catalyst for this cooling evolution is the sheer physical limitation of air as a heat transfer medium. Traditional fans and air-conditioning units are struggling to cope with rack densities that have increased as much as 30-fold over the last few years. While a standard enterprise server rack might have drawn 5 to 10 kilowatts a decade ago, modern AI clusters frequently exceed 100 kilowatts per rack. At these levels, the air needed to carry the heat away would have to move through the rack at speeds approaching those of a hurricane, which is neither practical for the hardware nor sustainable for the energy grid.
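A back-of-the-envelope calculation shows why air runs out of headroom. The sketch below uses the standard sensible-heat relation (heat load = density × specific heat × volumetric flow × temperature rise) with textbook properties for air near room temperature; the 15 K air temperature rise across the rack is an assumption chosen for illustration.

```python
# Rough airflow needed to remove a rack's heat with air alone.
# Property values are textbook approximations for air near room temperature;
# the 15 K temperature rise is an illustrative assumption.
AIR_DENSITY = 1.2           # kg/m^3
AIR_SPECIFIC_HEAT = 1005.0  # J/(kg*K)
M3S_TO_CFM = 2118.88        # cubic metres per second -> cubic feet per minute

def required_airflow_m3_per_s(heat_load_w: float, delta_t_k: float) -> float:
    """Volumetric airflow that carries heat_load_w at an air temperature rise of delta_t_k."""
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    return heat_load_w / (AIR_DENSITY * AIR_SPECIFIC_HEAT * delta_t_k)

for load_kw in (10, 100):
    flow = required_airflow_m3_per_s(load_kw * 1000.0, delta_t_k=15.0)
    print(f"{load_kw:>3} kW rack: {flow:.2f} m^3/s (~{flow * M3S_TO_CFM:,.0f} CFM)")
```

With these inputs a 100-kilowatt rack needs about 5.5 cubic metres of air per second, ten times the flow of a 10-kilowatt rack, all of it forced through the same rack footprint.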

Liquid cooling is experiencing a renaissance that traces its lineage back to the IBM mainframes of the 1960s, but with a modern twist toward standardization. In the past, water cooling was a bespoke, expensive solution reserved for the world’s most powerful supercomputers. Today, the complexity of managing these systems has been simplified by modular infrastructure that can be deployed at scale. The transition is driven by the realization that water can carry roughly 3,000 times more heat than the same volume of air, allowing for a compact footprint that maximizes the expensive real estate of the data center floor.
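One way to read the "roughly 3,000 times" figure is as a ratio of volumetric heat capacities, meaning how much heat a cubic metre of each fluid absorbs per degree of temperature rise. The sketch below uses textbook property values near room temperature and an assumed 10 K water temperature rise; it lands in the same order of magnitude as the figure quoted above.

```python
# Volumetric heat capacity of water vs. air, using textbook values near 25 C.
WATER_DENSITY = 997.0         # kg/m^3
WATER_SPECIFIC_HEAT = 4180.0  # J/(kg*K)
AIR_DENSITY = 1.2             # kg/m^3
AIR_SPECIFIC_HEAT = 1005.0    # J/(kg*K)

water_vol_heat_capacity = WATER_DENSITY * WATER_SPECIFIC_HEAT  # J/(m^3*K)
air_vol_heat_capacity = AIR_DENSITY * AIR_SPECIFIC_HEAT        # J/(m^3*K)
ratio = water_vol_heat_capacity / air_vol_heat_capacity

def water_flow_l_per_min(heat_load_w: float, delta_t_k: float) -> float:
    """Water flow (litres per minute) that carries heat_load_w at a temperature rise of delta_t_k."""
    flow_m3_per_s = heat_load_w / (water_vol_heat_capacity * delta_t_k)
    return flow_m3_per_s * 1000.0 * 60.0

print(f"Water holds about {ratio:,.0f}x more heat per unit volume than air")
# The 10 K rise across the rack is an illustrative assumption.
print(f"100 kW rack at 10 K rise: {water_flow_l_per_min(100000.0, 10.0):.0f} L/min of water")
```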

Environmental mandates and the escalating cost of resources are also forcing the hand of data center operators. Traditional evaporative cooling systems can consume millions of gallons of water per day, a practice that has become socially and politically untenable in many regions. High-temperature liquid cooling offers a path toward “net-zero” water usage by utilizing closed-loop systems that reject heat through radiators rather than evaporation. This dual crisis of energy consumption and water scarcity has turned a niche engineering preference into a mandatory global infrastructure standard.

The Mechanics of the High-Temperature Revolution

The most visible sign of this revolution is the disappearance of the mechanical chiller from the data center equipment yard. By embracing high-temperature water, facilities can utilize “free cooling” or ambient air economization for most or all of the year, depending on the local climate. Heat exchangers on the roof of the building take the heated water returning from the server racks and use large, energy-efficient fans to pass outdoor air over the coils, cooling it back toward the 45-degree supply temperature. This process eliminates the compressor-based refrigeration cycles that typically account for 30 to 40 percent of a data center’s total energy bill, drastically improving the Power Usage Effectiveness (PUE) of the facility.
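PUE is the ratio of total facility power to the power delivered to IT equipment, so a value of 1.0 would mean zero overhead. The sketch below compares two hypothetical facilities with the same IT load; the cooling and overhead figures are placeholder assumptions chosen to illustrate the arithmetic, not measurements from any real site.

```python
# PUE = total facility power / IT power.
# All load figures below are hypothetical placeholders.

def pue(it_kw: float, cooling_kw: float, other_overhead_kw: float) -> float:
    """Power Usage Effectiveness for the given IT, cooling, and other overhead loads."""
    if it_kw <= 0:
        raise ValueError("it_kw must be positive")
    return (it_kw + cooling_kw + other_overhead_kw) / it_kw

IT_LOAD_KW = 1000.0
OTHER_OVERHEAD_KW = 100.0  # assumed lighting, power conversion losses, etc.

chiller_based = pue(IT_LOAD_KW, cooling_kw=400.0, other_overhead_kw=OTHER_OVERHEAD_KW)
dry_cooler_based = pue(IT_LOAD_KW, cooling_kw=80.0, other_overhead_kw=OTHER_OVERHEAD_KW)

print(f"Chiller-based plant:    PUE {chiller_based:.2f}")
print(f"Dry-cooler-based plant: PUE {dry_cooler_based:.2f}")
```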

Furthermore, the heat being removed from the chips is no longer treated as a waste product to be discarded but as a commodity with tangible value. When water leaves a server rack at 50 or 60 degrees Celsius, it contains high-grade thermal energy that can be easily repurposed. Across Europe and parts of North America, data centers are now being integrated into district heating systems, providing warmth to nearby campus buildings, residential apartments, and public swimming facilities. The Leibniz Supercomputing Center provides a definitive case study in this circular energy economy, demonstrating how repurposing exhaust heat can save over $1.25 million in annual utility costs while reducing the facility’s carbon footprint.

Innovation is also pushing toward the frontier of two-phase dielectric cooling, where liquids are designed to boil at the surface of the chip. This phase-change process absorbs significantly more energy than simply heating up a liquid, allowing for even higher thermal thresholds. Systems operating at 60-degree Celsius thresholds offer an unexpected secondary benefit: the heat is high enough to naturally control microbial growth within the plumbing. This reduces the need for harsh chemical treatments and simplifies the long-term maintenance of the liquid loops, all while using pumps that require significantly less power than traditional water-based configurations.
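The advantage of boiling over simple heating comes down to latent versus sensible heat. The sketch below compares how much fluid must pass a 1-kilowatt chip in each mode; the specific heat, latent heat, and 10 K temperature rise are placeholder values for a hypothetical dielectric fluid, not data for any particular product.

```python
# Sensible (single-phase) vs. latent (two-phase) heat pickup for a
# hypothetical dielectric fluid. All property values are placeholders.
SPECIFIC_HEAT = 1100.0  # J/(kg*K), assumed
LATENT_HEAT = 100000.0  # J/kg, assumed heat of vaporization

def single_phase_mass_flow(heat_load_w: float, delta_t_k: float) -> float:
    """kg/s of liquid needed when heat is absorbed only by warming the liquid."""
    return heat_load_w / (SPECIFIC_HEAT * delta_t_k)

def two_phase_mass_flow(heat_load_w: float) -> float:
    """kg/s of liquid needed when heat is absorbed only by boiling the liquid."""
    return heat_load_w / LATENT_HEAT

chip_w = 1000.0
single = single_phase_mass_flow(chip_w, delta_t_k=10.0)
boiling = two_phase_mass_flow(chip_w)

print(f"Single-phase: {single:.4f} kg/s, two-phase: {boiling:.4f} kg/s")
print(f"Two-phase needs about {single / boiling:.1f}x less mass flow under these assumptions")
```

A lower mass flow for the same heat load is one reason the pumps in such a loop can be smaller.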

Industry Perspectives and the Economic Reality of Liquid Adoption

Expert teams at organizations like Lenovo have spent years refining their “Neptune” liquid cooling technology to prove that high-temperature systems do not compromise hardware longevity. The consensus among engineers is that as long as the temperature is consistent and the flow rate is managed, chips remain well within their safe operating envelopes. In fact, removing the vibration and mechanical stress caused by high-speed cooling fans can actually improve the reliability of the servers over time. This technological maturity is giving confidence to conservative enterprise customers who were previously hesitant to bring liquids into their server rooms.

From a financial perspective, the shift follows a “higher upfront, lower long-term” investment model. While the initial capital expenditure for direct-to-chip plumbing and specialized racks is higher than for traditional air-cooled setups, the operational savings are substantial. Direct-to-chip cooling systems typically deliver a 31 percent reduction in total power consumption by eliminating fans and chillers. Market projections indicate that the cooling infrastructure sector will leap from a $3 billion niche to a $7 billion industry standard by 2029, as these systems now frequently pay for themselves within three years.
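The "higher upfront, lower long-term" trade-off reduces to a simple payback calculation. The sketch below applies the 31 percent power reduction cited above to a hypothetical facility; the baseline load, electricity price, and capital premium are assumptions picked for illustration only.

```python
# Simple payback period for a liquid-cooling retrofit.
# Only the 31 percent reduction comes from the article; the baseline load,
# electricity price, and capital premium are hypothetical.
HOURS_PER_YEAR = 8760

def annual_savings_usd(baseline_kw: float, reduction: float, price_per_kwh: float) -> float:
    """Yearly energy-cost savings from cutting baseline_kw by the given fraction."""
    return baseline_kw * reduction * HOURS_PER_YEAR * price_per_kwh

def payback_years(capex_premium_usd: float, savings_per_year_usd: float) -> float:
    """Years needed for the savings to repay the extra upfront cost."""
    if savings_per_year_usd <= 0:
        raise ValueError("savings must be positive")
    return capex_premium_usd / savings_per_year_usd

savings = annual_savings_usd(baseline_kw=1000.0, reduction=0.31, price_per_kwh=0.10)
years = payback_years(capex_premium_usd=750000.0, savings_per_year_usd=savings)

print(f"Annual savings: ${savings:,.0f}")
print(f"Payback period: {years:.1f} years")
```

With these inputs the premium is recovered in just under three years; a cheaper electricity tariff or a larger capital premium would stretch that window accordingly.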

The alignment of the vendor ecosystem is perhaps the strongest indicator of this shift’s permanence. Major infrastructure providers such as Supermicro, Vertiv, and Schneider Electric have completely re-engineered their product lines to support the 45-degree Celsius standard. This alignment ensures that operators have access to a standardized supply chain of connectors, manifolds, and coolant distribution units (CDUs). As these components become commoditized, the “liquid tax” that once made these systems prohibitively expensive is steadily evaporating, making high-temperature cooling the default choice for any facility housing modern AI accelerators.

Navigating the Transition: Strategies for Implementation and Oversight

Implementing these systems requires a thoughtful approach to the human and operational elements of the data center. A facility running a 45-degree Celsius primary cooling loop will inevitably experience higher ambient floor temperatures, which can reach 35 degrees Celsius (95 degrees Fahrenheit) or higher. This creates a “hot to the touch” environment that necessitates new safety protocols and perhaps specialized clothing for technicians. Bridging the skills gap is another critical hurdle, as traditional IT staff must be trained in the maintenance of complex plumbing, leak detection systems, and the chemistry of dielectric fluids to ensure the integrity of the mission-critical hardware.

Infrastructure planning now involves a difficult choice between retrofitting legacy sites and building greenfield facilities. While some existing data centers can be modified to support a hybrid of air and liquid cooling, the structural and power requirements of high-density AI clusters often favor new builds. These dedicated facilities are designed with the massive exterior radiator arrays and reinforced flooring needed to handle the weight of liquid-filled racks. For many organizations, the strategy involves identifying specific “AI zones” within their portfolio that can be fully transitioned to high-temperature standards while leaving traditional workloads in legacy air-cooled halls.

Standardization remains the final frontier for future-proofing these investments. As the industry moves toward 2029, the establishment of open frameworks for quick-connect couplings and fluid specifications will be essential to prevent vendor lock-in. Facility operators are increasingly focused on the break-even point, balancing the initial costs against the long-term gains in density and sustainability. The transition to high-temperature liquid cooling is proving to be a necessary evolution in the face of the AI revolution, fundamentally altering how the world perceives the relationship between massive computation and the physical environment. The industry is recognizing that the path to true digital intelligence runs not through refrigeration but through the sophisticated management of heat itself. That realization is driving the decommissioning of legacy cooling systems in favor of closed-loop architectures that support the next generation of global innovation.