The modern digital economy has reached a threshold where the power drawn by massive artificial intelligence clusters, and the heat they reject, threatens to outpace the electrical and cooling capacity of traditional urban infrastructure. This thermal bottleneck forces engineers to rethink the geographic placement of the world’s most powerful computers, shifting the focus from proximity to users toward proximity to naturally cold environments. In frigid regions such as the Arctic Circle and Northern Europe, the ambient air is a cheap and abundant heat sink for most of the year, able to absorb the heat produced by high-performance hardware at gigawatt scale. By leveraging these climatic conditions, operators are finding they can scale AI training workloads more aggressively while reducing their environmental footprint. This shift is not merely a trend for 2026 but a fundamental restructuring of where the heaviest compute behind the global internet is built to handle the relentless surge of neural network processing.

Environmental Synergy: The Power of Free Cooling

The core technological advantage of placing data centers in northern latitudes lies in free cooling, which removes most of the need for energy-intensive mechanical chillers. In a standard facility located in a temperate or warm climate, a large share of total power consumption is diverted away from computation just to keep the server racks from overheating. By contrast, a facility in Sweden or Finland can filter and circulate outside air, which stays cold for most of the year, to maintain safe operating temperatures for high-density GPU clusters. This lowers the Power Usage Effectiveness (PUE) ratio, defined as total facility power divided by the power delivered to IT equipment, bringing it closer to the ideal of 1.0, where every watt of electricity goes to processing data. As global power grids become increasingly strained by the transition to electric vehicles and electric home heating, this ability to operate with minimal cooling overhead provides a critical safety valve for the continued expansion of the tech sector.
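The PUE ratio described above is simple enough to compute directly. The sketch below is illustrative only: the function names and both example facilities are hypothetical, and the power figures are assumptions chosen to show the contrast, not measurements from any real site.

```python
def pue(total_facility_kw: float, it_load_kw: float) -> float:
    """Power Usage Effectiveness: total facility power divided by IT power."""
    if it_load_kw <= 0:
        raise ValueError("IT load must be positive")
    return total_facility_kw / it_load_kw


def overhead_share(pue_value: float) -> float:
    """Fraction of total facility power that never reaches IT equipment."""
    return 1.0 - 1.0 / pue_value


# Hypothetical facilities, both carrying a 10,000 kW IT load (assumed values).
chiller_site = pue(total_facility_kw=15_000, it_load_kw=10_000)
free_cooled_site = pue(total_facility_kw=11_000, it_load_kw=10_000)

print(f"chiller site PUE:     {chiller_site:.2f}")
print(f"free-cooled site PUE: {free_cooled_site:.2f}")
print(f"overhead share, chiller site:     {overhead_share(chiller_site):.1%}")
print(f"overhead share, free-cooled site: {overhead_share(free_cooled_site):.1%}")
```

Under these assumed numbers, a third of the chiller site's power goes to overhead, against roughly a tenth at the free-cooled site. Note that overhead includes power distribution losses and lighting as well as cooling, so PUE alone does not isolate the cooling share.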

Beyond the atmospheric benefits, countries like Iceland and Norway offer an energy profile that combines natural cooling with nearly 100 percent renewable electricity. Iceland, for instance, draws on hydroelectric and geothermal plants to provide a steady baseload of electricity that is unaffected by fluctuations in wind or solar availability. Similarly, Norway’s extensive hydroelectric network provides the low-cost, high-capacity energy needed to run the large fans and liquid-to-air heat exchangers used in modern hyperscale facilities. This synergy between climate and geography allows companies like Green Mountain and Verne Global to market zero-carbon computing services to enterprises under heavy pressure to meet sustainability targets. By locating high-intensity workloads in these regions, the industry is showing that the massive energy demands of modern AI do not have to result in a large carbon footprint, provided the location is chosen with strategic foresight.

Economic Realities: Balancing Operational Costs and Latency

The financial justification for moving data centers to the Arctic is anchored in the scale of utility savings realized when traditional cooling infrastructure is removed from the equation. For a one-gigawatt facility, operating in a region that is thirty degrees cooler than a major tech hub like Texas can result in savings exceeding $150 million annually in electricity costs. These funds are frequently reinvested into the next generation of silicon, allowing operators to refresh their hardware faster than competitors burdened with high overhead costs. Furthermore, reduced water usage is becoming a primary economic driver as many municipalities begin to levy heavy water taxes on facilities that evaporate millions of gallons daily for cooling. By avoiding the need for evaporative towers, cold-climate centers insulate themselves from the regulatory and ethical risks associated with water scarcity in a warming world, which supports long-term operational stability.
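A rough way to sanity-check a savings figure of this size is to multiply the IT load by the difference in PUE between the two sites, the hours in a year, and the electricity price. The sketch below does that; every input is an assumption picked for illustration (a 1,000 MW IT load, PUE values of 1.4 and 1.1, and a flat price of $60 per MWh), and the function name is hypothetical. None of these numbers come from an operator's accounts.

```python
HOURS_PER_YEAR = 8760  # non-leap year


def annual_overhead_savings_usd(
    it_load_mw: float,
    pue_warm: float,
    pue_cold: float,
    price_usd_per_mwh: float,
) -> float:
    """Yearly electricity cost avoided by running the same IT load at a lower PUE."""
    # Overhead power avoided, in MW: the IT load times the gap between the PUEs.
    avoided_mw = it_load_mw * (pue_warm - pue_cold)
    return avoided_mw * HOURS_PER_YEAR * price_usd_per_mwh


# All inputs below are illustrative assumptions.
savings = annual_overhead_savings_usd(
    it_load_mw=1000.0,
    pue_warm=1.4,
    pue_cold=1.1,
    price_usd_per_mwh=60.0,
)
print(f"estimated annual savings: ${savings / 1e6:.1f} million")
```

With these assumed inputs the estimate comes to about $158 million per year, the same order of magnitude as the figure quoted above. The result scales linearly with each input, so a smaller PUE gap or a cheaper tariff shrinks it proportionally.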

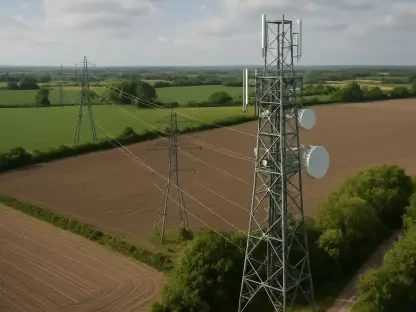

While the cost savings are substantial, the transition to remote northern hubs introduces complex logistical challenges, particularly regarding data latency and physical infrastructure deployment. The physical distance between a server in the Arctic and a user in London or New York adds propagation delay that makes these locations poorly suited to latency-sensitive applications like real-time gaming or high-frequency stock trading. Building these facilities also requires immense upfront capital to lay subsea fiber-optic cables and establish behind-the-meter power connections in areas that may lack a robust existing grid. Heavy snowfall and extreme icing conditions can further complicate the delivery of sensitive IT equipment and make routine hardware maintenance harder during the long winter months. Consequently, engineers must carefully weigh the efficiency gains of free cooling against the added complexity of managing a geographically distributed network.
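The latency penalty has a hard physical floor that can be estimated from distance alone, because light in optical fiber travels at roughly two thirds of its speed in a vacuum. The sketch below computes that floor. The refractive index of 1.47 is a typical value for silica fiber used here as an assumption, the 2,500 km route length is a hypothetical example, and the function name is illustrative.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458  # speed of light in a vacuum
FIBER_REFRACTIVE_INDEX = 1.47      # assumed typical value for silica fiber


def fiber_rtt_ms(route_km: float) -> float:
    """Lower bound on round-trip time over a fiber route of the given length."""
    speed_in_fiber_km_s = SPEED_OF_LIGHT_KM_S / FIBER_REFRACTIVE_INDEX
    one_way_s = route_km / speed_in_fiber_km_s
    return 2 * one_way_s * 1000.0


# Hypothetical route length between a northern hub and a distant city.
route_km = 2500.0
print(f"minimum round trip over {route_km:.0f} km: {fiber_rtt_ms(route_km):.1f} ms")
```

For the assumed 2,500 km route this gives a floor of roughly 25 ms per round trip. Real paths are longer than the straight-line distance and add switching and queuing delay, so measured latency will be higher than this bound.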

Strategic Diversification: The Future of Global Compute Distribution

As we progress through 2026, a clear hierarchy is emerging in the way data centers are utilized based on their geographic location and the nature of the workloads they handle. Cold-climate regions are increasingly becoming the specialized domain for batch processing and the training of large language models, tasks that require weeks or months of sustained compute but are not sensitive to millisecond delays. Meanwhile, urban edge data centers continue to handle the inference side of AI, which includes the immediate responses to user queries where proximity to the end user remains the most critical factor. This two-tiered approach allows the digital economy to scale without overwhelming the infrastructure of major cities, effectively offloading the most energy-intensive tasks to the frontier. This strategic split helps keep the massive compute requirements of AI development from competing directly with residential energy needs, creating a more balanced global digital ecosystem for the future.
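The two-tier split described above amounts to a placement rule keyed on how much delay a workload can tolerate. The following is a minimal sketch of such a rule, not a description of any operator's scheduler: the `Workload` type, the tier labels, the latency budgets, and the 40 ms round-trip figure for the remote hub are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    name: str
    latency_budget_ms: float  # longest round trip the workload can tolerate


def place(workload: Workload, remote_rtt_ms: float) -> str:
    """Send a workload to the remote hub only if it can absorb the extra delay."""
    if workload.latency_budget_ms >= remote_rtt_ms:
        return "cold-climate hub"
    return "urban edge"


# Assumed round-trip time from users to the remote hub, in milliseconds.
REMOTE_RTT_MS = 40.0

workloads = [
    Workload("model training run", latency_budget_ms=float("inf")),
    Workload("nightly batch job", latency_budget_ms=60_000.0),
    Workload("interactive inference", latency_budget_ms=20.0),
]

for w in workloads:
    print(f"{w.name}: {place(w, REMOTE_RTT_MS)}")
```

A single threshold keeps the example readable. A real placement decision would also weigh data transfer volume, power price, and available capacity at each site.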

The migration toward the coldest corners of the planet is proving to be a pivotal shift in how the technology industry manages its explosive growth in the mid-2020s. By aligning server placement with the natural thermal advantages of the Arctic and Northern Europe, operators are bypassing the efficiency limits that previously hindered the expansion of massive AI clusters. This evolution requires more resilient hardware designs and specialized training hubs that function independently of urban constraints. The industry is demonstrating that the path to sustainable high-performance computing requires a departure from traditional building models toward an integrated approach that treats the environment as a functional component of the machine. The lessons from these northern implementations offer a blueprint for future infrastructure that expands global digital capacity without compromising the ecological health of the world.