Matilda Bailey is a distinguished networking specialist who has dedicated her career to the evolution of digital infrastructure, with a particular focus on the intersection of cellular, wireless, and next-generation solutions. As data centers transition from isolated private assets into the backbone of national security and the global economy, her insights provide a critical bridge between traditional IT and the complex realities of modern geopolitical risk. In this discussion, we explore the systemic vulnerabilities facing hyperscale facilities, the delicate synchronization between massive AI clusters and the electrical grid, and the emerging threat models that target the dependencies surrounding our digital world rather than just the facilities themselves.

Recent drone strikes on regional infrastructure have demonstrated that data centers can be disrupted without a direct breach of the facility. How should operators rethink physical security to protect external power and connectivity, and what specific technical redundancies are necessary to maintain uptime during such kinetic attacks?

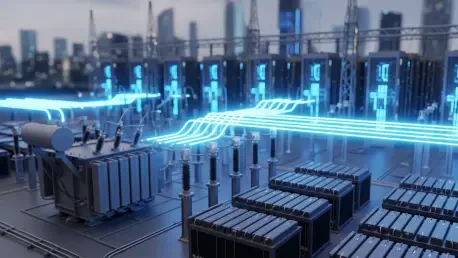

The recent strikes in the Middle East, particularly those affecting facilities tied to AWS in the UAE and Bahrain, prove that the facility wall is no longer the definitive edge of the security perimeter. We are seeing a shift where digital infrastructure is no longer insulated from geopolitical instability, and operators must realize that their greatest vulnerabilities often lie hundreds of yards away at a substation or along a fiber corridor. To counter this, we need to move beyond 20th-century models of “fences and guards” and invest in distributed redundancy that assumes the local grid or primary connectivity will fail. This means implementing multi-source power feeds from geographically diverse substations and creating mesh-networked fiber routes that do not share the same physical trenches. It’s about ensuring that even if supporting infrastructure is degraded by a kinetic event, the facility has enough local “islanding” capability—through massive on-site energy storage and satellite backhaul—to remain operational while the primary systems are restored.
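To make the point about fiber routes that "do not share the same physical trenches" concrete, here is a minimal sketch of a shared-risk check. The route names, trench identifiers, and the `shared_risk_segments` helper are hypothetical and chosen only for illustration; a real audit would draw on surveyed route data from carriers and utilities.

```python
from itertools import combinations


def shared_risk_segments(routes):
    """Return {(route_a, route_b): shared_segments} for every overlapping pair.

    `routes` maps a route name to the set of physical segments it traverses
    (trenches, conduits, substations). All identifiers are illustrative.
    """
    overlaps = {}
    # Sort by name so the pair keys come out in a stable, predictable order.
    for (name_a, segs_a), (name_b, segs_b) in combinations(sorted(routes.items()), 2):
        common = set(segs_a) & set(segs_b)
        if common:
            overlaps[(name_a, name_b)] = common
    return overlaps


# Hypothetical inventory: the "backup" route quietly shares a trench with "north".
routes = {
    "fiber-north": {"trench-A", "trench-B"},
    "fiber-east": {"trench-C", "trench-D"},
    "fiber-backup": {"trench-B", "trench-E"},
}

for pair, segments in shared_risk_segments(routes).items():
    print(pair, "share", sorted(segments))
```

The same comparison applies to power feeds: any pair of supposedly independent feeds that resolves to a common substation is a single point of failure.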

Large-scale AI campuses now function as high-frequency loads that can trigger grid-level sympathetic tripping. What operational protocols can prevent these facilities from destabilizing regional power systems, and how can engineers better manage the synchronization between massive compute clusters and the electrical grid?

Hyperscale campuses are now operating at an industrial scale measured in hundreds of megawatts and even multiple gigawatts, which creates a “self-preservation paradox.” When a facility experiences a minor disturbance and initiates a protective shutdown to save its high-density GPUs, it can instantly shed a massive load, causing a “sympathetic trip” that destabilizes the entire regional power system. To prevent this, engineers must implement sophisticated load-shedding protocols that communicate in real time with utility providers to “ramp down” rather than “snap off.” We need tighter synchronization between the compute cluster’s power draw and the grid’s frequency, essentially treating the data center as a dynamic participant in the grid rather than a passive consumer. This requires a shift in operational philosophy where the health of the regional energy ecosystem is weighted as heavily as the uptime of the individual training job.
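The difference between "ramp down" and "snap off" can be sketched as a schedule of power setpoints in which no single step exceeds a limit agreed with the utility. The function name, the megawatt figures, and the idea of a fixed per-step limit are assumptions for illustration, not a description of any operator's actual protocol.

```python
def ramp_down_schedule(start_mw, target_mw, max_step_mw):
    """Return the sequence of load setpoints from start_mw down to target_mw.

    Each step sheds at most max_step_mw, so the grid never sees the whole
    load disappear at once. All parameters are hypothetical.
    """
    if max_step_mw <= 0:
        raise ValueError("max_step_mw must be positive")
    if target_mw > start_mw:
        raise ValueError("target_mw must not exceed start_mw")

    setpoints = [start_mw]
    current = start_mw
    while current > target_mw:
        # Never overshoot the target on the final step.
        current = max(target_mw, current - max_step_mw)
        setpoints.append(current)
    return setpoints


# A 300 MW campus shedding to 60 MW in steps of at most 100 MW.
print(ramp_down_schedule(300, 60, 100))  # [300, 200, 100, 60]
```

A "snap off" is the degenerate case where the step limit equals the full load, which is exactly the behavior the ramp is meant to avoid.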

Traditional perimeter defenses often fail to monitor the massive east-west traffic common in AI training environments. What architectural controls are required to detect lateral movement within a cluster, and how do you secure sensitive assets like model weights against sophisticated nation-state adversaries?

In modern AI environments, the sheer volume of internal traffic—what we call east-west traffic—renders traditional “north-south” perimeter firewalls almost blind to what is happening inside the cluster. When you have thousands of GPUs tightly synchronized, a nation-state adversary who gains a foothold can move laterally with ease to target “crown jewel” assets like model weights. To defend against this, we must implement zero-trust architectural controls at the micro-segmentation level, treating every node within the cluster as a potential threat. This involves using hardware-based root of trust and encrypted interconnects that ensure data moving between GPUs is verified and isolated. We have to move toward a model where internal visibility is as granular as external monitoring, ensuring that any anomalous lateral movement is flagged and quarantined before it can reach the core model architecture.
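As a rough illustration of micro-segmentation with a default-deny posture, the sketch below flags any east-west flow whose source and destination segments are not on an explicit allow-list. The node names, segment labels, and the `flag_lateral_movement` helper are hypothetical; production systems enforce this in the network fabric rather than in application code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Flow:
    src: str  # source node name
    dst: str  # destination node name


def flag_lateral_movement(flows, segment_of, allowed_pairs):
    """Return the flows that violate the segment allow-list.

    Default-deny: a flow is flagged if either node is unknown or if its
    (source segment, destination segment) pair is not explicitly allowed.
    """
    flagged = []
    for flow in flows:
        src_seg = segment_of.get(flow.src)
        dst_seg = segment_of.get(flow.dst)
        if src_seg is None or dst_seg is None or (src_seg, dst_seg) not in allowed_pairs:
            flagged.append(flow)
    return flagged


# Hypothetical cluster: training nodes may talk to each other and to storage,
# but nothing except the serving tier may reach the weight store.
segment_of = {"gpu-01": "train", "gpu-02": "train", "nas-01": "storage", "vault-01": "weights"}
allowed_pairs = {("train", "train"), ("train", "storage"), ("serving", "weights")}

flows = [Flow("gpu-01", "gpu-02"), Flow("gpu-02", "vault-01"), Flow("rogue-09", "nas-01")]
for flow in flag_lateral_movement(flows, segment_of, allowed_pairs):
    print("quarantine candidate:", flow)
```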

Beyond hardware like GPUs and transformers, subtle vulnerabilities exist in the supply chain for cooling fluids and rare earth materials. What are the long-term indicators of passive sabotage in these components, and what step-by-step auditing processes should firms implement to ensure the integrity of their sub-tier suppliers?

We are facing what some call a “supply cliff,” where the concentration of dependency on a few global suppliers creates a massive opening for passive sabotage. This type of threat doesn’t look like a broken server; it looks like a cooling fluid that degrades 5% faster than specified or rare earth components with microscopic impurities that lead to hardware failure after six months of high-intensity use. To combat this, firms must implement a rigorous “cradle-to-gate” auditing process that includes chemical analysis of fluids and stress-testing of components under extreme AI workloads before they are deployed. Operators need to move away from just-in-time procurement and toward deep-tier visibility, where they are auditing the third- and fourth-tier suppliers who provide the raw materials. It is a slow, methodical game of quality assurance that requires constant vigilance over the chemical and physical integrity of every asset entering the data center.
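A deviation as small as the 5% mentioned above only becomes visible when measured degradation is compared against the supplier's specified rate over time. The sketch below shows one simple way to do that; the property being measured, the sample values, and the 3% tolerance are all assumptions for illustration.

```python
def degradation_ratio(samples, spec_rate_per_day):
    """Return observed degradation rate divided by the specified rate.

    `samples` is a list of (day, measured_value) pairs ordered by day, for
    some fluid property that declines as the fluid ages (hypothetical).
    A ratio of 1.05 means the fluid is degrading 5% faster than specified.
    """
    (day_first, value_first), (day_last, value_last) = samples[0], samples[-1]
    if day_last <= day_first:
        raise ValueError("samples must span a positive time interval")
    observed_rate = (value_first - value_last) / (day_last - day_first)
    return observed_rate / spec_rate_per_day


def exceeds_spec(samples, spec_rate_per_day, tolerance=0.03):
    """Flag a batch whose degradation exceeds spec by more than `tolerance`."""
    return degradation_ratio(samples, spec_rate_per_day) > 1.0 + tolerance


# Hypothetical batch: spec allows 0.10 units of decline per day.
suspect_batch = [(0, 100.0), (30, 96.9), (90, 90.55)]
nominal_batch = [(0, 100.0), (30, 97.0), (90, 91.0)]

print(exceeds_spec(suspect_batch, spec_rate_per_day=0.10))
print(exceeds_spec(nominal_batch, spec_rate_per_day=0.10))
```

This endpoint-to-endpoint estimate ignores the intermediate samples; a real audit would more likely fit a trend across all measurements and account for measurement noise.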

Oversight of digital infrastructure is currently fragmented across private operators, utility providers, and government regulators. How can these entities create a unified security posture that covers the entire ecosystem, and what specific metrics should they use to measure the resilience of the systems surrounding the data center?

Currently, responsibility is siloed: the operator cares about the building, the utility cares about the wires, and the government cares about the national impact, but no one has a complete view of the system’s health. We need a unified security posture that treats these entities as a single “critical infrastructure stack,” sharing threat intelligence in real time across both cyber and physical domains. A key metric for this resilience should be “Time to Recovery of Systemic Dependency”: how quickly can a facility regain power or connectivity if an external node is destroyed? We should also measure “Cross-Sector Cascading Risk,” which quantifies how a failure in one data center impacts regional logistics, finance, or emergency services. Only by aligning these metrics can we move away from fragmented oversight and toward a resilient, integrated ecosystem.
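One possible way to compute a "Time to Recovery of Systemic Dependency" figure is from a shared outage log, summarized per external dependency. The log format, the dependency names, and the choice of mean and worst-case minutes are assumptions made for this sketch; the interview does not define the metric formally.

```python
from collections import defaultdict
from datetime import datetime


def recovery_times(outages):
    """Summarize recovery time in minutes per external dependency.

    `outages` is a list of (dependency, lost_at, restored_at) tuples with
    datetime values. Returns {dependency: {"mean_min": ..., "worst_min": ...}}.
    """
    minutes_by_dependency = defaultdict(list)
    for dependency, lost_at, restored_at in outages:
        minutes = (restored_at - lost_at).total_seconds() / 60
        minutes_by_dependency[dependency].append(minutes)
    return {
        dependency: {"mean_min": sum(values) / len(values), "worst_min": max(values)}
        for dependency, values in minutes_by_dependency.items()
    }


# Hypothetical outage log shared between operator and utility.
outages = [
    ("grid-feed", datetime(2025, 1, 5, 2, 0), datetime(2025, 1, 5, 2, 30)),
    ("grid-feed", datetime(2025, 3, 9, 14, 0), datetime(2025, 3, 9, 15, 30)),
    ("fiber-path", datetime(2025, 2, 1, 8, 0), datetime(2025, 2, 1, 8, 45)),
]

for dependency, stats in recovery_times(outages).items():
    print(dependency, stats)
```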

Modern AI systems are increasingly vulnerable to non-traditional attack paths like data poisoning and prompt injection. How do these cyber-physical risks change the way you design trust boundaries, and what training or anecdotes can you share regarding the difficulty of defending integrated AI environments?

The integration of AI into critical infrastructure means that a digital exploit like data poisoning can have a physical consequence, such as mismanaging a cooling system or disrupting a power flow. This forces us to redraw trust boundaries not just around the code, but around the data inputs themselves, ensuring that the information used to train or prompt an AI is as secure as the hardware it runs on. I’ve seen environments where developers were so focused on the compute speed that they left open “backdoors” for data ingestion that were easily manipulated, highlighting how difficult it is to defend a system that is designed for maximum openness and speed. Defending these environments requires a multidisciplinary approach where software engineers, hardware specialists, and security professionals are all trained to recognize that an AI’s “logic” is just as susceptible to tampering as a physical lock.

What is your forecast for data center security?

I predict that over the next five years, we will see a mandatory “nationalization” of security standards for data centers, where they are officially designated as critical national infrastructure on par with nuclear power plants or water systems. The days of treating a hyperscale campus as a private warehouse are over; we will see the emergence of “security-first” hubs where the facility is built into hardened underground bunkers or decommissioned mines to protect against kinetic drone strikes and electromagnetic interference. As AI becomes the engine of our economy, the physical and digital perimeters will fully merge, and “uptime” will no longer be measured by the individual facility’s performance, but by the facility’s ability to remain an island of stability in a volatile geopolitical ocean. Operators who fail to account for the systemic risks surrounding their walls will find themselves obsolete in an era where infrastructure is the primary target of modern conflict.