The rapid expansion of artificial intelligence has moved beyond a software trend to become the primary catalyst for a wholesale reconfiguration of the global data center economy. Amazon Web Services sits at the center of this transformation, evolving from a general-purpose cloud provider into an integrated utility whose economic role resembles that of the electric grid. As enterprises scramble for computational resources, the focus has moved toward securing the entire lifecycle of AI development. That requires moving away from standard off-the-shelf components toward a bespoke ecosystem in which every layer is tuned for performance. By controlling the pipeline from raw power generation to the final inference engine, AWS is effectively setting a new architectural standard for the digital age. This vertical integration is not just a competitive advantage but a survival strategy in a market defined by extreme scarcity and rapidly rising operational demands.

Scaling Capacity in an Era of Resource Scarcity

Infrastructure Expansion: Power and Physical Footprint

The most immediate hurdle is the sheer scale of the physical resources required to sustain modern machine learning workloads. In 2026, and looking ahead to 2028, expanding energy capacity has become the defining feature of cloud competition. Last year, AWS added nearly four gigawatts of new power capacity to its network, and its roadmap for the next two years calls for doubling its total energy footprint to keep pace with demand from generative models. This expansion is not merely about constructing more buildings; it involves negotiating complex power purchase agreements and investing in energy sources, including renewables, that can deliver the steady, high-density power that massive GPU clusters require. Without these foundational investments, the most advanced software architectures remain theoretical, because the physical limits of the existing power grid have become the ultimate bottleneck for innovation.
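
A two-year doubling target is easier to reason about as an annual rate. The minimal sketch below assumes smooth compound growth at a constant rate, which is an idealization (real capacity arrives in lumpy, site-sized increments), and the function name `implied_annual_growth` is purely illustrative. Under that assumption, doubling over two years implies roughly 41 percent growth per year.

```python
def implied_annual_growth(multiple: float, years: float) -> float:
    """Constant compound annual growth rate that reaches `multiple` x capacity in `years` years.

    Assumes smooth compounding; actual data center capacity is added in discrete increments.
    """
    if multiple <= 0 or years <= 0:
        raise ValueError("multiple and years must be positive")
    return multiple ** (1.0 / years) - 1.0


# Doubling the total energy footprint over a two-year horizon.
rate = implied_annual_growth(2.0, 2.0)
print(f"Implied growth: {rate:.1%} per year")  # Implied growth: 41.4% per year
```

Because the base keeps growing, a constant rate means the second year's absolute additions must be larger than the first year's, which is part of why the target is so demanding.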

The complexity of these infrastructure projects has forced a rethinking of how data centers are designed and deployed around the world. Modern facilities are no longer simple warehouses for servers; they are closer to industrial power and cooling plants, engineered to handle the intense thermal output of AI chips. This shift requires a level of capital expenditure that was previously unimaginable, with tens of billions of dollars dedicated to the physical layer of the cloud. Engineering teams now focus on liquid cooling and specialized rack designs that allow higher compute density within a smaller physical footprint. As these facilities become more specialized, the barrier to entry for new competitors rises, solidifying the dominance of providers that can manage both the electrical engineering and the digital side of the cloud. The trend suggests that the industry's future will be dictated by those who can most effectively bridge heavy industrial power and micro-scale semiconductor performance.

Strategic Dependency: Securing Long-Term Access

A significant shift in market psychology has occurred as major enterprises move from sporadic experimentation to full production deployment of large models. Companies are no longer content to buy cloud resources on a pay-as-you-go basis; instead, they are trying to lock up vast amounts of capacity years in advance. This behavior reflects a strategic dependency in which access to compute is treated as a vital commodity, much like oil or critical minerals. In some instances, large clients have attempted to reserve the entire output of specific server lines for several years, seeking to lock their competitors out of the market. This urgency highlights a fundamental change in the relationship between cloud providers and their customers: the provider now acts as a gatekeeper to resources that businesses regard as essential to survival in an increasingly automated economy.

This scarcity creates a distinct challenge for AWS, which must balance the demands of its largest partners against the need to keep the ecosystem fair and accessible to the broader market. If capacity becomes too concentrated in the hands of a few tech giants, the resulting shortages could drive smaller innovators toward competing platforms. To mitigate this risk, AWS is placing growing emphasis on custom silicon such as its Graviton series, Arm-based processors that offer a more efficient alternative to traditional x86 chips. By moving general-purpose workloads onto Graviton, the company can free up capacity for high-priority AI tasks. This strategy not only makes better use of the existing hardware fleet but also encourages customers to tune their own codebases for efficiency. Consequently, managing this delicate balance of supply and demand has become as important as the underlying technology itself.

Innovating Through Custom Semiconductor Architectures

Trainium and Inferentia: Redefining Chip Economics

While the industry still relies heavily on external semiconductor giants for high-end GPUs, the push toward proprietary silicon has reached a tipping point. AWS has aggressively expanded its internal chip division to produce the Trainium and Inferentia series, designed specifically for the mathematical demands of neural network training and inference. The release of Trainium3 marked a significant leap in performance, offering up to forty percent better efficiency than the previous generation. This hardware is not intended to replace existing partnerships with companies such as Nvidia but to offer a high-performance alternative that competes on price-performance. For enterprises running models with trillions of parameters, training costs can be prohibitive, making the efficiency gains of custom-built hardware a primary driver of platform loyalty.
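
A percentage efficiency gain does not translate one-for-one into a percentage cost reduction. The sketch below assumes that "efficiency" means useful work per dollar (or per joule, where energy dominates cost) and that nothing else about pricing changes; under that assumption, the forty percent upper bound cuts cost per unit of work by about 29 percent, not 40. The training budget is a hypothetical figure chosen only for illustration.

```python
def relative_cost(efficiency_gain: float) -> float:
    """Cost per unit of work relative to a baseline when work per dollar rises by `efficiency_gain`.

    Example: efficiency_gain=0.40 means 1.4x the work for the same spend.
    """
    if efficiency_gain <= -1.0:
        raise ValueError("efficiency_gain must be greater than -1")
    return 1.0 / (1.0 + efficiency_gain)


baseline_training_budget = 10_000_000  # hypothetical USD for one training run
ratio = relative_cost(0.40)
print(f"Cost falls to {ratio:.1%} of baseline ({1 - ratio:.1%} saving)")
# Cost falls to 71.4% of baseline (28.6% saving)
print(f"Same run: ${baseline_training_budget * ratio:,.0f}")
# Same run: $7,142,857
```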

The roadmap for these custom chips is accelerating rapidly, with the upcoming Trainium4 already seeing massive interest and early reservations from forward-thinking tech firms. This preemptive demand suggests that the market is ready to embrace a diverse hardware landscape where different chips are utilized for different stages of the AI lifecycle. For instance, while one type of hardware might be preferred for the initial training of a foundation model, another might be far more cost-effective for the inference phase where the model responds to user queries. By providing a menu of silicon options, AWS allows developers to optimize their operational expenses based on the specific needs of their application. This level of granular control over the hardware layer enables a much tighter integration between the silicon and the software frameworks, resulting in a more responsive and reliable user experience for the end customer.
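
Choosing from a menu of silicon options per lifecycle stage comes down to comparing cost per unit of work rather than hourly price. The minimal sketch below uses entirely hypothetical hardware labels (`accel_a`, `accel_b`), prices, and throughputs, not published AWS figures. It picks the cheapest capable option for each stage and shows how the option that is more expensive per hour can still win on training while losing on inference.

```python
from dataclasses import dataclass, field


@dataclass
class HardwareOption:
    name: str              # hypothetical label, not a real instance type
    price_per_hour: float  # hypothetical USD per instance-hour
    throughput: dict[str, float] = field(default_factory=dict)  # stage -> work units per hour

    def cost_per_unit(self, stage: str) -> float:
        return self.price_per_hour / self.throughput[stage]


def cheapest_per_stage(options: list[HardwareOption],
                       stages: list[str]) -> dict[str, tuple[str, float]]:
    """For each stage, return (option name, cost per work unit) of the cheapest capable option."""
    plan: dict[str, tuple[str, float]] = {}
    for stage in stages:
        capable = [o for o in options if stage in o.throughput]
        if not capable:
            raise ValueError(f"no option supports stage {stage!r}")
        best = min(capable, key=lambda o: o.cost_per_unit(stage))
        plan[stage] = (best.name, best.cost_per_unit(stage))
    return plan


options = [
    HardwareOption("accel_a", 40.0, {"training": 100.0, "inference": 1000.0}),
    HardwareOption("accel_b", 20.0, {"training": 40.0, "inference": 800.0}),
]
for stage, (name, cost) in cheapest_per_stage(options, ["training", "inference"]).items():
    print(f"{stage}: {name} at ${cost:.3f} per unit")
# training: accel_a at $0.400 per unit
# inference: accel_b at $0.025 per unit
```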

Full-Stack Optimization: Hardware Meets Software

The true power of custom silicon is realized when it is paired with software frameworks engineered to exploit its specific architectural features. Through its Neuron SDK, AWS has invested heavily in making popular frameworks such as PyTorch and JAX run efficiently on Trainium and Inferentia hardware. This work involves deep-level adjustments to how data moves between memory and the processor, and to how chips communicate across high-speed interconnects. When these components are well matched, the result is better “token economics”: a lower cost and shorter time to generate each token of text or each image. This is particularly vital for organizations deploying agentic workflows, which can require thousands of individual model calls to complete a single complex task.
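
At its simplest, “token economics” is arithmetic: an accelerator's hourly price divided by the tokens it generates per hour. The sketch below assumes a fixed hourly price and a sustained throughput (real serving costs also depend on utilization, batching, and prompt length), and every number in it is hypothetical. It illustrates why agentic workloads are so sensitive to throughput: a task made of thousands of calls multiplies whatever the per-token cost happens to be.

```python
SECONDS_PER_HOUR = 3600


def cost_per_million_tokens(price_per_hour: float, tokens_per_second: float) -> float:
    """USD per one million generated tokens at a sustained throughput."""
    return price_per_hour * 1_000_000 / (tokens_per_second * SECONDS_PER_HOUR)


def agentic_task_cost(calls: int, tokens_per_call: int,
                      price_per_hour: float, tokens_per_second: float) -> float:
    """USD for one agentic task made of `calls` model invocations."""
    total_tokens = calls * tokens_per_call
    return total_tokens * price_per_hour / (tokens_per_second * SECONDS_PER_HOUR)


# Hypothetical figures: $12/hour accelerator, 2,000 tokens/s, 2,000 calls x 1,500 tokens each.
print(f"${cost_per_million_tokens(12.0, 2000):.2f} per million tokens")  # $1.67 per million tokens
print(f"${agentic_task_cost(2000, 1500, 12.0, 2000):.2f} per task")     # $5.00 per task
# Doubling throughput at the same hourly price halves the per-task cost.
print(f"${agentic_task_cost(2000, 1500, 12.0, 4000):.2f} per task")     # $2.50 per task
```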

Furthermore, vertical integration enables hardware-software synergies that are difficult to achieve in a fragmented ecosystem. For example, by controlling the interconnect technology that links thousands of chips together, AWS can minimize the communication latency that often plagues large-scale distributed training. The result is a more stable training environment in which models can be developed faster and with fewer interruptions. Major AI research firms and large enterprises such as Uber have begun to use these integrated stacks to scale their operations while keeping their cloud budgets under control. As models grow more complex, the ability to tune the entire stack, from the compiler down to the transistor, will be the primary factor distinguishing the leading platforms from those that merely rent out third-party hardware.
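
Why interconnect latency matters at scale can be seen in the textbook alpha-beta cost model for a ring all-reduce, a collective commonly used to synchronize gradients in data-parallel training. This is a generic model, not a description of how AWS implements its collectives, and the gradient size, link bandwidth, and latency values are hypothetical. In a ring of N workers, each all-reduce takes 2(N-1) steps; every step pays a fixed link latency plus the time to send 1/N of the data. The latency term therefore grows linearly with cluster size, while the bandwidth term stays roughly flat.

```python
def ring_allreduce_seconds(n_workers: int, message_bytes: float,
                           latency_s: float, bandwidth_bytes_per_s: float) -> float:
    """Alpha-beta estimate for a ring all-reduce (reduce-scatter followed by all-gather).

    Each of the 2*(N-1) steps pays one link latency plus the transfer of
    message_bytes / N over one link.
    """
    if n_workers < 2:
        return 0.0  # a single worker has nothing to exchange
    steps = 2 * (n_workers - 1)
    chunk_bytes = message_bytes / n_workers
    return steps * (latency_s + chunk_bytes / bandwidth_bytes_per_s)


# Hypothetical: 1 GB of gradients, 1,024 workers, 100 GB/s per link.
for latency_us in (10.0, 2.0):
    t = ring_allreduce_seconds(1024, 1e9, latency_us * 1e-6, 100e9)
    print(f"latency {latency_us:>4} us -> {t * 1e3:.1f} ms per all-reduce")
# latency 10.0 us -> 40.4 ms per all-reduce
# latency  2.0 us -> 24.1 ms per all-reduce
```

With these assumed numbers, per-step latency accounts for about half of each all-reduce at 10 microseconds, which is the kind of overhead a tightly controlled interconnect aims to shrink.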

Reforming Software Delivery with Agentic Tools

The Mantle Project: AI-Assisted Architecture

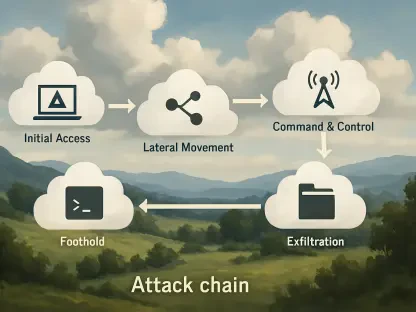

One of the most compelling examples of how the cloud is evolving is the internal use of AI to rebuild the very systems that host these models. The development of the Mantle inference engine stands as a testament to the power of agentic coding tools in modern software engineering. When the existing infrastructure for Amazon Bedrock reached its limits, a small team utilized internal AI assistants to rewrite the entire engine from scratch. This project, which would traditionally have required dozens of engineers and several quarters of development, was completed in just over two months by a handful of people. This phenomenon, often referred to as “productivity compression,” demonstrates that AI is not just an end product but a fundamental tool for improving the speed and quality of infrastructure development itself.

The success of the Mantle project led to immediate performance gains, enabling the platform to process a record number of tokens in the first half of 2026. This rapid iteration cycle suggests that the future of cloud software will be characterized by a continuous loop of self-improvement driven by automated tools. For the broader market, this serves as a case study in the “build-vs-buy” debate, showing that even the most complex systems can be overhauled quickly if the right AI tools are in place. As these agentic coding services become more sophisticated, they will likely be offered to external customers, allowing any organization to achieve similar levels of engineering efficiency. This shift changes the calculus for digital transformation, as the bottleneck moves from human labor capacity to the availability of high-quality training data and the compute power required to run the agents.

The Integrated AI Stack: A New Competitive Standard

AWS is currently solidifying its position as a holistic utility by managing four distinct layers of the technological stack: physical energy, custom silicon, the platform layer, and the application software. This four-tier approach provides a level of synergy that makes it difficult for competitors to offer a comparable value proposition. By providing “point-and-click” access to a variety of foundation models through services like Bedrock, the company simplifies the deployment process for businesses that do not have the expertise to manage raw infrastructure. This abstraction layer is crucial for the mass adoption of AI, as it allows developers to focus on building features rather than worrying about the underlying hardware configuration. The result is a seamless environment where a startup can go from an idea to a global deployment in a fraction of the time it previously took.

In addition to its internal developments, the company is fostering a diverse ecosystem through strategic partnerships with specialized hardware firms. These collaborations allow for hybrid workflows where different parts of the AI inference process are handled by the hardware best suited for the task. For example, some stages of processing might occur on Trainium chips while others are offloaded to specialized high-speed processors for maximum throughput. This modular approach ensures that the platform remains flexible enough to adapt to new research breakthroughs and changing market demands. By acting as a central hub that integrates various technologies into a single, cohesive service, AWS maintains its status as the preferred destination for AI innovation. This comprehensive strategy ensures that the company is not just a participant in the AI revolution but is the very foundation upon which the next generation of digital services is being constructed.
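
The modular, hybrid workflow described here can be pictured as a routing table that assigns each stage of a request to a backend. The sketch below is hypothetical throughout: the stage names (`prefill` for prompt processing, `decode` for token generation), the backend labels, and the toy functions are illustrative stand-ins that only tag the request, not an AWS or partner API. The design point is that stages communicate only through a shared request state, so swapping the hardware behind one stage means editing one table entry.

```python
from typing import Callable

# Hypothetical backend signature: takes and returns a request-state dict.
Backend = Callable[[dict], dict]


def prefill_on_trainium(state: dict) -> dict:
    # Stand-in for prompt processing on one class of accelerator.
    return {**state, "context": f"encoded({state['prompt']})",
            "trace": state["trace"] + ["trainium:prefill"]}


def decode_on_partner(state: dict) -> dict:
    # Stand-in for high-throughput token generation on a partner processor.
    return {**state, "output": f"tokens_from({state['context']})",
            "trace": state["trace"] + ["partner:decode"]}


# Routing table: stage -> backend. Re-pointing one entry swaps the hardware for that stage.
ROUTES: dict[str, Backend] = {
    "prefill": prefill_on_trainium,
    "decode": decode_on_partner,
}


def run_pipeline(prompt: str, stages: list[str], routes: dict[str, Backend]) -> dict:
    """Run each stage in order, passing the evolving request state between backends."""
    state: dict = {"prompt": prompt, "trace": []}
    for stage in stages:
        state = routes[stage](state)
    return state


result = run_pipeline("hello", ["prefill", "decode"], ROUTES)
print(result["trace"])   # ['trainium:prefill', 'partner:decode']
print(result["output"])  # tokens_from(encoded(hello))
```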

Future Considerations for Infrastructure Management

The transformation of the global cloud landscape into a specialized AI utility demands a thorough reevaluation of how technology leaders approach infrastructure investment. Relying on a single hardware provider is no longer a sustainable strategy in an environment defined by extreme scarcity. Instead, the focus is shifting toward multi-layered architectures that use custom silicon to achieve better economic outcomes. Organizations that successfully move to these integrated stacks can significantly reduce their operational costs and reinvest those savings in further research and development. Vertically integrated platforms thus offer a blueprint for scaling complex digital services while keeping the agility required to respond to rapid market changes.

Looking forward, the success of any AI-driven enterprise will likely depend on its ability to navigate this new integrated utility model. Companies should prioritize optimizing their software for proprietary hardware architectures to capture the available price-performance benefits. Investing in internal AI-assisted development tools will also be essential for staying competitive in an era when software lifecycles have shrunk from years to months. As the physical and digital layers of the cloud continue to merge, the organizations that thrive will be those that treat their infrastructure not as a static cost center but as a dynamic, self-optimizing system. The lessons of this period of intense growth are a reminder that the true value of technology lies in its ability to adapt to the shifting needs of the global economy.