The physical reality of modern computing has collided with the limits of copper wiring, creating a bottleneck that threatens to stall the progress of artificial intelligence. As the clusters that train Large Language Models and other massive neural networks move toward 1.6 Tb/s (1.6T) links, the electrical traces on traditional circuit boards have become too lossy and power-hungry to keep pace. The result is performance “starvation”: high-priced GPUs sit idle, waiting for data that cannot travel fast enough across metal pins. Near-Packaged Optical (NPO) interconnects represent a significant advancement for the data center and high-performance computing (HPC) industry, offering a way around these physical barriers by moving light-based communication closer to the processor.

This review explores the evolution of the technology, its key features, performance metrics, and the impact it has had on various applications. Specifically, it examines how NPO serves as a critical bridge between legacy pluggable optics and nascent co-packaged solutions, offering a pragmatic path forward for hyperscale environments. By shifting the conversion from electrons to photons away from the edge of the board and into the immediate vicinity of the processor, NPO provides a substantial leap in bandwidth density. The purpose of this review is to provide a thorough understanding of the technology, its current capabilities, and its potential future development. Through an analysis of the strategic alliance between LightSpeed Photonics and Infraeo, this article highlights the industrial shift toward scalable, low-latency optical architectures.

The Evolution of Near-Packaged Optical Interconnects

Near-Packaged Optics emerged as a response to the “interconnect bottleneck” currently facing artificial intelligence and high-performance computing. At its core, NPO involves placing optical engines on a high-performance substrate in close proximity to the host ASIC (Application-Specific Integrated Circuit) or GPU, rather than integrating them directly into the silicon package or placing them at the edge of the board in traditional pluggable modules. This spatial optimization is not merely a matter of convenience; it is a calculated effort to shorten the electrical path, thereby reducing signal degradation and the immense power required to drive data through copper.

This technology evolved to address the signal integrity issues and power inefficiencies inherent in long-trace electrical signaling. By bringing the optical conversion closer to the compute engine, NPO reduces the distance data must travel over lossy copper traces. In the broader technological landscape, NPO is positioned as a “Goldilocks” solution: it provides the power benefits of advanced integration while avoiding the extreme thermal and manufacturing complexities associated with Co-Packaged Optics (CPO). It addresses the immediate needs of 2026-era data centers that require the density of integrated photonics without the risk of losing an entire multi-thousand-dollar GPU if a single laser fails.
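The relationship between trace length and signal loss can be made concrete with a rough channel budget. The sketch below treats insertion loss as linear in trace length, which is a simplification. The per-inch loss, the loss budget, and both trace lengths are illustrative placeholders, not measured values for any particular board material or SerDes.

```python
def trace_loss_db(length_in: float, loss_db_per_in: float) -> float:
    """Insertion loss of a PCB trace, modeled as linear in length."""
    return length_in * loss_db_per_in


def max_reach_in(budget_db: float, loss_db_per_in: float) -> float:
    """Longest trace that stays within a given loss budget."""
    return budget_db / loss_db_per_in


# Hypothetical values chosen only to illustrate the scaling.
LOSS_DB_PER_IN = 1.5        # assumed loss per inch at the signaling frequency
BUDGET_DB = 12.0            # assumed budget for a link without retimers
FACEPLATE_TRACE_IN = 10.0   # assumed ASIC-to-faceplate distance
NPO_TRACE_IN = 2.0          # assumed ASIC-to-NPO-engine distance

print(trace_loss_db(FACEPLATE_TRACE_IN, LOSS_DB_PER_IN))  # 15.0 dB, over budget
print(trace_loss_db(NPO_TRACE_IN, LOSS_DB_PER_IN))        # 3.0 dB, within budget
print(max_reach_in(BUDGET_DB, LOSS_DB_PER_IN))            # 8.0 inches
```

Under these assumed numbers the faceplate route exceeds the budget and would need a retimer, while the near-package route leaves margin. Real budgets depend on the laminate, the connectors, and the equalization available in the SerDes.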

Core Architectural Pillars and Performance Drivers

Solderable Near-Packaged Optical Engines

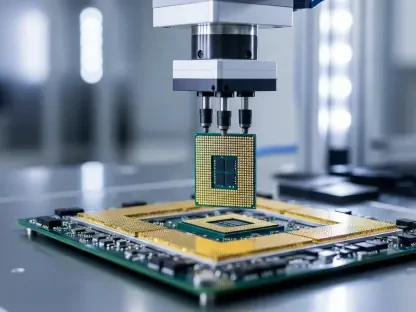

One of the primary features of NPO is the development of solderable optical engines that sit adjacent to the processor. Unlike traditional pluggable transceivers that rely on mechanical cages and connectors at the faceplate, NPO modules are mounted directly onto the PCB or a dedicated substrate. This proximity significantly reduces the power required to drive high-speed signals from the ASIC. By eliminating the need for heavy-duty retimers and long-reach electrical drivers, these engines allow for a more streamlined power profile, which is essential as the power draw of AI servers continues to climb.

Furthermore, this component-level approach allows for better signal integrity and higher bandwidth density, enabling clusters to scale without the “starvation” caused by slow data movement. Solderability also implies a shift toward surface-mount technology (SMT) compatibility, allowing these optical components to be handled like any other high-speed semiconductor during the assembly process. This transition from “pluggable hardware” to “on-board components” marks a fundamental change in how system architects view connectivity, treating the optical link as a local high-speed bus rather than an external network connection.
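The power argument for removing retimers and long-reach drivers comes down to energy per bit multiplied by line rate. A minimal sketch follows; the two pJ/bit figures are hypothetical placeholders chosen to show the arithmetic, not vendor data.

```python
def link_power_w(energy_pj_per_bit: float, rate_tbps: float) -> float:
    """Link power in watts.

    pJ/bit times Tb/s gives watts directly, because the 1e-12 (pico)
    and 1e12 (tera) factors cancel.
    """
    return energy_pj_per_bit * rate_tbps


RATE_TBPS = 1.6  # the 1.6T link rate discussed in the article

# Hypothetical energy-per-bit figures, for illustration only.
scenarios = {
    "long-reach electrical path with retimer": 15.0,
    "short-reach path to a near-package engine": 5.0,
}

for label, pj_per_bit in scenarios.items():
    print(f"{label}: {link_power_w(pj_per_bit, RATE_TBPS):.1f} W per link")
```

Because the relationship is linear, any saving in pJ/bit is multiplied by both the line rate and the number of links in the system.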

PCIe-over-Fiber: Resource Disaggregation

Another critical component of the modern optical landscape is the extension of the Peripheral Component Interconnect Express (PCIe) interface over optical fiber. By utilizing optical links for PCIe, data centers can overcome the distance limitations of electrical wiring, which typically restrict high-speed links to just a few inches. This enables “composable” architectures where compute, memory, and storage resources can be decoupled and pooled across different racks. Instead of every server being a fixed box of components, the data center becomes a fluid pool of resources connected by a high-speed optical fabric.

This feature is vital for managing “east-west” traffic in AI clusters, as it can reduce tail latency and help mitigate incast congestion, allowing for more flexible and efficient hardware utilization. When a massive training job requires more memory than a single node can provide, PCIe-over-fiber allows it to tap into a remote memory tray with latency that approaches what it would expect from an internal slot, since the added cost is mainly the propagation delay of the fiber run. This architectural shift is instrumental in maximizing the return on investment for hyperscalers, keeping GPUs busy regardless of where the data resides.
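How close a remote resource gets to local latency depends largely on fiber length, because light in glass travels at roughly two thirds of its vacuum speed. The sketch below estimates one-way propagation delay. The group index of 1.47 is an approximate value for standard single-mode fiber and is an assumption here, and the example run lengths are arbitrary.

```python
C_M_PER_S = 299_792_458  # speed of light in vacuum (exact, by SI definition)


def fiber_delay_ns(length_m: float, group_index: float = 1.47) -> float:
    """One-way propagation delay through a fiber, in nanoseconds.

    group_index is an assumed, approximate value for single-mode fiber.
    """
    return length_m * group_index / C_M_PER_S * 1e9


# Arbitrary example run lengths: within a rack, across a row, across a hall.
for length_m in (1.0, 10.0, 50.0):
    one_way = fiber_delay_ns(length_m)
    print(f"{length_m:5.1f} m: {one_way:6.1f} ns one way, {2 * one_way:6.1f} ns round trip")
```

This works out to about 4.9 ns per meter. Serialization, optical-electrical conversion, and any switching add further delay on top of propagation, and none of those are modeled here.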

Current Trends and Strategic Industry Shifts

The most notable development in the field is the shift toward regional manufacturing ecosystems and strategic alliances, such as the partnership between LightSpeed Photonics and Infraeo. There is a growing trend to establish robust supply chains that span across the United States, Singapore, and India to ensure the scalability of 1.6T interconnects. This geopolitical diversification is a direct response to the fragility of traditional single-source supply chains, ensuring that the critical components for AI infrastructure can be produced and deployed with high resilience.

Industry behavior is also shifting toward modularity. While Co-Packaged Optics remains a long-term goal, the industry is currently favoring NPO and Linear Pluggable Optics (LPO) because they offer a better balance of serviceability and performance. The ability to replace a faulty optical module without discarding an expensive GPU or switch ASIC is influencing current hardware roadmaps, making NPO the preferred intermediate standard for hyperscalers. This pragmatism suggests that the market values operational uptime and maintenance simplicity as much as it values raw bandwidth increases.

Real-World Applications in AI and Hyperscale Infrastructure

The primary deployment of NPO technology is found within hyperscale data centers and AI training clusters. In these environments, the technology is used to connect thousands of GPUs, with the aim of keeping high-powered compute engines fully utilized. For instance, the LightSpeed-Infraeo collaboration focuses on validating NPO in real-world pilot deployments to prove power savings and latency reduction in live AI workloads. These pilots are intended to show that the theoretical benefits of optical interconnects translate into tangible reductions in the “time-to-train” for complex foundation models.

Beyond standard data processing, NPO and PCIe-over-fiber are being implemented in disaggregated memory systems. This allows large-scale AI models, which require massive amounts of RAM, to access memory pools located in separate chassis with latency approaching that of a local bus. These implementations are crucial for the next generation of Large Language Models (LLMs) that exceed the memory capacity of a single server node. By decoupling the memory from the processor, operators can scale their memory and compute independently, leading to more efficient energy use and lower capital expenditures.
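The idea of spilling a job's memory demand from a node into pooled remote trays can be sketched as a simple allocation policy. Everything below is hypothetical: the `MemoryTray` type, the `allocate` function, and the local-first, first-fit policy are illustrative only and do not describe any vendor's fabric manager.

```python
from dataclasses import dataclass


@dataclass
class MemoryTray:
    """A remote memory chassis reachable over the optical fabric (hypothetical)."""
    name: str
    free_gb: int


def allocate(request_gb: int, local_free_gb: int, trays: list[MemoryTray]) -> dict[str, int]:
    """Satisfy a request from local memory first, then spill to remote trays.

    Returns a mapping of source name to gigabytes taken. Raises MemoryError
    if the pool cannot cover the request; in that case no tray is modified.
    """
    total_free = local_free_gb + sum(t.free_gb for t in trays)
    if request_gb > total_free:
        raise MemoryError("pool cannot satisfy request")

    plan: dict[str, int] = {}
    take = min(request_gb, local_free_gb)
    if take:
        plan["local"] = take
    remaining = request_gb - take

    for tray in trays:
        if remaining == 0:
            break
        take = min(remaining, tray.free_gb)
        if take:
            tray.free_gb -= take
            plan[tray.name] = take
            remaining -= take
    return plan


trays = [MemoryTray("tray-a", 512), MemoryTray("tray-b", 1024)]
print(allocate(1200, 256, trays))
# {'local': 256, 'tray-a': 512, 'tray-b': 432}
```

A production allocator would also weigh fabric distance and contention when choosing a tray. The local-first ordering here simply reflects that remote memory carries extra propagation delay.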

Technical Hurdles and Market Obstacles

Despite its advantages, NPO faces significant challenges. Technical hurdles include managing the precision required for high-density optical coupling and ensuring long-term reliability in the high-temperature environments near high-power processors. While NPO is easier to manage thermally than CPO, it still requires sophisticated cooling solutions, such as liquid cooling or advanced heat spreaders, to maintain performance. The mechanical stress of soldering optical components to a board that undergoes significant thermal cycling also remains a point of intense engineering focus.

From a market perspective, the adoption of NPO is challenged by the dominance of established hardware titans like Nvidia and Broadcom, who often promote proprietary integrated stacks. Additionally, the rapid pace of innovation means that NPO must reach cost-optimized industrial-scale production quickly before competing technologies, such as chip-to-chip optical I/O, become mature enough to displace it. The industry must standardize these “near-packaged” interfaces rapidly to prevent a fragmented market that could delay the broad adoption of 1.6T and 3.2T standards across different vendor ecosystems.

Future Outlook and Technological Trajectory

The future of near-packaged optical interconnects is headed toward even higher levels of integration and standardization. As 1.6T and 3.2T speeds become the norm, the industry will likely see a convergence of NPO with silicon photonics, leading to even smaller and more energy-efficient modules. The integration of laser sources directly into the NPO substrate, or the use of external laser pools to mitigate heat, will define the next generation of these systems. This trajectory suggests that the line between “networking” and “computing” will continue to blur until the entire data center fabric acts as a single, coherent machine.

The long-term impact of this technology will be the realization of the “optical data center,” where almost all high-speed communication occurs over fiber. This shift will likely enable the continued growth of AI capabilities by removing the physical barriers to scaling, potentially leading to breakthroughs in how distributed computing systems are designed and operated on a global scale. As we move from 2026 toward even more demanding computational eras, the reliance on photons over electrons will likely transition from a specialized performance boost to a universal architectural requirement for all high-end silicon.

Summary and Assessment of Optical Interconnects

In summary, Near-Packaged Optical interconnects represent a vital evolutionary step in high-speed data communication. By placing optics closer to the silicon, NPO addresses the critical power and bandwidth limitations of traditional copper while maintaining the serviceability required by modern data centers. The current state of the technology is one of rapid transition, fueled by strategic global partnerships and the urgent needs of the AI era. While technical and competitive challenges remain, the potential for NPO to serve as the backbone of next-generation AI infrastructure is high.

Moving forward, the focus must shift toward creating a unified standard for NPO modules to ensure interoperability between different ASIC and photonics vendors. Organizations should prioritize the development of automated alignment and assembly processes to bring down the cost of these modules to match the price-per-bit of traditional pluggables. Furthermore, the industry must explore hybrid cooling strategies that can handle the concentrated heat of both the processor and the adjacent optical engines. Ultimately, the success of NPO will be defined by its ability to provide a scalable, cost-effective, and reliable bridge to the future of fully optical computing, ensuring that the infrastructure can handle the insatiable data demands of evolving artificial intelligence.