The monolithic silhouette of the modern data center has transformed from a mere warehouse of servers into a living, breathing computational engine that consumes gigawatts of power to fuel the global intelligence economy. In this environment, the traditional approach of piecing together disparate components has become a relic of a slower era. Today, the focus has shifted toward a unified, full-stack philosophy where the entire rack serves as the fundamental unit of compute. The introduction of the Vera Rubin platform signifies a pivotal moment in this evolution, moving the industry closer to a world where hardware and software are inseparable components of a singular, massive appliance.

As organizations strive to keep pace with the exponential growth of generative models and agentic workflows, the complexity of managing individual silicon units has reached a breaking point. The Rubin architecture addresses this by treating the data center as a unified “AI Factory.” This is not just a marketing catchphrase but a technical reality where compute, high-speed networking, and power management are engineered to function in perfect synchronization. For the enterprise, this means moving away from the era of “chip-only” performance metrics and toward a more holistic understanding of system-level efficiency and throughput.

The Transition from Discrete Components to Unified AI Factories

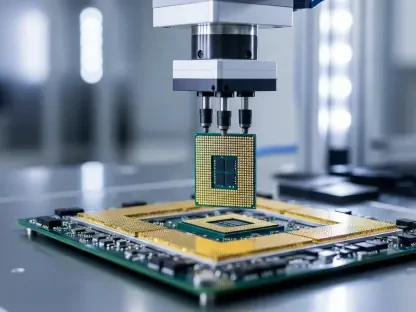

The data center landscape has undergone a radical restructuring, shifting away from the optimization of individual server blades toward the management of cohesive, rack-scale environments. In previous cycles, IT departments focused on the specific clock speeds of processors or the capacity of localized memory. However, the sheer scale of current artificial intelligence workloads has made this granular approach obsolete. Modern infrastructure requires a design where thousands of units act as a single logical processor, demanding a level of integration that covers everything from physical cooling to software orchestration.

This shift defines the “AI Factory,” a vision where the infrastructure operates as an integrated appliance rather than a collection of parts. When compute, networking, and power management are designed in silos, the resulting inefficiencies create massive bottlenecks that hinder the training of large-scale models. By unifying these layers, the platform ensures that data flows are optimized at every turn. This allows enterprises to deploy massive computational power with the same ease as installing a single server, transforming the data center into a high-output production line for digital intelligence.

The Architecture of Power: Deconstructing the Vera Rubin Ecosystem

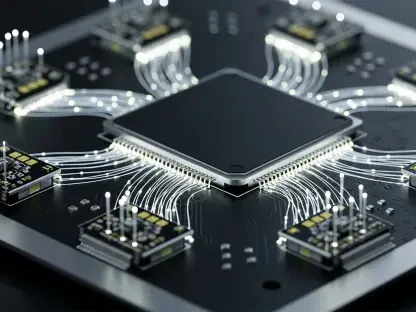

At the heart of this new ecosystem lies the synergy between the next-generation Vera CPU and the Rubin GPU. These core processing engines are not merely faster than their predecessors; they are architecturally intertwined to handle the data-heavy demands of trillion-parameter models. The Vera CPU takes on the role of a sophisticated conductor, managing the complex logic and data preparation tasks that allow the Rubin GPU to remain focused on raw mathematical throughput. This coordination reduces the “starvation” of processing cores, ensuring that every cycle is dedicated to meaningful computation.

To support this massive processing power, the platform utilizes high-speed fabrics like NVLink 6 and the Spectrum-6 Ethernet switch. These interconnects function as the nervous system of the rack, eliminating traditional bottlenecks that previously throttled data transfer between nodes. Furthermore, the inclusion of BlueField-4 DPUs and ConnectX-9 SuperNICs offloads critical tasks such as security, storage management, and network telemetry from the primary processors. This offloading strategy maximizes overall system efficiency, allowing the primary engines to operate at peak capacity without being bogged down by background administrative overhead.

Networking as the New Performance Frontier

In the current landscape, the primary performance bottleneck has shifted from raw GPU clock speeds to interconnect bandwidth. Even the most powerful processor becomes a liability if it must wait for data to arrive from a distant node. Consequently, networking has emerged as the strategic centerpiece of the modern AI data center. The ability to move petabytes of data across a cluster with minimal latency is now the defining characteristic of a high-performance environment, making the choice of fabric as important as the choice of silicon.
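A rough worked example makes the bandwidth argument concrete. The sketch below uses the standard bandwidth-only estimate for a ring all-reduce, in which each of N workers transfers 2(N-1)/N of the payload. The payload size, worker count, link speeds, and compute time are illustrative assumptions, not figures for any Rubin-generation fabric, and the model ignores latency and any overlap of communication with compute.

```python
def ring_allreduce_seconds(payload_bytes: float, workers: int,
                           link_bytes_per_s: float) -> float:
    """Bandwidth-only estimate of one ring all-reduce (latency ignored)."""
    if workers < 2:
        return 0.0  # a single worker has nothing to exchange
    # Each worker sends and receives 2 * (N - 1) / N of the payload.
    return 2 * (workers - 1) / workers * payload_bytes / link_bytes_per_s


def gpu_utilization(compute_s: float, comm_s: float) -> float:
    """Fraction of a step spent computing when communication is not overlapped."""
    return compute_s / (compute_s + comm_s)


# Illustrative numbers only: 10 GB of gradients, 8 workers, 0.5 s of compute.
compute_s = 0.5
for link_gb_per_s in (100, 200):
    comm_s = ring_allreduce_seconds(10e9, 8, link_gb_per_s * 1e9)
    print(f"{link_gb_per_s} GB/s link: comm {comm_s:.4f} s, "
          f"utilization {gpu_utilization(compute_s, comm_s):.1%}")
```

Under these assumptions, doubling the link speed halves the communication time and lifts utilization without touching the processor at all, which is the sense in which the fabric matters as much as the silicon.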

To achieve this, the industry has moved toward deterministic networking, where advanced Ethernet architectures rival the performance of specialized proprietary fabrics. Strategies such as programmable congestion control and adaptive routing are now essential for keeping GPU utilization close to 100% in distributed environments. By ensuring that data packets take the most efficient path through the network, the platform minimizes “tail latency” and prevents the synchronization delays that often plague large-scale AI training runs.
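Why tail latency dominates at scale can be shown with a few lines of arithmetic. In a synchronous training step every worker waits for the slowest one, so even a rare slow transfer delays almost every step once the cluster is large. The sketch below assumes slow transfers are independent across workers, and the 1% probability and the timings are made-up values for illustration.

```python
def prob_step_delayed(workers: int, p_slow: float) -> float:
    """Chance that at least one of `workers` independent transfers is slow."""
    return 1.0 - (1.0 - p_slow) ** workers


def sync_step_seconds(worker_seconds: list[float]) -> float:
    """A synchronous step ends only when the slowest worker finishes."""
    return max(worker_seconds)


def mean_utilization(worker_seconds: list[float]) -> float:
    """Average fraction of the step each worker spends busy rather than waiting."""
    step = sync_step_seconds(worker_seconds)
    return sum(worker_seconds) / (len(worker_seconds) * step)


# Assumed: each worker has a 1% chance of a slow transfer in any given step.
for n in (1, 100, 1000):
    print(f"{n} workers: {prob_step_delayed(n, 0.01):.2%} of steps delayed")

# One straggler at 3 s among three workers at 1 s drags everyone down.
print(mean_utilization([1.0, 1.0, 1.0, 3.0]))
```

Congestion control and adaptive routing target exactly this effect: they do not make the average transfer much faster, but they narrow the gap between the typical worker and the slowest one.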

Solving the Power and Density Crisis

The physical reality of AI scaling has brought the industry face-to-face with the 100 kW rack challenge. As thermal and electrical demands reach unprecedented levels, traditional air-cooling and power distribution methods are no longer sufficient to sustain growth. Modern deployments require a radical rethinking of how energy is delivered and heat is removed. This crisis has forced a transition toward “chip-to-grid” strategies, where the infrastructure is designed to maximize capacity within the strict confines of fixed power constraints and environmental regulations.

Innovative solutions like the DSX platform have become vital for increasing infrastructure density without exceeding power limits. By integrating liquid cooling directly into the rack design and utilizing onsite power generation, organizations can mitigate the energy bottlenecks that threaten to stall expansion. This sustainable scaling approach not only reduces the carbon footprint of the data center but also lowers the total cost of ownership by improving the efficiency of energy conversion and thermal management.
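The link between cooling efficiency and density under a fixed power envelope can be sketched with Power Usage Effectiveness (PUE), the ratio of total facility power to IT power. The function below is a back-of-the-envelope model, not part of the DSX platform, and the 10 MW budget and both PUE values are assumptions chosen only to illustrate the effect. The 100 kW rack figure comes from the discussion above.

```python
import math


def racks_supported(facility_kw: float, rack_kw: float, pue: float) -> int:
    """Whole racks that fit in a fixed facility power budget at a given PUE."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    it_kw = facility_kw / pue  # power left for IT load after overhead
    return math.floor(it_kw / rack_kw)


# Assumed: a 10 MW facility, 100 kW racks, and two hypothetical PUE values.
for label, pue in (("higher-overhead cooling", 1.5), ("lower-overhead cooling", 1.1)):
    print(f"{label} (PUE {pue}): {racks_supported(10_000, 100, pue)} racks")
```

With the grid connection held constant, every reduction in cooling and conversion overhead translates directly into additional racks, which is why thermal design is treated here as a capacity question rather than a facilities afterthought.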

Strategic Frameworks for Enterprise Adoption

Enterprises now face a critical decision: the tradeoff between efficiency and vendor lock-in. While proprietary, highly integrated platforms offer unmatched performance and ease of deployment, they also tether an organization to a specific vendor’s ecosystem. CIOs must determine when the gains in computational throughput outweigh the need for multi-vendor flexibility. For many, the hyperscale blueprint offers a middle ground, allowing organizations to leverage the massive Rubin-class infrastructure of providers like AWS, Azure, and Google Cloud without the capital expenditure of building their own facilities.

Future-proofing the data center now requires a new set of skills focused on AI-native system engineering and high-density operations. Teams must transition from traditional IT management to a model that emphasizes workload orchestration and “chip-to-grid” power optimization. As the technology continues to evolve, the ability to manage these complex, unified environments will become the primary differentiator for companies seeking to capitalize on the next wave of autonomous and agentic intelligence.

In the final assessment, the transition to the Rubin platform requires a fundamental re-evaluation of how digital infrastructure is valued. Organizations that embrace the shift move toward a model where hardware is no longer viewed as a depreciating asset but as a dynamic engine of innovation. These early adopters integrate liquid cooling and deterministic networking into their core operations, aiming to effectively double their throughput within the same physical footprint. The path to sustainable AI scaling lies in the mastery of full-stack integration rather than the pursuit of individual component milestones.