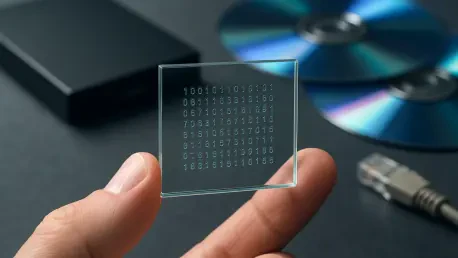

The global volume of digital information is currently expanding at a rate that far outpaces the physical capacity of our most advanced data centers, creating a silent crisis in archival sustainability. Traditional storage mediums like magnetic tape and hard disk drives are essentially “living” systems that require constant energy, climate control, and expensive hardware migrations every few years to prevent data loss. Optical glass storage, specifically the advancements seen in Project Silica, offers a departure from this cycle of obsolescence by utilizing the molecular stability of glass to house data for millennia. This technology shifts the focus from temporary, high-maintenance storage to a permanent, immutable infrastructure that could redefine how humanity preserves its digital legacy.

The Emergence of Glass-Based Storage Technology

The core principle of this technology relies on the interaction between ultra-short femtosecond laser pulses and a resilient borosilicate medium. Unlike traditional optical discs that store data on or near the surface, this method writes information deep within the bulk of the glass, creating a three-dimensional data map that is highly resistant to physical and environmental damage. This approach addresses the “data deluge” by providing a dense, passive storage solution designed not to degrade over time, in contrast to flash memory, which is non-volatile but relies on stored charge that gradually leaks when the device sits unpowered for years.

In the contemporary technological landscape, glass-based systems are positioned as the ultimate sustainable alternative to magnetic media. While data centers currently consume vast amounts of electricity to keep servers cool and tapes moving, glass storage is entirely passive once written. It requires no power to maintain the integrity of the information, making it a critical tool for organizations aiming to reach net-zero carbon goals while managing petabytes of historical records.

Core Technical Components and Data Encoding Mechanics

Femtosecond Laser Writing and Voxel Formation

The primary method of data entry involves a femtosecond laser that fires pulses lasting on the order of quadrillionths of a second. These pulses do not simply burn the glass; they induce a localized change in the refractive index, creating structures known as voxels. By manipulating the intensity and polarization of the laser, engineers can create “phase voxels,” which allow multiple bits of data to be stored in the same physical space. This multi-dimensional encoding is what allows glass to achieve the density required for modern enterprise applications.
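To make the idea of storing several bits in one voxel concrete, here is a minimal sketch in Python. It assumes a purely hypothetical scheme in which each voxel can take one of 4 orientations and 2 strengths (8 states, so 3 bits per voxel); the function names and parameters are illustrative only and do not describe the actual Project Silica encoding.

```python
def bytes_to_voxels(data: bytes, orientations: int = 4, strengths: int = 2):
    """Map bytes to (orientation, strength) symbols under a hypothetical scheme."""
    states = orientations * strengths
    bits_per_voxel = states.bit_length() - 1
    if 2 ** bits_per_voxel != states:
        raise ValueError("number of voxel states must be a power of two")
    # Flatten the payload into one bit string, then pad to a whole number of voxels.
    bits = "".join(f"{b:08b}" for b in data)
    bits += "0" * ((-len(bits)) % bits_per_voxel)
    voxels = []
    for i in range(0, len(bits), bits_per_voxel):
        symbol = int(bits[i:i + bits_per_voxel], 2)
        voxels.append(divmod(symbol, strengths))  # (orientation index, strength index)
    return voxels


def voxels_to_bytes(voxels, n_bytes: int, orientations: int = 4, strengths: int = 2):
    """Invert bytes_to_voxels, discarding any padding bits."""
    bits_per_voxel = (orientations * strengths).bit_length() - 1
    bits = "".join(f"{o * strengths + s:0{bits_per_voxel}b}" for o, s in voxels)
    return bytes(int(bits[i:i + 8], 2) for i in range(0, n_bytes * 8, 8))
```

With these assumed defaults, a two-byte payload (16 bits) is padded to 18 bits and occupies six voxels rather than sixteen, which is the density gain the multi-state approach is after.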

Material Evolution: From Fused Silica to Borosilicate Glass

The shift from expensive fused silica to common borosilicate glass represents a major leap in commercial viability. Borosilicate glass, famous for its thermal resistance in laboratory equipment and kitchenware, provides a cost-effective medium that can withstand extreme environmental stressors. Its ability to remain stable under high temperatures and its resistance to chemical erosion ensure that the data remains readable even if the storage facility faces catastrophic conditions like fires or floods.

Recent Innovations in Density and Ingestion Speed

Recent breakthroughs have moved beyond the experimental phase, successfully demonstrating the ability to stack hundreds of distinct data layers within a sheet of glass no thicker than a standard window pane. This vertical scaling is enhanced by birefringent voxel writing, a technique that uses the orientation of the laser-induced structures inside the glass to store even more information per point. These innovations mean a single small glass square can now compete with the capacity of a high-end mechanical hard drive, but without the mechanical failure points.
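The way layer count and bits per voxel multiply into total capacity can be sketched with simple arithmetic. The function below is a back-of-the-envelope model only; the parameter names and any values passed to it are assumptions for illustration, not published specifications, and it ignores error-correction overhead.

```python
def glass_capacity_bytes(side_mm: float, voxel_pitch_um: float,
                         layers: int, bits_per_voxel: int) -> int:
    """Estimate raw capacity of a square glass sheet (illustrative model only)."""
    # Voxels that fit along one edge at the given spacing.
    voxels_per_side = int(side_mm * 1000 / voxel_pitch_um)
    # Each layer is a 2D grid; layers stack through the thickness of the glass.
    total_voxels = voxels_per_side ** 2 * layers
    return total_voxels * bits_per_voxel // 8
```

Because capacity scales linearly with both `layers` and `bits_per_voxel`, doubling either one doubles the result, while halving `voxel_pitch_um` quadruples it.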

To solve the historical problem of slow data ingestion, researchers have implemented parallel writing systems. Instead of a single laser beam laboriously etching one voxel at a time, multi-beam arrays can now write multiple data streams simultaneously. This advancement is crucial for enterprise adoption, as it narrows the performance gap between glass and traditional archival tapes, making it feasible to ingest massive datasets from cloud providers in a reasonable timeframe.
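A rough way to see why parallel beams matter is to estimate ingest time as a function of beam count. The sketch below assumes ideal linear scaling with no coordination overhead; the function name, parameters, and sample figures are hypothetical and not measured values.

```python
def ingest_hours(dataset_bytes: int, beams: int,
                 voxels_per_second_per_beam: float, bits_per_voxel: int) -> float:
    """Estimate hours needed to write a dataset, assuming ideal parallel scaling."""
    bits_per_second = beams * voxels_per_second_per_beam * bits_per_voxel
    return dataset_bytes * 8 / bits_per_second / 3600


# Same hypothetical dataset written with one beam versus four beams.
single = ingest_hours(4_500_000_000, beams=1,
                      voxels_per_second_per_beam=10_000_000, bits_per_voxel=1)
quad = ingest_hours(4_500_000_000, beams=4,
                    voxels_per_second_per_beam=10_000_000, bits_per_voxel=1)
```

Under this idealized model, write time falls in direct proportion to the number of beams; real systems would likely see less than linear gains.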

Real-World Applications and Archival Use Cases

The media and entertainment industry has become the primary testing ground for this technology, exemplified by high-profile projects that have archived iconic cinematic works on glass intended to last for centuries. For studios, the “Superman” project was not just a stunt; it was a response to the ongoing cost of migrating digital masters every five years. By moving high-value assets to glass, these companies aim to eliminate the recurring capital expenditure associated with refreshing aging tape libraries.

Beyond Hollywood, government agencies and scientific institutions are looking at glass for “cold” storage where immutability is a legal or operational requirement. In these scenarios, data must remain accessible for hundreds of years without the risk of “bit rot” or the need for specialized legacy hardware. Glass provides a “write once, read forever” architecture that is uniquely suited for national archives and genomic data banks that require permanent preservation.

Implementation Hurdles and Market Obstacles

Despite its clear benefits, the transition to glass storage faces significant headwinds, primarily due to the high initial cost of the proprietary laser writing systems. While the glass itself is inexpensive, the precision optics and high-speed reading hardware required to retrieve the data are not yet mass-produced. This creates a high barrier to entry for smaller organizations, currently limiting the technology to hyperscale cloud providers and massive institutional archives.

Standardization remains another hurdle, as there are currently no industry-wide metrics for retrieval latency or sustained write speeds in glass media. Unlike the well-established LTO tape standards, glass storage is still operating in a fragmented ecosystem. For it to become a true enterprise staple, the industry must develop unified protocols that allow different hardware systems to read the same glass sheets, ensuring that the data is truly portable across centuries.

Future Outlook and the Impact of the AI Era

As Artificial Intelligence continues to evolve, the demand for massive, reliable datasets for model training will only grow. Glass storage is uniquely positioned to act as the long-term memory for AI, housing the colossal amounts of historical data needed to refine future algorithms. By providing a low-energy, high-density repository, glass technology could mitigate the environmental impact of the massive data centers that power modern machine learning.

Future developments are likely to focus on the democratization of reading hardware, perhaps through the use of high-resolution sensors and more efficient microscopic imaging techniques. If the hardware becomes portable or more affordable, the technology could move from the deep archives of cloud giants into specialized edge computing environments. This shift would fundamentally change the digital economy by making permanent data preservation a standard feature rather than a luxury.

Summary of the Technological Shift in Data Preservation

The development of Project Silica marks a transition from a visionary laboratory concept toward a pragmatic solution for the world’s most pressing data storage challenges. By replacing fragile magnetic particles with stable structural changes in borosilicate glass, the industry is moving toward a future where data loss due to media degradation could become a solved problem. This evolution suggests that the environmental and economic costs of data preservation can be drastically lowered through material science.

The shift ultimately concerns the long-term viability of the digital ecosystem, prioritizing durability over the raw speed of flash systems. As organizations begin to integrate glass into their archival strategies, the emphasis is moving toward building reading infrastructures that can remain functional for generations. If that effort succeeds, the vast wealth of information generated in the modern age will remain a readable resource for the future rather than being lost to a digital dark age.