The architectural foundation of global computing is undergoing a seismic realignment as the industry pivots away from general-purpose processors toward highly specialized, custom-designed silicon. This transition represents more than a technical upgrade; it is a fundamental shift in how the world’s most advanced artificial intelligence systems are built and funded. As generative AI models grow in complexity, the reliance on standard chips has created bottlenecks in power efficiency and performance, forcing major tech players to design their own hardware to maintain a competitive edge.

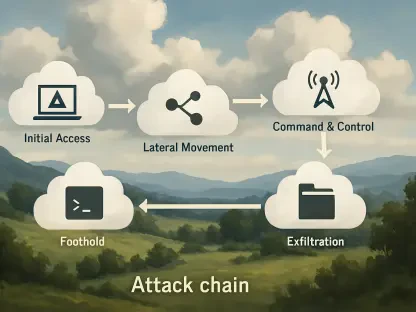

The current strategy among hyperscalers focuses on vertical integration, where hardware is precisely tuned to the specific algorithms it will execute. This trend has moved from an experimental phase into a multibillion-dollar requirement for any organization aiming to lead the next decade of digital innovation. Over the following sections, this analysis explores the market growth of custom accelerators, the massive infrastructure partnerships among leaders like Broadcom, Google, and Anthropic, and the long-term implications for the global supply chain.

The Growth and Application of Specialized AI Hardware

Market Momentum: Adoption Statistics

Recent data shows a decisive shift toward Application-Specific Integrated Circuits (ASICs) as standard GPUs struggle to meet the unique demands of large-scale transformer models. While general-purpose chips provided the early spark for the AI boom, custom accelerators now account for a rapidly increasing share of data center footprints. This adoption is driven by the need for better performance per watt, which is essential as energy costs become a primary constraint on growth.

Capital expenditure trends among hyperscalers like Google and Amazon reflect this pivot, with billions of dollars being diverted from third-party hardware toward proprietary silicon development. Industry projections suggest that the compound annual growth rate for custom AI silicon will remain in the double digits through 2030. This reallocation of investment signals a broader move toward hardware autonomy, where companies seek to control their own technological destiny rather than remaining at the mercy of external vendor cycles.

Real-World Deployment: The Google and Anthropic Case Study

The legacy of Google’s Tensor Processing Units (TPUs) serves as a blueprint for this specialized future, demonstrating how custom silicon can power the world’s largest language models with unprecedented efficiency. By tailoring hardware to the specific mathematical operations required by neural networks, Google has maintained a distinct advantage in training speed and cost-effectiveness. This success has paved the way for massive collaborative efforts that merge custom chip design with extreme-scale infrastructure.

A primary example of this scale is the landmark agreement between Broadcom and Anthropic, which aims to deploy a staggering 3.5 gigawatts of compute capacity. To put this in perspective, this physical scale represents enough power to support entire cities, all dedicated to a single AI ecosystem. Furthermore, the integration of proprietary networking components allows thousands of these custom chips to function as one cohesive supercomputer, reducing the latency issues that often plague traditional server architectures.
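To make the 3.5 gigawatt figure more concrete, the short sketch below converts a power capacity into the energy it would draw over a year. It is only an illustration: the function name and the `utilization` parameter are hypothetical, and the 80% load factor in the second call is an assumed value, not a number from the agreement.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


def annual_energy_twh(capacity_gw: float, utilization: float = 1.0) -> float:
    """Return the energy drawn in one year, in terawatt-hours.

    capacity_gw is the power capacity in gigawatts.
    utilization is a hypothetical average load factor between 0 and 1.
    """
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    # GW * hours gives GWh; divide by 1,000 to convert GWh to TWh.
    return capacity_gw * utilization * HOURS_PER_YEAR / 1000.0


if __name__ == "__main__":
    # Continuous full-load operation of the 3.5 GW cited in the text.
    print(f"{annual_energy_twh(3.5):.2f} TWh per year at full load")
    # Assumed 80% average utilization, for comparison only.
    print(f"{annual_energy_twh(3.5, 0.8):.2f} TWh per year at 80% utilization")
```

At continuous full load, 3.5 gigawatts works out to roughly 30.66 terawatt-hours per year, which is why energy availability features so prominently in discussions of deployments at this scale.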

Industry Perspectives: Vertical Integration and Resilience

Industry leaders emphasize that the move toward custom silicon is born of an “efficiency mandate” to overcome the physical thermal limits of traditional computing. As models expand, the heat generated by general-purpose hardware becomes unmanageable, making specialized designs the only viable path forward. Experts argue that without this level of hardware customization, the energy requirements of next-generation AI would eventually outpace the capacity of the modern power grid.

However, the strategy is not solely about performance; it is also about building a resilient and diverse infrastructure. CTOs at major AI firms advocate for a multi-platform approach that utilizes a mix of hardware, such as NVIDIA GPUs, Google TPUs, and AWS Trainium. This diversification prevents vendor lock-in and ensures that services remain operational even if one supply chain faces disruptions. Financial analysts note that the explosive revenue growth of companies like Anthropic provides the necessary capital to justify these unprecedented, long-term hardware commitments.

The Future of the AI Infrastructure Landscape

The evolution of the supply chain is currently being shaped by long-term agreements that stretch into the next decade, creating a more stable but highly consolidated ecosystem. These multi-year deals provide chip designers and fabricators with the predictable demand needed to invest in cutting-edge manufacturing processes. Consequently, the hardware landscape is becoming a field where only the best-capitalized players can compete, raising the barrier to entry for new startups.

Innovations in interconnect technology and rack-level liquid cooling will be the next frontier in supporting exponential workload growth. As the industry pushes against the boundaries of physics, a “trickle-down” effect will likely see custom silicon techniques move from massive data centers into edge computing and consumer devices. While this progress is rapid, the industry must still navigate significant risks, including energy availability and the complex geopolitics of semiconductor manufacturing, either of which could constrain future expansion.

Specialized hardware has ceased to be a luxury and has become a baseline requirement for any enterprise seeking to lead the AI era. The industry is prioritizing performance efficiency and supply chain security over the flexibility of general-purpose components. This movement toward custom silicon requires a new level of collaboration between chip designers and AI researchers so that hardware and software evolve together. The resulting infrastructure provides the resilience needed to sustain the current pace of innovation, and it underscores that the future of intelligence is inextricably linked to the physical silicon on which it runs.