Matilda Bailey has spent her career navigating the intricate plumbing of the digital world, specializing in the networking protocols and infrastructure shifts that define how data moves across the globe. As a veteran specialist in cellular and next-generation wireless solutions, she has a front-row seat to the massive physical buildouts required to sustain the artificial intelligence gold rush. In this conversation, we explore the strategic implications of Mistral AI’s recent $830 million debt raise and what it means for a company to transition from a software-heavy business into a hardware-intensive infrastructure player in the heart of Europe.

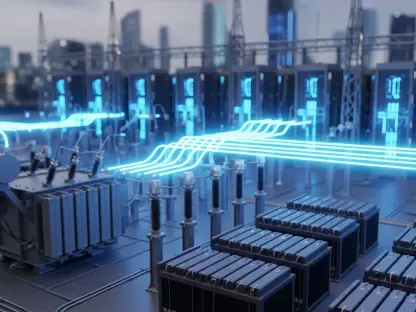

Building a massive data center near Paris with more than 13,000 GPUs involves significant technical hurdles. What are the primary logistical challenges in scaling to 200 MW of capacity by 2027, and how does owning this hardware, rather than renting it, change an organization’s long-term operational strategy?

Moving from a lean, cloud-based startup to an infrastructure owner with 13,800 Nvidia GPUs is like trading a rental car for a fleet of specialized industrial machinery; the control is exhilarating, but the maintenance is relentless. The sheer heat density of those GPUs requires a level of cooling sophistication that few existing facilities can handle, making the 200 MW target for 2027 a massive engineering lift. By choosing to own the stack in Bruyères-le-Châtel, the organization shifts from a variable operating expense to a heavy capital burden that requires consistently high utilization to justify the investment. This ownership strategy allows for deep optimization of the networking fabric, but it also tethers them to the physical site, turning a software firm into a utility-scale operator that must manage power supply and physical security with the same precision as their code.

Raising over $800 million in debt to fund infrastructure is a high-stakes move for any technology firm. How does servicing such large-scale debt impact a company’s ability to pivot during market shifts, and what specific metrics indicate whether this capital structure is sustainable for an AI-focused business?

Taking on $830 million in debt from a consortium of seven banks, including Crédit Agricole and HSBC, creates a fixed financial obligation that most startups never have to navigate. This leverage means the company must hit its $1 billion annual recurring revenue target with little margin for error, because interest payments wait for no one, even if the market shifts toward a different model architecture. We have to watch the debt-to-equity ratio and enterprise deal cycles very closely; if those cycles slow down or margins tighten under competition, that financing turns from a growth engine into a heavy anchor. Ultimately, the sustainability of this capital structure is signaled by the company’s “infrastructure operations muscle”: its ability to convert raw compute power into reliable, billable enterprise services without overhead costs spiraling out of control.

Open-weight models are often marketed as a middle ground between closed systems and pure open source. How do these models specifically address European regulatory and data residency requirements, and what steps must a firm take to ensure these models provide enough customization to lure clients away from hyperscalers?

Open-weight models serve as a critical bridge for European industries that are increasingly wary of the “black box” nature of American hyperscalers. By releasing the model weights, a company allows an enterprise to run the AI on its own local infrastructure, ensuring that sensitive data never crosses the Atlantic or leaves a specific jurisdiction. To truly lure clients away from the convenience of AWS or Google, the firm must offer customized AI environments that go beyond simple fine-tuning and integrate deeply with proprietary datasets. This geographic control is a calculated move to become the “sovereign choice” for regulated industries like healthcare and finance, where regulatory exposure makes American cloud providers politically inconvenient.

There is a noticeable tension between seeking regional AI sovereignty and relying on American-made chips for raw compute power. In what ways can a company mitigate supply chain risks while pursuing geographic autonomy, and how might this dependency influence the development of indigenous hardware within the next decade?

It is a striking paradox to talk about European sovereignty while placing a massive order for thousands of high-end chips from a California-based giant like Nvidia. To reduce the risk of being at the mercy of a single US-centric supply chain, a company has to diversify its technical partnerships and perhaps look toward the burgeoning field of energy-aware AI or alternative architectures. This dependency is a loud wake-up call for the European Union; seeing more than $800 million in debt raised to build infrastructure around American silicon will likely accelerate the push for indigenous hardware development over the next decade. For now, the focus is on owning the “sovereign AI infrastructure layer”, meaning the data centers and the operational stack, while the continent waits for its own chip-making capabilities to catch up with the raw compute demands of generative models.

High-profile AI startups in Europe are securing billions in funding, yet they remain small compared to US-based giants. What are the practical advantages of being a leaner, geography-focused player in this space, and how do localized data center buildouts influence the way governments and regulated industries choose their partners?

While a $2.9 billion total funding pool sounds modest next to OpenAI’s $180 billion war chest, being a leaner player allows for an agility that the giants often lack, particularly in aligning with local policy. Governments and research institutions aren’t just looking for the biggest model; they want partners who understand the specific nuances of European data residency and who are willing to build physical sites like the one coming online in the second quarter of 2026. This localized buildout acts as a physical guarantee of compliance, making these firms the preferred partners for public sector projects that demand high levels of oversight. By treating the sovereign narrative as a “defensible wedge,” a smaller player can dominate a regional market by being the only provider that truly speaks the language of the local regulator and the local power grid.

What is your forecast for sovereign AI infrastructure in Europe?

I expect we will see a rapid transition where sovereign AI infrastructure becomes the standard requirement for all public sector and high-security enterprise work across the continent. Over the next three to five years, the “utility-scale” data center buildout will accelerate, moving beyond experimental capacity to a point where Europe owns the full operational stack of its digital intelligence. We are moving toward a fractured but more secure global AI landscape where “geography and control” matter just as much as “raw compute power,” and the companies that own the physical facilities in places like Paris or Berlin will be the ones holding the keys to the kingdom. If these firms can successfully service their debt while hitting their capacity targets, the era of absolute dominance by American cloud hyperscalers in Europe will likely begin to fade.