The rapid expansion of generative artificial intelligence is forcing a fundamental rethink of how global data centers and telecommunications backbones handle an unprecedented surge in data traffic. As hyperscalers and cloud providers accelerate construction of large AI clusters, traditional approaches to optical networking are running into physical and environmental limits that could stall progress. Ciena has responded by unveiling a suite of innovations designed to support these connectivity requirements through the end of the decade. The focus has shifted from simply increasing bandwidth to building a holistic ecosystem that prioritizes hardware density and energy efficiency. By addressing bottlenecks in fiber management and power consumption, the industry is moving toward a model in which optical transport can keep pace with the rapid growth of GPU-driven workloads. This evolution is necessary because existing infrastructure was never designed for the persistent, high-density traffic patterns that modern AI training now generates every day.

The Innovation of Hyper-Rail: Scaling Density and Efficiency

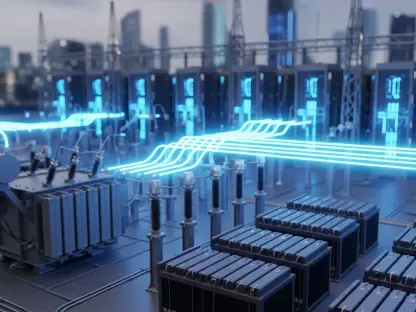

Central to this strategy is a hyper-rail photonics architecture that substantially changes the physical layout of modern data center interconnects. It addresses the shortage of space and cooling capacity by increasing fiber density thirty-two-fold compared with standard configurations in operation today. Packing up to 128 fiber pairs into a single rack, the architecture cuts power consumption by 75% and reduces the physical footprint by 85%. Efficiencies of this kind are no longer optional for operators struggling to scale within the limits of existing real estate and power grids. The added density also enables more direct, high-capacity links between AI compute nodes, which lowers latency and keeps traffic moving efficiently across the network fabric. In addition, consolidating connections into high-density systems simplifies the complex cabling environments that often cause operational errors and maintenance delays in large-scale deployments.
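To make the quoted percentages concrete, the minimal sketch below applies them to a hypothetical baseline deployment. It assumes the 75% and 85% reductions apply uniformly to a deployment's total power draw and floor space, and that racks are filled to the stated maximum of 128 fiber pairs. The baseline figures and the helper names (`Deployment`, `apply_hyper_rail`, `racks_needed`) are illustrative inventions, not part of any Ciena product or API.

```python
from dataclasses import dataclass

# Figures quoted in the article for the hyper-rail architecture.
POWER_REDUCTION = 0.75      # power consumption cut by 75%
FOOTPRINT_REDUCTION = 0.85  # physical footprint cut by 85%
MAX_FIBER_PAIRS_PER_RACK = 128


@dataclass
class Deployment:
    """A hypothetical interconnect deployment (baseline values are illustrative)."""
    power_kw: float
    footprint_m2: float


def apply_hyper_rail(baseline: Deployment) -> Deployment:
    """Scale a baseline by the quoted reductions, assuming they apply uniformly."""
    return Deployment(
        power_kw=baseline.power_kw * (1 - POWER_REDUCTION),
        footprint_m2=baseline.footprint_m2 * (1 - FOOTPRINT_REDUCTION),
    )


def racks_needed(fiber_pairs: int) -> int:
    """Minimum racks to house `fiber_pairs` at the stated maximum density."""
    return -(-fiber_pairs // MAX_FIBER_PAIRS_PER_RACK)  # ceiling division


if __name__ == "__main__":
    # Hypothetical baseline: 100 kW of optical transport in 40 m² of floor space.
    upgraded = apply_hyper_rail(Deployment(power_kw=100.0, footprint_m2=40.0))
    print(round(upgraded.power_kw, 1), round(upgraded.footprint_m2, 1))  # -> 25.0 6.0
    print(racks_needed(1_000))  # -> 8
```

The reductions are modeled as simple multiplicative factors because that is all the quoted figures support; a real capacity plan would also account for cooling overhead and partially filled racks.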

Beyond the physical infrastructure, the move to 1.6 Tb/s coherent pluggable optics, enabled by advanced 2nm silicon design, represents a significant leap in transmission capacity. These next-generation modules are engineered to deliver the throughput needed to synchronize geographically distributed data centers without sacrificing thermal efficiency. As the industry transitions from 800G to 1.6T, the priority is to maintain signal integrity over longer distances while lowering the cost per bit. Because the modules are pluggable, service providers can upgrade existing hardware incrementally and add capacity as demand dictates. The same trend is visible across the industry, where competitors such as Cisco are also seeing a sharp rise in infrastructure orders. This surge in demand for high-capacity solutions points to a collective shift toward a standardized, high-performance optical layer that can sustain the global AI boom through 2028 and beyond.
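The cost-per-bit argument can be sketched with simple arithmetic. Assuming a fixed aggregate capacity between sites, doubling per-module capacity halves the number of pluggables required, and cost per bit falls whenever a 1.6T module costs less than twice as much as an 800G module. The 12.8 Tb/s aggregate and the 1.5x price ratio below are hypothetical inputs chosen for illustration, not published pricing, and the function names are illustrative.

```python
def modules_needed(aggregate_gbps: int, module_gbps: int) -> int:
    """Number of pluggables needed to carry an aggregate capacity (rounded up)."""
    return -(-aggregate_gbps // module_gbps)  # integer ceiling avoids float error


def relative_cost_per_bit(price_ratio: float, capacity_ratio: float) -> float:
    """Cost per bit of the new module relative to the old one.

    price_ratio:    new module price / old module price (hypothetical input)
    capacity_ratio: new module capacity / old module capacity
    """
    return price_ratio / capacity_ratio


if __name__ == "__main__":
    aggregate = 12_800  # hypothetical 12.8 Tb/s between two sites, in Gb/s
    print(modules_needed(aggregate, 800))    # -> 16
    print(modules_needed(aggregate, 1_600))  # -> 8
    # Assumed: a 1.6T module costs 1.5x an 800G module, so cost per bit drops 25%.
    print(relative_cost_per_bit(1.5, 1_600 / 800))  # -> 0.75
```

Capacities are kept in integer Gb/s so the ceiling division is exact; a ratio of 1.0 or higher would mean the upgrade saves ports but not cost per bit.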

A sophisticated software layer is equally vital for managing these hardware advances, which has led to the rise of agentic AI within network management platforms. These autonomous systems use intelligent agents that make real-time decisions about routing, optimization, and fault detection without human intervention. With digital twin validation, operators can test network changes in a virtual model before deployment, significantly reducing the risk of scaling up operations. Self-managing networks are becoming a standard requirement for hyperscalers, which must manage thousands of nodes simultaneously across diverse environments. Rather than following static scripts, these agents analyze large datasets to predict potential bottlenecks and adjust capacity dynamically. This level of automation is essential for maintaining the uptime that continuous AI training and inference require. Integrating intelligent software agents yields a more resilient architecture that can recover from failures rapidly, keeping critical data pipelines open and efficient.
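Digital twin validation can be illustrated with a minimal sketch: a proposed change is applied to a copy of the network model, every traffic demand is checked for a path with enough spare capacity, and the live model is changed only if all checks pass. This is a simplified stand-in, assuming a toy topology, undirected links, and greedy single-path breadth-first routing; the names `link`, `find_path`, `validate_change`, and `apply_if_safe` are hypothetical and do not reflect how any vendor's platform actually works.

```python
from __future__ import annotations

from collections import deque


def link(a: str, b: str) -> tuple[str, str]:
    """Normalize an undirected link so (A, B) and (B, A) share one key."""
    return (a, b) if a <= b else (b, a)


def find_path(cap: dict[tuple[str, str], int], src: str, dst: str,
              gbps: int) -> list[tuple[str, str]] | None:
    """Breadth-first search using only links with at least `gbps` spare capacity."""
    adj: dict[str, list[str]] = {}
    for (a, b), spare in cap.items():
        if spare >= gbps:
            adj.setdefault(a, []).append(b)
            adj.setdefault(b, []).append(a)
    prev: dict[str, str | None] = {src: None}
    queue = deque([src])
    while queue:
        node = queue.popleft()
        if node == dst:
            hops = []  # walk back from dst to src, then reverse
            while prev[node] is not None:
                hops.append((prev[node], node))
                node = prev[node]
            return hops[::-1]
        for nxt in adj.get(node, []):
            if nxt not in prev:
                prev[nxt] = node
                queue.append(nxt)
    return None


def validate_change(live: dict[tuple[str, str], int],
                    demands: list[tuple[str, str, int]],
                    links_down: list[tuple[str, str]]) -> bool:
    """Apply the change to a copy (the 'twin') and check every demand still fits."""
    twin = dict(live)  # shallow copy is enough: values are ints
    for a, b in links_down:
        twin.pop(link(a, b), None)
    for src, dst, gbps in demands:
        path = find_path(twin, src, dst, gbps)
        if path is None:
            return False
        for a, b in path:
            twin[link(a, b)] -= gbps  # reserve capacity along the chosen path
    return True


def apply_if_safe(live: dict[tuple[str, str], int],
                  demands: list[tuple[str, str, int]],
                  links_down: list[tuple[str, str]]) -> bool:
    """Commit the change to the live model only if the twin validates it."""
    if not validate_change(live, demands, links_down):
        return False
    for a, b in links_down:
        live.pop(link(a, b), None)
    return True


if __name__ == "__main__":
    # Hypothetical three-site triangle, 1,600 Gb/s spare on each link.
    net = {link("A", "B"): 1600, link("B", "C"): 1600, link("A", "C"): 1600}
    demands = [("A", "C", 800), ("A", "B", 1200)]
    # Taking A-C down forces A-C traffic via B, leaving too little room on A-B.
    print(apply_if_safe(net, demands, [("A", "C")]))  # -> False
    print(link("A", "C") in net)                      # -> True (live model untouched)
```

Greedy routing in a fixed order can reject changes that a smarter allocation would accept; it is used here only to keep the example short, and a real validator would likely rely on a fuller traffic-engineering model.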

The convergence of high-capacity 1.6 Tb/s hardware and autonomous management software sets a new benchmark for the telecommunications industry. Organizations that adopt these dense, self-healing architectures position themselves to thrive in a landscape where data demands keep growing exponentially. Looking ahead, the emphasis is shifting from basic connectivity to sustainable performance, which calls for renewable energy integration and even more compact hardware designs. Implemented strategically, these technologies offer a clear roadmap for scaling networks while controlling operational costs and environmental impact. For decision-makers, the priority is rapid modernization of legacy infrastructure so that connectivity bottlenecks do not hold back AI development. As clusters continue to grow in size and complexity, the pivot toward energy-efficient, automated solutions appears to be the most viable path to long-term growth. By combining advanced materials science with intelligent automation, the industry is positioning itself to meet the challenges of a data-driven world while maintaining high levels of reliability and speed.