The Dawn of the Infrastructure Era in Artificial Intelligence

The hum inside the world’s most advanced data centers is changing pitch, from the drone of general-purpose processors to the roar of custom silicon engineered for the intelligence age. The global artificial intelligence landscape is undergoing a major transformation, moving from a dispersed experimental phase to a highly centralized era of infrastructure dominance. At the heart of this evolution is the concentration of “compute”: the raw processing power required to train and run complex AI models. As the industry matures, the ability to command vast hardware resources has become the primary differentiator between market leaders and followers.

Recent analysis from research institutions like Epoch AI and Synergy Research Group has confirmed a pivotal milestone: Google has emerged as the world’s largest single owner of AI compute capacity. This shift signals a departure from the days when software innovation alone could disrupt the market. Today, the physical layer of the technology stack dictates the pace of progress. By examining the transition from third-party hardware to custom silicon and the move from on-premises data centers to the cloud, it becomes clear how strategic maneuvers in hardware engineering have placed Google at the summit of the global AI arms race.

From Experimental Labs to Hyperscale Dominance

To grasp the significance of the current lead in compute, one must look at the foundational shifts in the industry over the last few years. Historically, AI development was a software-centric pursuit where hardware was often treated as a secondary commodity. However, the rise of massive Large Language Models (LLMs) changed the mathematical requirements for success. The industry moved rapidly from needing standard server racks to requiring colossal clusters of specialized processors capable of performing trillions of calculations per second without thermal failure.

This background is essential because it explains why the barrier to entry in AI has become so high. In the past, a startup could innovate with modest hardware; today, “frontier” models require billions of dollars in infrastructure before a single training run begins. Google’s early recognition of this trend allowed it to build a foundation that is now paying significant dividends. These historical investments in physical infrastructure, including data centers, advanced cooling systems, and transcontinental undersea cables, matter because they provide the necessary “shelf space” for the millions of chips that now power the global digital economy.

The Architecture of Power: Silicon Autonomy and Market Reach

The Proprietary Advantage: Tensor Processing Units

A critical aspect of Google’s current market dominance is its decoupling from the external hardware ecosystem. While much of the tech industry remains in a frantic scramble to secure third-party GPUs, internal hardware refinement has created a large buffer against supply chain volatility. Recent estimates put Google’s holdings at approximately 5 million “H100-equivalent” units of compute. Most notably, 4 million of these units, roughly 80 percent, come from its proprietary Tensor Processing Units (TPUs), so its reliance on outside vendors is significantly lower than that of major competitors.

This internal development provides a rare level of strategic autonomy. By building custom silicon, a company avoids the premiums charged by third-party vendors and can tune its hardware closely to specific software stacks, such as the Gemini family of models. This vertical integration is a major benefit: while competitors are subject to external supply constraints and fluctuating pricing, an integrated provider can scale infrastructure on its own roadmap. That, in turn, lets it offer AI services at price points that would be prohibitively expensive for providers renting hardware or buying it at retail margins.

The Centralization: Global Data Center Ownership

Building on the hardware advantage is the physical reality of where this compute capacity resides. Data indicates that hyperscale operators now control nearly half of all worldwide data center capacity. This is a radical departure from the period leading up to 2026, when a larger portion of capacity resided in on-premises enterprise facilities. The trend toward cloud-based centralization is accelerating, with hyperscalers expected to own two-thirds of global capacity within the next five years.

This shift presents both large opportunities and significant geopolitical risks. Becoming the “landlord” of the AI era provides enormous market leverage, but the concentration of infrastructure has raised concerns about market control and fueled interest in “Sovereign AI.” Nations are now seeking to build localized AI stacks to maintain data sovereignty, fearing that total dependence on a few providers could compromise national security or economic independence. This emerging AI nationalism is a direct response to the gravitational pull exerted by the largest infrastructure owners.

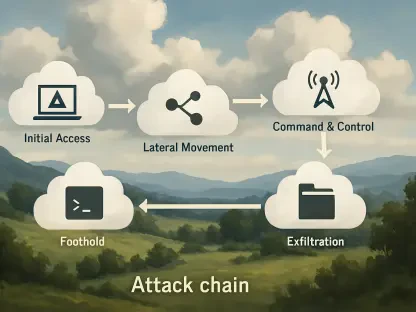

The Evolution: From Model Training to Inference

As the industry moves past initial hype cycles, a new complexity is emerging: a shift in emphasis from “training” to “inference.” Training a model demands the massive parallel processing power at which GPUs have traditionally excelled, whereas inference, the act of a user actually interacting with an AI, depends on a different set of efficiencies tied to latency and power consumption. This is where the landscape could shift again, because inference workloads can run on a wider variety of specialized chips.

Diversified hardware portfolios, with some chips tuned for training and others for serving, address these often-overlooked stages of the AI lifecycle. A common misunderstanding about the AI race is that whoever wins the training phase wins the entire market. In reality, the long-term winner will likely be the entity that serves inference at the lowest cost and highest speed to billions of end users. Custom accelerators are increasingly optimized for these high-volume, low-latency tasks, offering a path toward sustainable profitability that raw training power alone cannot guarantee.

Anticipating the Future of AI Compute

Looking ahead, several emerging trends will shape the next decade of digital intelligence. A significant move toward “edge compute” is expected, in which smaller, more efficient versions of models run on local devices rather than in massive centralized data centers. Furthermore, regulatory changes regarding energy consumption and carbon footprints will affect how the next generation of “gigawatt-scale” facilities is built. The ability to innovate in liquid cooling and renewable energy integration will become as important as the silicon itself.

Predictions suggest that the economic landscape will shift from a “compute-scarce” environment to a “compute-abundant” one as more custom silicon enters the market. This change could sharply lower the cost of intelligence, making AI a standard feature of every software application rather than a premium add-on. As these technological and economic shifts unfold, the ability to iterate on custom chip designs is likely to be the primary factor in maintaining a lead over other hyperscale cloud providers that remain more dependent on general-purpose hardware.

Strategic Takeaways for the Enterprise

The consolidation of AI compute represents a double-edged sword for businesses and professionals navigating this new reality. To manage this landscape effectively, stakeholders should consider the following strategic maneuvers:

- Evaluate Model Portability: Avoid becoming tethered to a single provider’s proprietary hardware ecosystem by favoring models, frameworks, and deployment pipelines that can be migrated between cloud environments.

- Focus on Inference Costs: When planning long-term projects, calculate the costs of running the model at scale for millions of users rather than just the initial training expenditures.

- Adopt an Architecture-Agnostic Approach: Rather than following industry trends blindly, companies should evaluate their specific needs, such as memory bandwidth and latency, to choose the most cost-effective infrastructure provider.
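As a rough illustration of the inference-cost point, the sketch below compares a one-time training bill with cumulative serving costs over a planning horizon. Every name and number in it (`CostAssumptions`, `lifetime_cost`, the per-token price, the traffic figures) is a hypothetical placeholder rather than a quoted price from any provider, and it assumes a simple blended per-token price and 30-day months.

```python
from dataclasses import dataclass


@dataclass
class CostAssumptions:
    """Hypothetical planning inputs (placeholders, not real prices)."""
    training_cost_usd: float         # one-time cost to train or fine-tune the model
    price_per_1k_tokens_usd: float   # assumed blended serving price per 1,000 tokens
    tokens_per_request: int          # average prompt + completion tokens per request
    requests_per_user_per_day: float
    daily_active_users: int
    months: int                      # planning horizon


def lifetime_cost(a: CostAssumptions) -> dict:
    """Compare the one-time training bill with cumulative inference spend."""
    daily_tokens = a.daily_active_users * a.requests_per_user_per_day * a.tokens_per_request
    daily_inference_usd = daily_tokens / 1_000 * a.price_per_1k_tokens_usd
    # Approximate each month as 30 days to keep the arithmetic simple.
    inference_usd = daily_inference_usd * 30 * a.months
    total_usd = a.training_cost_usd + inference_usd
    return {
        "training_usd": a.training_cost_usd,
        "inference_usd": inference_usd,
        "inference_share": inference_usd / total_usd,
    }


if __name__ == "__main__":
    # Placeholder figures for illustration only; not real prices or traffic.
    scenario = CostAssumptions(
        training_cost_usd=2_000_000,
        price_per_1k_tokens_usd=0.002,
        tokens_per_request=1_000,
        requests_per_user_per_day=10,
        daily_active_users=1_000_000,
        months=24,
    )
    result = lifetime_cost(scenario)
    print(f"Training:  ${result['training_usd']:,.0f}")
    print(f"Inference: ${result['inference_usd']:,.0f}")
    print(f"Inference share of total: {result['inference_share']:.0%}")
```

With these made-up inputs, two years of serving comes to about $14.4 million against a $2 million training bill, so inference is roughly 88 percent of the total. The figures themselves mean nothing. What matters is the shape of the calculation: plugging in a provider's actual pricing and expected traffic shows quickly whether serving or training dominates the budget.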

By applying these insights, organizations can leverage the power of hyperscale infrastructure without losing their competitive agility or falling victim to extreme vendor lock-in.

The Long-Term Significance of Compute Leadership

This look at compute leadership shows that dominance in the AI sector is the result of a multi-year strategy centered on custom silicon and massive physical infrastructure. By reducing reliance on external vendors and building one of the largest data center footprints in the market, Google has established a vertically integrated powerhouse that remains difficult for any competitor to match. The physical layer of AI, meaning the actual chips and servers, acts as the ultimate gatekeeper for software innovation.

Strategic planning for the future requires a focus on efficiency rather than just raw capacity. Organizations that prioritize flexible architectures and inference optimization are likely to be better positioned than those that focus solely on training scale. The shift toward specialized hardware designs underscores the need to move beyond general-purpose computing. Ultimately, the battle for the future is likely to be won by those who translate raw processing power into accessible, cost-effective solutions for the global market, making AI a ubiquitous utility rather than a scarce luxury.