The digital payment landscape in 2026 demands a level of precision that traditional batch processing systems frequently struggle to provide amidst explosive transaction growth, especially when reconciling instantaneous authorizations with massive settlement files. While modern financial engineering teams initially turn to cloud-native, event-driven architectures for their inherent elasticity and low operational overhead, these systems often encounter a significant performance ceiling as transaction volumes scale beyond the capacity of standard serverless functions. This tension creates a critical architectural crossroads where the promise of “zero-ops” meets the reality of strict execution limits and database throughput bottlenecks. Scaling a reconciliation pipeline to handle over a million daily transactions requires more than just provisioning additional resources; it necessitates a fundamental shift toward a hybrid model that preserves the benefits of serverless while introducing the robustness of containerized compute to manage the heavy lifting of high-volume financial data processing.

Addressing Computational Limits and Database Bottlenecks

Overcoming the Execution Ceiling: The Timeout Trap

The primary technical hurdle encountered during the scaling process involves the rigid fifteen-minute execution window imposed by standard serverless compute environments like AWS Lambda. In a financial reconciliation context, processing involves parsing massive, fixed-width settlement files that contain hundreds of thousands of records, each requiring normalization and validation against internal ledgers. When a file exceeds a specific size threshold, the serverless function inevitably hits its runtime limit and terminates abruptly, leaving the task incomplete. This creates a dangerous “failure spiral” where the orchestration engine triggers a retry based on standard policies, but without granular checkpointing, the system simply restarts the same resource-intensive process from the beginning. Such a loop consumes significant compute cycles while failing to make any actual progress, leading to a growing backlog of unprocessed files that starves downstream services and risks breaching critical service level agreements.

Building on this foundational challenge, the lack of persistent state during long-running parsing tasks makes it nearly impossible to resume work from the point of failure within a standard serverless function. Every time a function times out, the in-memory progress from those fifteen minutes is lost, while any records already written remain in the database; the retry then repeats those writes and pays for the same compute again. This inefficiency is amplified during peak periods when multiple large files arrive simultaneously, creating a “thundering herd” effect that can overwhelm the orchestration layer itself. For engineering teams, this ceiling represents a hard limit on the viability of pure serverless models for batch-oriented financial tasks. The solution requires more than optimizing code; it requires rethinking how work is partitioned and which compute environment best suits the characteristics of the incoming data. Relying solely on short-lived functions for tasks that are inherently long-running is a recipe for systemic instability as business volume grows.
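
To make the missing piece concrete, the following is a minimal sketch of what granular checkpointing could look like. It illustrates the concept only and is not the pipeline's actual code: `process_with_checkpoint`, the `checkpoints` dictionary (standing in for a durable store), and the injectable `clock` are all assumptions introduced for this example.

```python
import time
from typing import Callable, Dict, List


def process_with_checkpoint(
    records: List[str],
    checkpoints: Dict[str, int],
    file_id: str,
    handle: Callable[[str], None],
    budget_seconds: float,
    checkpoint_every: int = 1000,
    clock: Callable[[], float] = time.monotonic,
) -> bool:
    """Process records from the last saved offset; return True once the file is done."""
    deadline = clock() + budget_seconds
    offset = checkpoints.get(file_id, 0)  # resume point; 0 on the first attempt
    while offset < len(records):
        if clock() >= deadline:
            # Out of time: persist progress so the retry does not start from zero.
            checkpoints[file_id] = offset
            return False
        handle(records[offset])
        offset += 1
        if offset % checkpoint_every == 0:
            checkpoints[file_id] = offset  # periodic checkpoint (hypothetical store)
    checkpoints[file_id] = offset
    return True
```

A retry that calls the function again with the same `file_id` continues from the saved offset. If the process crashed between checkpoints, up to `checkpoint_every` records would be handled twice, so `handle` still needs to be idempotent.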

Mitigating Hot Partitions in NoSQL: Beyond Natural Keys

The second critical failure point emerges within the data storage layer, specifically regarding the distribution of write traffic in NoSQL databases like DynamoDB. Many initial architectures rely on “natural” partition keys, such as a combination of a program identifier and a specific date, to organize reconciliation records. While this seems logical for querying, it creates a massive imbalance in how data is physically distributed across the database cluster. When a single large program generates a sudden burst of several hundred thousand transactions for a single date, every write request carries the same partition key and is therefore directed to the same physical partition. This results in “hot keys,” where the targeted partition reaches its throughput limit (in DynamoDB, about 1,000 write capacity units per second per partition) and begins throttling requests, even if the overall table has significant unused provisioned capacity. The system essentially becomes a victim of its own organizational structure, unable to leverage the horizontal scaling capabilities of the cloud.

This bottleneck is further exacerbated when secondary indexes are introduced to support the diverse query patterns needed for financial reporting and auditing. In DynamoDB, Global Secondary Indexes (GSIs) are updated asynchronously, but they consume their own write capacity, and an index that is under-provisioned or skewed toward a hot key throttles writes on the base table as well. If the index cannot distribute the incoming data as effectively as the primary table, it acts as a throttle that slows down the entire ingestion pipeline. Attempts to resolve this by simply increasing provisioned throughput often fail because the underlying issue is the uneven distribution of data rather than a lack of total resources. To achieve true scale, the architecture must move away from human-readable, natural partition keys in favor of a strategy that spreads data uniformly across all available physical storage resources. This transition is essential for maintaining the high-speed write performance required for modern financial systems.

Implementing Hybrid Compute and Strategic Sharding

Routing Workloads via Hybrid Execution: The Best of Both Worlds

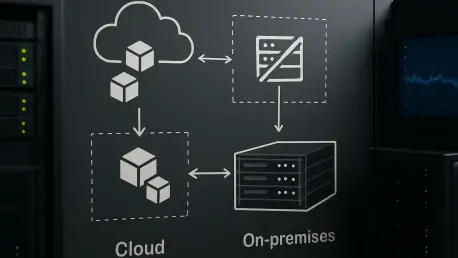

To bypass the runtime ceiling, a hybrid compute strategy uses a “Choice” state within the workflow orchestration to evaluate file metadata before processing begins. Small settlement files, which represent the majority of daily traffic, continue to be routed to serverless functions to take full advantage of their fast startup and granular cost-efficiency. However, when the system identifies a massive “outlier” file that exceeds a pre-defined record count threshold, it dynamically shifts the workload to a containerized environment, such as Amazon ECS on AWS Fargate. This containerized layer provides a persistent compute space without the fifteen-minute runtime restriction, allowing the system to perform continuous, heavy-duty parsing for hours if necessary. This dual-path approach ensures that the system remains responsive for standard tasks while retaining the endurance required to handle the most demanding batch processing jobs without interruption.
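
The routing decision itself is small. The sketch below shows the comparison a “Choice” state would express declaratively; the `FileMetadata` type, the function name, and the 250,000-record threshold are hypothetical values chosen for illustration, not figures from the pipeline described here.

```python
from dataclasses import dataclass

# Hypothetical cut-off; in practice it would be tuned from observed parse times.
LARGE_FILE_RECORD_THRESHOLD = 250_000


@dataclass(frozen=True)
class FileMetadata:
    key: str           # object key or path of the settlement file
    record_count: int  # taken from the file trailer or a pre-scan (assumed available)


def choose_compute_target(
    meta: FileMetadata, threshold: int = LARGE_FILE_RECORD_THRESHOLD
) -> str:
    """Return 'container' for outlier files and 'function' for everything else."""
    return "container" if meta.record_count > threshold else "function"
```

Keeping the threshold as configuration rather than code lets operators move the boundary as file sizes and function performance change.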

Maintaining this hybrid model effectively requires a high degree of code portability, often achieved by utilizing a single codebase, such as a Go-based microservice, that can operate seamlessly in both serverless and containerized environments. By packaging the business logic consistently, developers avoid the technical debt of maintaining separate implementations for different compute targets. Infrastructure as Code (IaC) templates are used to define the specific resource requirements for each path, allowing for precise tuning of CPU and memory for the containerized layer while keeping the serverless layer lean. This synergy allows the organization to optimize for both latency and throughput simultaneously. The hybrid model transforms the compute layer from a rigid constraint into a flexible asset that adjusts its behavior based on the specific characteristics of the data it is tasked to process, thereby ensuring that the reconciliation pipeline remains stable regardless of the incoming file size.
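
One way to picture the single-codebase idea is a shared core function with two thin entry points. The sketch below uses Python for illustration rather than the Go service mentioned above. The `reconcile` rules, the event shape with a `lines` field, and the stdin-based container entry point are all placeholders assumed for the example.

```python
import sys
from typing import Any, Dict, Iterable


def reconcile(lines: Iterable[str]) -> Dict[str, int]:
    """Shared business logic: a stand-in that counts processed and rejected records."""
    summary = {"processed": 0, "rejected": 0}
    for line in lines:
        if line.strip():
            summary["processed"] += 1
        else:
            summary["rejected"] += 1  # blank lines are treated as invalid records
    return summary


def lambda_handler(event: Dict[str, Any], context: Any) -> Dict[str, int]:
    """Serverless entry point: small files arrive inline in the event (assumed shape)."""
    return reconcile(event["lines"])


def container_main(stream: Iterable[str] = sys.stdin) -> Dict[str, int]:
    """Container entry point: large files are streamed line by line (stdin by default)."""
    return reconcile(line.rstrip("\n") for line in stream)
```

Because both entry points delegate to `reconcile`, a rule change is made once and behaves identically on either path.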

Solving Data Skew with Write Sharding: Achieving Horizontal Scale

The resolution of database bottlenecks is achieved through deterministic write sharding, a technique that appends a calculated shard number to the partition key. Instead of using a static key like “ProgramID#Date,” the system calculates a hash of a unique transaction identifier and applies a modulo operation to assign it to one of many available shards. For example, by spreading data across sixty-four virtual shards, the system ensures that even a massive influx of data for a single program is spread across sixty-four partition key values, which the database can place on different physical partitions. This removes the single-key hot spot, as no one partition key receives the entire load of a specific batch. The database can then use far more of its horizontal scaling potential, with ingestion throughput growing roughly in proportion to the number of shards, up to the table's overall capacity.
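
A minimal sketch of the key calculation follows. The function names and the `PROGRAM#DATE#SHARD` key layout are illustrative assumptions; the text specifies only a hash of the transaction identifier, a modulo operation, and sixty-four shards.

```python
import hashlib

SHARD_COUNT = 64  # matches the sixty-four shard example in the text


def shard_for(transaction_id: str, shard_count: int = SHARD_COUNT) -> int:
    """Map a transaction ID to a stable shard number in [0, shard_count)."""
    digest = hashlib.sha256(transaction_id.encode("utf-8")).digest()
    # Take the first 8 bytes as an unsigned integer, then apply the modulo.
    return int.from_bytes(digest[:8], "big") % shard_count


def sharded_partition_key(program_id: str, date: str, transaction_id: str) -> str:
    """Build a key of the form PROGRAM#DATE#NN (layout is illustrative)."""
    return f"{program_id}#{date}#{shard_for(transaction_id):02d}"
```

A cryptographic digest is used here instead of Python's built-in `hash()`, because the built-in string hash is randomized per process and would send the same transaction to different shards on different workers.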

Implementing this sharding logic requires a careful balance between write performance and read complexity, as the data is now intentionally fragmented to protect the stability of the ingestion path. The shard count is typically determined by comparing the maximum expected peak write rate with the per-partition throughput limits enforced by the database service, plus some headroom. By moving to a synthetic, sharded partition key, the architecture gains the resilience needed to absorb unpredictable spikes in transaction volume with far less risk of throttling. This strategic shift moves the database from being a potential bottleneck to being a highly performant, distributed store for reconciliation records. The use of deterministic hashing ensures that the same transaction always maps to the same shard, so retries and lookups by transaction identifier land on the same partition key, which is critical for maintaining data integrity in a financial context. This approach represents a mature evolution in NoSQL data modeling, where the physical characteristics of the platform are prioritized to ensure reliability at enterprise scale.
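
The sizing reasoning can be sketched as simple arithmetic. The example assumes DynamoDB's documented limit of 1,000 write capacity units per second per partition and one write unit per 1 KB of item size. The peak rates, item size, and headroom factor are made-up inputs, and the function name is hypothetical.

```python
import math

PER_PARTITION_WCU = 1000  # DynamoDB's per-partition write limit, per second


def required_shards(
    peak_writes_per_sec: float, item_size_kb: float, headroom: float = 2.0
) -> int:
    """Estimate how many shards keep each partition key under the write limit."""
    wcu_per_write = max(1, math.ceil(item_size_kb))  # one WCU covers up to 1 KB
    peak_wcu = peak_writes_per_sec * wcu_per_write
    return max(1, math.ceil(peak_wcu * headroom / PER_PARTITION_WCU))


# Illustrative inputs only: 8,000 writes/s of 1.5 KB items.
print(required_shards(8000, 1.5))                # 2x headroom
print(required_shards(8000, 1.5, headroom=4.0))  # more conservative
```

This is a lower bound rather than a guarantee: the database decides how partition key values map to physical partitions, and writes to secondary indexes consume capacity of their own.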

Optimizing Data Retrieval and Operational Resilience

Managing the Read Path with Aggregates: The Map-Reduce Solution

While sharding successfully resolves write-side bottlenecks, it introduces a “scatter-gather” challenge for data retrieval, as a single query for a day’s reconciliation now requires querying every shard and merging the results. To maintain performance and control costs, the architecture adopts a localized aggregation strategy modeled after the traditional map-reduce paradigm. Instead of pulling millions of raw records into a central process to calculate totals, the system uses worker functions that summarize each shard independently. These workers calculate specific metrics, such as transaction counts, total currency amounts, and error buckets, and store these small “shard summaries” back in the database. This decentralized approach ensures that the vast majority of the heavy lifting is performed in parallel, drastically reducing the time required to generate a comprehensive view of the day’s financial activity.
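
The per-shard “map” step might look like the sketch below. The record fields `amount` and `error_code`, and the `ShardSummary` shape, are assumptions made for illustration; the text names only counts, currency totals, and error buckets.

```python
from dataclasses import dataclass, field
from decimal import Decimal
from typing import Dict, Iterable, Mapping


@dataclass
class ShardSummary:
    shard: int
    count: int = 0
    total: Decimal = Decimal("0")
    errors: Dict[str, int] = field(default_factory=dict)  # error code -> occurrences


def summarize_shard(shard: int, records: Iterable[Mapping[str, str]]) -> ShardSummary:
    """Reduce one shard's raw records to a small summary item."""
    summary = ShardSummary(shard=shard)
    for record in records:
        summary.count += 1
        summary.total += Decimal(record["amount"])  # amounts arrive as strings
        code = record.get("error_code")
        if code:
            summary.errors[code] = summary.errors.get(code, 0) + 1
    return summary
```

Amounts are accumulated as `Decimal` rather than floating point so that currency totals do not pick up binary rounding error.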

Once the localized shard summaries are complete, a final “reducer” task in the orchestration workflow gathers these small datasets and combines them into a single, global daily rollup. This strategy significantly reduces the amount of data transferred over the network and minimizes the read capacity units consumed during the reconciliation process. By shifting from a model that queries raw data to one that queries pre-calculated aggregates, the system achieves a massive reduction in latency, allowing financial controllers to access reconciliation reports in seconds rather than minutes. This optimization is particularly valuable during month-end closing periods when the demand for accurate, up-to-date financial data is at its highest. The aggregate-based read path demonstrates that a well-designed sharding strategy, when paired with intelligent summarization, can provide the best of both worlds: high-speed data ingestion and efficient, low-latency reporting for complex financial datasets.
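
A reducer along these lines could combine the summaries. This is a sketch under assumptions: the summary fields (`shard`, `count`, `total`, `errors`) and the completeness check are illustrative choices, not details given in the text.

```python
from decimal import Decimal
from typing import Any, Dict, Iterable, Mapping


def reduce_summaries(
    summaries: Iterable[Mapping[str, Any]], expected_shards: int
) -> Dict[str, Any]:
    """Combine per-shard summaries into one daily rollup, refusing partial input."""
    rollup: Dict[str, Any] = {"count": 0, "total": Decimal("0"), "errors": {}}
    seen_shards = set()
    for s in summaries:
        if s["shard"] in seen_shards:
            raise ValueError(f"duplicate summary for shard {s['shard']}")
        seen_shards.add(s["shard"])
        rollup["count"] += s["count"]
        rollup["total"] += Decimal(str(s["total"]))
        for code, n in s.get("errors", {}).items():
            rollup["errors"][code] = rollup["errors"].get(code, 0) + n
    missing = set(range(expected_shards)) - seen_shards
    if missing:
        # A rollup built from partial data would silently understate the day's totals.
        raise ValueError(f"missing shard summaries: {sorted(missing)}")
    return rollup
```

Failing loudly on a missing or duplicated shard is a deliberate choice here: in a financial report, an incomplete total that looks valid is worse than a delayed one.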

Ensuring Integrity Through Idempotency and Monitoring: Practical Next Steps

Building a resilient reconciliation pipeline requires more than architectural changes; it demands rigorous operational standards that protect data integrity above all else. Applying exponential backoff with jitter to all internal service communications prevents synchronized retry surges from overwhelming the infrastructure during intermittent failures. Strict idempotency keys ensure that any operation can be safely retried without duplicating financial entries or corrupting the ledger state. Conditional writes at the database level support this by acting as a final safeguard against race conditions. For teams looking to implement similar systems, the first step is to audit all existing workflows for “at-least-once” delivery semantics and ensure that idempotency is built into the core logic rather than being treated as an afterthought.
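
Both safeguards can be sketched in a few lines. The backoff function uses the “full jitter” variant, which is one common choice and not necessarily the one used here. `LedgerStore` is an in-memory stand-in invented for the example; against DynamoDB the equivalent guard would be a put with a condition such as `attribute_not_exists` on the key, evaluated atomically by the database.

```python
import random
from typing import Dict, Optional


def backoff_delay(
    attempt: int,
    base: float = 0.1,
    cap: float = 20.0,
    rng: Optional[random.Random] = None,
) -> float:
    """Full-jitter backoff: a random delay in [0, min(cap, base * 2**attempt)] seconds."""
    if rng is None:
        rng = random.Random()
    return rng.uniform(0.0, min(cap, base * (2 ** attempt)))


class LedgerStore:
    """In-memory stand-in for a table that supports conditional writes."""

    def __init__(self) -> None:
        self._items: Dict[str, dict] = {}

    def put_if_absent(self, idempotency_key: str, entry: dict) -> bool:
        """Write only when the key is new; return False for a duplicate delivery."""
        if idempotency_key in self._items:
            return False
        self._items[idempotency_key] = entry
        return True

    def __len__(self) -> int:
        return len(self._items)
```

An idempotency key is typically derived from stable inputs, for example a file identifier plus a transaction identifier, so that a retried message produces the same key as the original attempt.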

Looking forward, the success of these high-volume systems depends on monitoring that goes beyond basic success and failure metrics. Tracking “shard skew” and index latency in real time identifies emerging hot spots before they cause systemic throttling. Monitoring the depth of Dead Letter Queues (DLQs) helps distinguish between transient network errors and logic-based data quality issues. Organizations embarking on this journey should prioritize the development of custom observability dashboards that visualize the flow of data through the hybrid layers. By maintaining a single codebase and using automated testing to simulate high-volume sharding scenarios, teams can keep the system adaptable to future growth. These practical measures transform a fragile serverless experiment into a robust, enterprise-grade financial engine capable of supporting the next generation of global payment processing.
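
“Shard skew” has no single standard definition. One simple formulation, assumed here for illustration, is the ratio of the busiest shard's write count to the mean across all shards.

```python
from typing import Mapping


def shard_skew(writes_per_shard: Mapping[int, int], shard_count: int) -> float:
    """Ratio of the busiest shard to the mean; 1.0 means perfectly even."""
    total = sum(writes_per_shard.values())
    if total == 0:
        return 1.0  # no traffic, so nothing is skewed
    # Divide by the configured shard count so idle shards count as zero.
    mean = total / shard_count
    return max(writes_per_shard.values()) / mean
```

A dashboard could plot this value per time window and alert when it stays above a chosen threshold; the threshold is a tuning decision and is not something the text specifies.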