The relentless expansion of generative artificial intelligence has fundamentally altered the landscape of American infrastructure, shifting the primary industrial challenge from the production of silicon microchips to the procurement of massive quantities of reliable electrical energy. This pivot marks a historic transition in how the United States manages its power resources, as the digital economy no longer merely rests on top of the physical grid but actively competes with traditional industries and residential needs for every available megawatt. As specialized chips consume more power than ever before, the quest for sustained compute capacity has become an era-defining struggle for utility providers and tech giants alike.

The Surging Energy Requirements of Artificial Intelligence

Quantitative Forecasts: A Massive Shift in Power Use

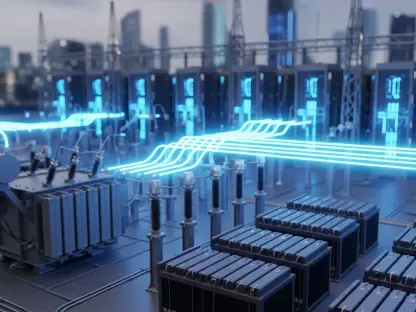

A profound transformation is under way in the domestic energy landscape: the share of electricity consumed by data centers is projected to rise from a historical baseline of 4% to as much as 17% by 2030. This is more than a simple increase in volume; it is a structural upheaval expected to push data center electricity consumption from 192 terawatt-hours (TWh) to as much as 790 TWh within the next four years. Growth that planners once expected to unfold over decades is now being compressed into a much narrower window, forcing a radical reassessment of how the nation generates and distributes power.
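The projection above implies a steep compound growth rate. The short Python sketch below makes that arithmetic explicit. It assumes the four-year horizon stated in the text and treats 192 TWh and 790 TWh as the start and end points. It then computes the compound annual growth rate (CAGR) and compares it with the constant yearly increase a linear path would need.

```python
# Implied growth behind the data center projection.
# Inputs are the article's figures; the 4-year horizon is taken from the text.

def cagr(start: float, end: float, years: float) -> float:
    """Compound annual growth rate linking two values over `years`."""
    return (end / start) ** (1.0 / years) - 1.0


def linear_step(start: float, end: float, years: float) -> float:
    """Constant absolute increase per year linking the same two values."""
    return (end - start) / years


START_TWH = 192.0  # baseline data center consumption (from the text)
END_TWH = 790.0    # high-end projection (from the text)
YEARS = 4

rate = cagr(START_TWH, END_TWH, YEARS)
step = linear_step(START_TWH, END_TWH, YEARS)

print(f"Implied compound growth: {rate:.1%} per year")
print(f"Equivalent linear increase: {step:.1f} TWh per year")

# Year-by-year path under constant compound growth
for year in range(YEARS + 1):
    print(year, round(START_TWH * (1 + rate) ** year, 1))
```

With these inputs the compound rate works out to roughly 42% per year, compared with a linear increase of about 150 TWh per year. The year-by-year path shows the absolute increments growing each year, which is what separates exponential growth from linear growth.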

To understand the scale of this consumption, consider the individual footprint of modern AI facilities, which now rival the energy needs of mid-sized American cities. A single large-scale cluster can require enough electricity to power hundreds of thousands of homes, creating a localized demand shock that few regional grids were designed to handle. Analysts use a range of growth scenarios to navigate this uncertainty, from low-growth models that assume current constraints will slow deployment to high-growth outlooks in which nearly every proposed project is completed. Across these scenarios, the baseline expectation is that electricity demand for computation will keep growing exponentially rather than linearly.
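The "hundreds of thousands of homes" comparison can be checked with simple arithmetic. The sketch below uses hypothetical facility sizes of 300 MW, 500 MW, and 1,000 MW running at an assumed 90% average utilization. It also assumes an average household consumption of 10,500 kWh per year, which is roughly the U.S. residential average; substitute a regional figure if one is available. None of these inputs come from the article.

```python
# Homes-equivalent of a data center's annual consumption.
# ASSUMPTIONS (not from the article): facility sizes, 90% utilization,
# and 10,500 kWh/year per household.

HOURS_PER_YEAR = 8760


def annual_energy_kwh(facility_mw: float, load_factor: float) -> float:
    """Annual consumption (kWh) of a facility drawing `facility_mw`
    at the given average utilization (0..1)."""
    return facility_mw * 1_000 * HOURS_PER_YEAR * load_factor


def homes_equivalent(facility_mw: float, load_factor: float,
                     household_kwh_per_year: float) -> float:
    """Number of average households with the same annual consumption."""
    return annual_energy_kwh(facility_mw, load_factor) / household_kwh_per_year


ASSUMED_LOAD_FACTOR = 0.9
ASSUMED_HOUSEHOLD_KWH = 10_500

for mw in (300, 500, 1_000):  # hypothetical cluster sizes
    homes = homes_equivalent(mw, ASSUMED_LOAD_FACTOR, ASSUMED_HOUSEHOLD_KWH)
    print(f"{mw:>5} MW cluster ~ {homes:,.0f} homes")
```

Under these assumptions the three clusters correspond to roughly 225,000, 375,000, and 750,000 homes, respectively.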

Real-World Applications: The Geographical Migration of Compute

What experts call the “AI Wildcard” stems largely from the extreme compute intensity of generative AI and real-time video processing, which often outweighs any efficiency gains made in hardware design. While newer chips are more efficient per calculation, the sheer volume of calculations required by modern large language models produces a net increase in total energy consumption. This dynamic has placed immense strain on traditional tech hubs, particularly Northern Virginia, where data centers are so densely concentrated that they may soon account for 60% of the entire state’s electrical load.
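The claim that efficiency gains are swamped by volume follows from a simple identity: total energy equals energy per operation multiplied by the number of operations. The sketch below uses hypothetical multipliers, which are not figures from the article, to show that total consumption rises whenever workload growth outpaces per-operation efficiency gains.

```python
# Efficiency vs. volume: total energy = energy per operation x operations.
# The 2x efficiency gain and 5x workload growth are HYPOTHETICAL values.

def total_energy(energy_per_op: float, operations: float) -> float:
    """Total energy is energy per operation times the number of operations."""
    return energy_per_op * operations


def net_change(efficiency_gain: float, workload_growth: float) -> float:
    """Ratio of new to old total energy.

    efficiency_gain: factor by which energy per operation falls
                     (2.0 means each operation needs half the energy).
    workload_growth: factor by which the number of operations rises.
    """
    if efficiency_gain <= 0:
        raise ValueError("efficiency_gain must be positive")
    return workload_growth / efficiency_gain


# Hypothetical scenario: new chips halve the energy per operation,
# but workloads perform five times as many operations.
old = total_energy(energy_per_op=1.0, operations=1.0)
new = total_energy(energy_per_op=1.0 / 2.0, operations=5.0)

print(f"Total energy changes by a factor of {new / old:.1f}")
print(f"Same result via net_change: {net_change(2.0, 5.0):.1f}")
```

Total energy falls only when the efficiency factor exceeds the workload growth factor; in this hypothetical case consumption still rises 2.5-fold despite the more efficient hardware.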

Consequently, the industry is witnessing a significant migration toward emerging hubs in the Midwest and the Mountain West, where land is abundant and permitting processes are often more streamlined. States like Arizona, Indiana, and Nebraska are becoming the new frontier for hyperscalers such as Google, Microsoft, and Meta, as they seek to diversify their physical footprints and find untapped pockets of grid capacity. These companies are no longer just building warehouses for servers; they are becoming major participants in grid planning, often negotiating directly with utilities to fund the construction of the very substations and transmission lines required to keep their hardware humming.

Industry Expert Perspectives on Infrastructure Strain

Insights from the Electric Power Research Institute suggest that the current expansion is creating a profound timeline mismatch between the tech and energy sectors. While a tech company can stand up a new data center in under two years, the utility infrastructure required to support it—such as high-voltage transmission lines or new baseload power plants—often takes a decade or longer to plan and build. This lag creates a bottleneck that threatens to stall the momentum of AI development unless radical new approaches to grid integration are adopted immediately.

Industry leaders have responded to these delays by increasingly adopting a “Bring Your Own Generation” strategy, in which data centers function as self-contained energy islands. By installing onsite natural gas turbines, large-scale battery storage, or massive solar arrays, developers aim to bypass the slow grid interconnection process. There is also a growing push, through initiatives such as DCFlex, to turn data centers from passive energy consumers into active, flexible grid assets. These programs aim to let data centers modulate their power use in real time, shedding load during peak periods to support local grid reliability and help prevent rolling blackouts for the general public.
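The load-shedding idea behind flexibility programs can be illustrated with a simple greedy curtailment planner. This is a hypothetical sketch, not DCFlex's actual mechanism. It assumes that the grid operator sends a requested reduction in megawatts, that each workload is tagged as deferrable or not, and that deferrable jobs carry a priority. The `Workload` type, the `plan_curtailment` function, and all workload figures are illustrative inventions.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    # HYPOTHETICAL description of a data center workload.
    name: str
    power_mw: float
    deferrable: bool  # True if the job can pause (e.g. batch training)
    priority: int     # lower value = shed earlier


def plan_curtailment(workloads: list[Workload],
                     requested_mw: float) -> tuple[list[str], float]:
    """Greedily pause deferrable workloads, lowest priority value first,
    until at least `requested_mw` has been shed or no candidates remain.

    Returns the names of paused workloads and the total megawatts shed.
    Non-deferrable workloads (e.g. latency-sensitive inference) are never paused.
    """
    candidates = sorted(
        (w for w in workloads if w.deferrable), key=lambda w: w.priority
    )
    paused: list[str] = []
    shed_mw = 0.0
    for w in candidates:
        if shed_mw >= requested_mw:
            break
        paused.append(w.name)
        shed_mw += w.power_mw
    return paused, shed_mw


# Illustrative fleet; all names and power figures are made up.
fleet = [
    Workload("inference", 120.0, deferrable=False, priority=0),
    Workload("training-A", 80.0, deferrable=True, priority=2),
    Workload("batch-eval", 30.0, deferrable=True, priority=1),
    Workload("training-B", 60.0, deferrable=True, priority=3),
]

paused, shed = plan_curtailment(fleet, requested_mw=100.0)
print(paused, shed)
```

For a 100 MW request, the planner pauses `batch-eval` and then `training-A`, shedding 110 MW while leaving the 120 MW `inference` service untouched. Greedy selection is easy to audit but may overshoot the request. If the deferrable pool is smaller than the request, the returned total falls short, and the operator would need to fall back on other measures such as onsite generation or battery storage.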

Future Outlook: Opportunities and Systemic Challenges

The evolving energy mix faces a significant tension between the immediate need for reliable power and long-term carbon-free objectives. In the short term, the surge in demand is likely to extend the operational life of natural gas plants, as they provide the dispatchable “firm” power that data centers require to run around the clock. However, the long-term vision of hyperscalers remains tethered to sustainability, sparking a massive wave of investment in advanced nuclear technologies. Small Modular Reactors are gaining traction as a potential solution, offering the promise of high-density, carbon-free power that can be deployed closer to the data centers themselves.

Looking ahead, energy has effectively replaced silicon as the primary constraint in the global race for technological supremacy. The ability to secure permits for large-scale power and reliable electricity delivery is now the most critical factor in determining where the next generation of AI will be built. This shift carries broader societal implications: competition for power could drive up electricity costs for residential consumers or destabilize the grid if infrastructure modernization fails to keep pace. The coming years will likely be defined by a delicate balance between fostering innovation and keeping the electrical foundation of society both affordable and resilient.

Summary and Strategic Imperatives

The nexus between artificial intelligence and the electrical grid has become a defining pillar of national economic strategy in this period of rapid expansion. The availability of megawatts, rather than the sophistication of software alone, now dictates the speed of technological progress and the geography of industrial growth. This reality demands a new level of cooperation among regulators, utility providers, and technology developers, who must align their disparate timelines to keep the digital economy from stalling.

The transition toward flexible, integrated data center operations appears to be the only viable path for sustaining the massive energy requirements of the AI era. Authorities are moving toward a model in which large-scale compute facilities are treated as essential infrastructure, requiring dedicated energy planning and innovative supply solutions such as advanced nuclear power. Ultimately, how well this power demand is managed will determine which regions thrive as leaders of the new economy and which face energy scarcity and infrastructure obsolescence.