The collapse of a major financial transaction system or a sudden blackout in a global logistics hub often traces back to a single, preventable failure in a legacy network that was never designed for the sheer volume of today’s data-heavy environment. As of 2026, the digital backbone of most enterprises is under unprecedented strain, caught between the soaring demands of generative artificial intelligence and the increasingly sophisticated tactics of cybercriminals who exploit every microsecond of latency. Organizations that continue to rely on manual configuration and reactive troubleshooting are finding themselves at a severe competitive disadvantage, unable to provide the seamless, high-speed connectivity that modern applications require. The shift toward an AI-native network architecture has moved from a visionary concept to a survival requirement, offering a self-healing and predictive environment that can adapt to shifting workloads without constant human intervention. By integrating intelligence directly into the fabric of the hardware and software, businesses are finally able to close the gap between their ambitious digital goals and the physical realities of data transmission.

The Financial Risks and Infrastructure Gaps

Addressing Economic Realities: The High Cost of Stagnation

The price of maintaining the status quo in networking has reached a breaking point where the operational risks far outweigh the costs of comprehensive modernization. In the current landscape of 2026, a single cyber incident can easily drain $3.7 million from an organization’s reserves, but the invisible drain of unplanned downtime is even more insidious for daily operations. Industry data indicates that network interruptions now cost enterprises approximately $9,000 for every minute of service loss, which works out to roughly $540,000 for a one-hour outage. This financial reality has fundamentally transformed how chief information officers view their infrastructure; it is no longer just a utility but a critical insurance policy against bankruptcy. For companies operating on thin margins, a few hours of connectivity failure can erase an entire quarter of profit, making the investment in a resilient, AI-native network a purely pragmatic decision based on fiscal preservation and long-term viability.
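To make the downtime arithmetic concrete, the short sketch below multiplies the per-minute figure quoted above by the length of an outage. Only the $9,000 per minute rate comes from the text; the function name and the sample durations are illustrative assumptions, and real losses will vary by business.

```python
COST_PER_MINUTE_USD = 9_000  # per-minute figure quoted in the article


def downtime_cost(minutes: float, cost_per_minute: float = COST_PER_MINUTE_USD) -> float:
    """Estimate the cost of an outage lasting `minutes` (hypothetical helper)."""
    if minutes < 0:
        raise ValueError("minutes must be non-negative")
    return minutes * cost_per_minute


# Illustrative durations, chosen only to show how quickly the total grows.
for label, minutes in [("15 minutes", 15), ("1 hour", 60), ("4 hours", 240)]:
    print(f"{label}: ${downtime_cost(minutes):,.0f}")
```

A linear model like this is a floor rather than a forecast, since it ignores knock-on effects such as lost customers or regulatory penalties.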

Furthermore, the return on investment for upgrading to an AI-native infrastructure is no longer measured solely by the absence of failure but by the dramatic increase in operational efficiency. Traditional networks require massive teams of engineers to manually manage tickets, configure ports, and hunt down the root cause of intermittent slowdowns that frustrate users. In contrast, modern systems use predictive analytics to resolve issues before they impact the end-user experience, effectively reclaiming thousands of engineering hours that were previously spent on routine maintenance. In 2026, the gap between the “leaders” who have modernized and the “laggards” who have not is widening into a chasm in terms of market valuation. Investors are increasingly looking at technical debt as a liability on the balance sheet, recognizing that a “Franken-network” of patched-together legacy parts represents a systemic risk that could lead to a catastrophic data breach or a total operational standstill at any given moment.

Legacy Limitations: Solving the Infrastructure Mismatch

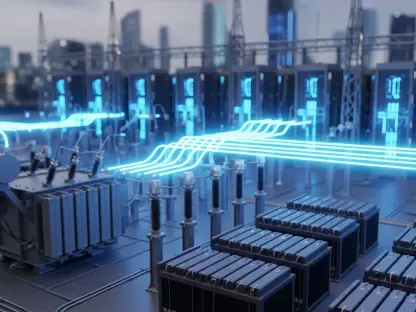

A significant portion of the corporate world is currently attempting to run high-performance, AI-driven software on what can only be described as the digital equivalent of a dirt road. This mismatch between modern application requirements and aging physical hardware creates a bottleneck that stifles innovation and prevents companies from realizing the full potential of their cloud investments. These legacy systems, often built through years of incremental upgrades and corporate mergers, lack the architectural flexibility needed to handle the massive, erratic data bursts characteristic of modern workloads. Without a unified view of the entire network, IT departments are essentially flying blind, unable to see how traffic flows across distributed environments or where security vulnerabilities might be hiding. The result is a fragile ecosystem where a single update in one department can cause unforeseen ripple effects across the entire global organization.

This “Franken-network” problem is particularly acute when businesses try to deploy edge computing or real-time processing tools that demand ultra-low latency. Because traditional networks were designed for a centralized, hub-and-spoke model, they struggle to support the decentralized nature of modern work where data is generated and consumed everywhere simultaneously. The lack of a cohesive, intelligent foundation means that data often takes inefficient paths, increasing the likelihood of packets being dropped or delayed. To overcome these hurdles, enterprises must transition to a simplified, software-defined architecture that treats the network as a single, programmable entity rather than a collection of disparate boxes. This shift allows for the creation of a dynamic environment where bandwidth can be reallocated instantly based on business priority, ensuring that critical applications always have the resources they need to function at peak performance levels.
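One simple way to picture bandwidth being reallocated by business priority is a strict-priority allocator: the most critical applications are served first and lower tiers share whatever is left. The sketch below is a minimal illustration under that assumption; the class, the application names, and the numbers are hypothetical, and production controllers typically use richer policies such as weighted fairness.

```python
from dataclasses import dataclass


@dataclass
class AppDemand:
    name: str
    priority: int        # lower number = more critical (assumed convention)
    demand_mbps: float


def allocate_bandwidth(capacity_mbps: float, demands: list[AppDemand]) -> dict[str, float]:
    """Strict-priority allocation: serve the most critical apps first."""
    remaining = capacity_mbps
    allocation: dict[str, float] = {}
    for app in sorted(demands, key=lambda a: a.priority):
        granted = min(app.demand_mbps, remaining)  # never exceed what is left
        allocation[app.name] = granted
        remaining -= granted
    return allocation


demands = [
    AppDemand("backup", priority=3, demand_mbps=600),
    AppDemand("payments", priority=1, demand_mbps=300),
    AppDemand("video", priority=2, demand_mbps=400),
]
plan = allocate_bandwidth(1000, demands)
print(plan)  # payments and video are fully served; backup gets the remainder
```

Strict priority can starve low-priority traffic entirely, which is why it is shown here only as the simplest instance of the idea.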

Advanced Capabilities and Evolving Threats

Transitioning to Autonomous Defense: Proactive Security Measures

The evolution of enterprise networking is moving decisively away from simple automation and toward true autonomy, where the system itself possesses the intelligence to make real-time decisions. In the past, automation was limited to executing pre-defined scripts for repetitive tasks, but 2026 marks the era of the self-operating network that can detect, analyze, and remediate faults without human oversight. This shift is critical because the speed of modern business has surpassed the speed at which humans can type commands or respond to alerts. An AI-native network monitors millions of telemetry points simultaneously, identifying patterns of behavior that indicate a failing component or a misconfigured protocol. By the time an engineer would have normally been notified of an issue, the autonomous system has already redirected traffic to a healthy path and initiated a self-repair sequence, maintaining uptime and protecting the user experience.
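The detect-and-reroute loop described above can be reduced to two steps: flag a path whose latest telemetry deviates sharply from its own history, then steer traffic to a path that still looks normal. The sketch below uses a basic z-score test on latency samples. The threshold, path names, and sample values are assumptions for illustration, and real systems would rely on far richer models and many more signals.

```python
from statistics import mean, stdev
from typing import Optional


def is_anomalous(history: list[float], latest: float, z_threshold: float = 3.0) -> bool:
    """Flag `latest` if it is more than `z_threshold` standard deviations above the mean."""
    if len(history) < 2:
        return False  # not enough data to judge
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return latest > mu
    return (latest - mu) / sigma > z_threshold


def choose_path(paths: dict[str, tuple[list[float], float]]) -> Optional[str]:
    """Return the first path whose latest latency looks normal, or None if all are degraded."""
    for name, (history, latest) in paths.items():
        if not is_anomalous(history, latest):
            return name
    return None


# Hypothetical latency samples in milliseconds: (recent history, latest reading).
paths = {
    "primary": ([10.0, 11.0, 9.0, 10.0, 10.0], 48.0),
    "backup": ([14.0, 15.0, 13.0, 14.0, 14.0], 14.5),
}
print(choose_path(paths))  # the primary path spikes, so traffic moves to "backup"
```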

One of the most powerful manifestations of this autonomy is dynamic network segmentation, which has revolutionized how sensitive data is protected within the enterprise. Historically, creating secure boundaries between different parts of a network was a labor-intensive process that took weeks of manual effort and was prone to human error. Today, AI-driven systems analyze user behavior and threat signals in real time, allowing security perimeters to adapt instantly to the current risk environment. If a particular device begins to exhibit suspicious activity, the network can automatically isolate it from the rest of the infrastructure, preventing the lateral movement of a potential threat. This “moving target defense” makes it significantly harder for attackers to find a foothold, as the internal architecture of the network is constantly shifting to minimize the attack surface while ensuring that legitimate business processes continue to run.
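The isolation behavior described above can be sketched as a small policy object: each device carries a risk score, and crossing a threshold moves it into a quarantine segment that cannot reach anything else. The class, segment names, scores, and threshold below are all hypothetical; how a real platform computes risk and enforces segments is outside the scope of this illustration.

```python
from dataclasses import dataclass, field

QUARANTINE = "quarantine"  # hypothetical segment name


@dataclass
class Segmenter:
    risk_threshold: float = 0.8  # assumed cut-off for isolation
    segments: dict[str, str] = field(default_factory=dict)  # device id -> segment

    def update(self, device_id: str, risk_score: float, default_segment: str = "corporate") -> str:
        """Quarantine the device when its risk score crosses the threshold."""
        if risk_score >= self.risk_threshold:
            self.segments[device_id] = QUARANTINE
        else:
            # A low score never releases a quarantined device automatically.
            self.segments.setdefault(device_id, default_segment)
        return self.segments[device_id]

    def can_talk(self, a: str, b: str) -> bool:
        """Allow traffic only between devices in the same non-quarantine segment."""
        seg_a, seg_b = self.segments.get(a), self.segments.get(b)
        return seg_a is not None and seg_a == seg_b and seg_a != QUARANTINE


seg = Segmenter()
seg.update("laptop-1", 0.10)
seg.update("printer-7", 0.20)
before = seg.can_talk("laptop-1", "printer-7")   # both in "corporate"
seg.update("printer-7", 0.95)                    # suspicious activity detected
after = seg.can_talk("laptop-1", "printer-7")    # lateral movement blocked
print(before, after)
```

Keeping release from quarantine as an explicit, separate action is a deliberate choice in this sketch, so that a briefly quiet attacker is not readmitted automatically.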

Quantum Readiness: Defending Against Emerging Digital Frontiers

As enterprises deploy increasingly powerful agentic AI models, they are inadvertently opening new doors for sophisticated cyberattacks that legacy security frameworks are simply not equipped to handle. These autonomous AI agents, while highly productive, can be manipulated through techniques like prompt injection or data poisoning, where malicious actors corrupt the underlying logic of the AI to steal proprietary information. A modern network must be able to recognize the subtle signs of these attacks, monitoring the interaction between AI agents and the data they access to ensure that security protocols are never bypassed. This requires a level of deep inspection and behavioral analysis that is only possible with a native AI core that understands the context of the traffic it is carrying, rather than just looking at the source and destination of data packets.

Furthermore, the industry is now forced to confront the looming threat of the “Quantum Shadow,” a term used to describe the risk posed by future quantum computers to current encryption standards. Cybercriminals are already engaged in “harvest now, decrypt later” strategies, stealing encrypted enterprise data today with the intention of unlocking it once quantum tools become more widely available over the next several years. To counter this, forward-thinking organizations are prioritizing “crypto-agility,” which is the ability of a network to update its encryption algorithms rapidly as new standards emerge. An AI-native infrastructure allows for the seamless deployment of quantum-resistant cryptography across the entire environment without requiring a total hardware overhaul. This ensures that long-term data assets remain secure against future technological leaps, providing a level of future-proofing that is essential for maintaining trust.
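Crypto-agility is largely a design pattern: callers name an algorithm through configuration instead of hard-coding it, and every protected item records which algorithm produced it so that older data stays verifiable after a switch. The sketch below illustrates the pattern with two HMAC variants from the Python standard library. These are placeholders chosen so the example runs anywhere; neither is presented here as a quantum-resistant scheme, and the registry, labels, and function names are assumptions.

```python
import hashlib
import hmac

# Registry of available algorithms; adding a new one is a one-line change.
ALGORITHMS = {
    "hmac-sha256": hashlib.sha256,
    "hmac-sha3-256": hashlib.sha3_256,
}


def sign(key: bytes, message: bytes, alg: str) -> str:
    """Return a tag prefixed with the algorithm label that produced it."""
    digest = hmac.new(key, message, ALGORITHMS[alg]).hexdigest()
    return f"{alg}:{digest}"


def verify(key: bytes, message: bytes, tag: str) -> bool:
    """Verify a tag using whichever registered algorithm its label names."""
    alg, _, digest = tag.partition(":")
    if alg not in ALGORITHMS:
        return False  # unknown or retired algorithm
    expected = hmac.new(key, message, ALGORITHMS[alg]).hexdigest()
    return hmac.compare_digest(expected, digest)


key, msg = b"demo-key", b"quarterly-report"
old_tag = sign(key, msg, "hmac-sha256")      # data protected before the switch
new_tag = sign(key, msg, "hmac-sha3-256")    # data protected after the switch
print(verify(key, msg, old_tag), verify(key, msg, new_tag))
```

Because the label travels with the data, retiring an algorithm becomes a matter of removing it from the registry and re-protecting whatever still carries the old label.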

Architectural Foundations for the Future

Leveraging Observability: Deep Telemetry for Precise Management

The transition from traditional monitoring to deep observability represents a fundamental shift in how IT teams maintain the health and performance of their digital environments. While standard monitoring tools can tell an administrator that a specific link is down or a server is overloaded, observability provides the context needed to understand exactly why the failure occurred in the first place. By collecting and analyzing massive amounts of telemetry data from every corner of the network, AI-native systems can create a digital twin of the infrastructure, allowing engineers to run simulations and predict the impact of changes before they are implemented. This level of insight is crucial for validating the performance of AI workloads, which are often unpredictable and can create unusual traffic patterns that would baffle conventional management tools.

In the complex, hybrid-cloud world of 2026, observability acts as the “source of truth” that bridges the gap between on-premises hardware and remote cloud services. It allows for the tracking of a single data packet as it travels across various service providers, private data centers, and edge locations, identifying exactly where latency is being introduced or where security policies are being ignored. This end-to-end visibility eliminates the “blame game” between different technology vendors and internal departments, as the data clearly points to the source of any bottleneck. For the business, this means faster resolution times and a more consistent experience for employees and customers alike. By turning raw data into actionable intelligence, observability empowers leadership to make informed decisions about capacity planning and technology investments based on hard evidence rather than mere intuition.
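Pinpointing where latency is introduced along a path comes down to comparing timestamps between consecutive hops. The sketch below assumes a trace is available as an ordered list of hop names with arrival times in milliseconds; the hop names and timings are invented for illustration, and collecting such a trace across providers is the hard part that observability platforms address.

```python
def slowest_segment(trace: list[tuple[str, float]]) -> tuple[str, str, float]:
    """Given (hop_name, timestamp_ms) pairs in path order, return the segment with the largest delay."""
    if len(trace) < 2:
        raise ValueError("need at least two hops")
    segments = [
        (src, dst, t_dst - t_src)
        for (src, t_src), (dst, t_dst) in zip(trace, trace[1:])
    ]
    return max(segments, key=lambda s: s[2])


# Hypothetical trace of one packet from a branch office to a cloud application.
trace = [
    ("branch-edge", 0.0),
    ("isp-a", 4.0),
    ("cloud-gw", 41.0),
    ("app-vpc", 44.0),
]
worst = slowest_segment(trace)
print(worst)  # the isp-a to cloud-gw segment accounts for most of the delay
```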

Unified Management Models: Integrating Security and Operations

The final piece of the modern networking puzzle involves the convergence of network and security operations into unified management models such as Secure Access Service Edge (SASE) and Zero Trust Network Access (ZTNA). For too long, network and security teams have operated in silos, using different tools and following different priorities, which often led to gaps in protection and inefficiencies in performance. By merging these functions into a single command hub, organizations can enforce a consistent security policy regardless of where a user is located or what device they are using to access the network. This unified approach allows for the creation of automated response playbooks that can neutralize threats at machine speed, ensuring that the network’s defense mechanism is just as fast and agile as the attacks it is designed to prevent.

As this transformation unfolds, the success of AI-native networks ultimately rests on the synergy between advanced technology and human expertise. While the infrastructure provides the autonomous tools and deep visibility required for modern operations, skilled professionals remain essential for governing the distributed environment and setting the strategic direction. Organizations that invest in their people alongside their hardware are able to build a resilient foundation that supports rapid innovation and protects against the most sophisticated threats. By embracing this combination of intelligent hardware and expert oversight, businesses can secure their place in a global economy that demands constant connectivity and absolute data integrity. This strategic alignment between human talent and autonomous systems offers the most effective defense against the uncertainties of a rapidly evolving digital landscape.