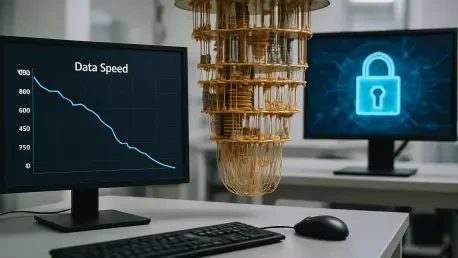

The rapid evolution of computational power has reached a critical threshold where the theoretical threats of yesterday are becoming the urgent security mandates of today. Solana, which built its formidable reputation on high transaction throughput and low latency, now finds itself at the forefront of a necessary but painful transition into the post-quantum era. In a recent collaboration with Project Eleven, the Solana Foundation and Solana Labs conducted intensive stress tests to evaluate the network's resilience against quantum attacks on its signature scheme. The findings reveal a stark reality: while the new cryptographic signatures provide robust protection against future quantum processors, they also cut the network's overall transaction processing capacity by a staggering ninety percent. This performance hit stems from the sheer cost of quantum-resistant algorithms, which demand significantly more bandwidth and computation than the elliptic-curve signature schemes that are standard across most blockchains today.

The Technical Reality: Quantum Resistance Challenges

Scaling the Wall: Post-Quantum Cryptography

The primary obstacle identified during these simulations is the physical size of the data required to ensure quantum safety. Standard Ed25519 signatures, which have long served as the bedrock of Solana's efficient architecture, are compact (64 bytes, paired with 32-byte public keys) and easy for nodes to propagate across the network rapidly. In contrast, the post-quantum signatures currently under evaluation are between twenty and forty times larger, and that extra data quickly saturates available bandwidth. Every transaction now carries a signature payload that dwarfs the transaction data itself, leading to severe congestion at the validator level. In a network optimized for lean, fast data transmission, such a drastic increase in packet size disrupts the fundamental mechanisms of the consensus protocol. Consequently, the high throughput that users have come to expect is sacrificed to maintain a level of security that can withstand future quantum attacks.
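The size gap can be made concrete with a little arithmetic. The sketch below is illustrative only: it applies the twenty-to-forty-times multipliers quoted above to Ed25519's 64-byte signature and compares the result with Solana's current 1,232-byte transaction size limit, a limit that could change in future protocol versions. The helper names are hypothetical.

```python
# Sketch: how the quoted 20x-40x signature growth compares with Solana's
# current transaction size limit. Helper names are hypothetical.
ED25519_SIGNATURE_BYTES = 64
SOLANA_MAX_TX_BYTES = 1232  # current limit; may change in future upgrades


def pq_signature_bytes(multiplier: int) -> int:
    """Signature size if it is `multiplier` times larger than Ed25519's."""
    return ED25519_SIGNATURE_BYTES * multiplier


def fits_in_transaction(signature_bytes: int, other_bytes: int = 0) -> bool:
    """True if one signature plus the rest of the payload fits the limit."""
    return signature_bytes + other_bytes <= SOLANA_MAX_TX_BYTES


if __name__ == "__main__":
    for multiplier in (1, 20, 40):
        size = pq_signature_bytes(multiplier)
        print(f"{multiplier}x: {size} bytes, fits alone: {fits_in_transaction(size)}")
```

Even the low end of the range, 20 × 64 = 1,280 bytes, already exceeds 1,232 bytes before any accounts or instructions are added, so at these sizes a single signature would not fit in a transaction under the current limit.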

Beyond bandwidth, the larger signatures impose a substantial computational burden on validator hardware. Validators must dedicate significantly more CPU cycles to verify these complex signatures, which directly increases the latency of block production and finality. The simulations suggested that the current generation of validator hardware might struggle to keep pace with a fully post-quantum environment without significant upgrades. The higher operational costs of processing these heavy cryptographic loads could shift the economic structure of the network, potentially raising entry barriers for smaller participants. Developers now face the difficult task of re-engineering the signature verification pipeline to handle these payloads more efficiently. If the network cannot adapt its internal logic to verify these larger signatures in parallel, the drop in performance will remain a persistent barrier.
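One way to reason about the combined bandwidth and CPU effect is a simple bottleneck model: throughput is capped by whichever resource saturates first. The sketch below is a back-of-the-envelope illustration, not a model of Solana's actual pipeline, and every numeric input in the usage section (link speed, transaction size, core count, verification time, the 30x size factor and 4x slowdown) is a hypothetical placeholder rather than a measurement from the tests.

```python
def estimated_tps(bandwidth_bytes_per_s: float, tx_bytes: int,
                  cores: int, verify_seconds_per_sig: float,
                  sigs_per_tx: int = 1) -> float:
    """Toy bottleneck model: transactions per second is the minimum of the
    network cap (bandwidth / transaction size) and the CPU cap (parallel
    verification cores / time to verify one transaction's signatures)."""
    if min(tx_bytes, cores, sigs_per_tx) <= 0 or verify_seconds_per_sig <= 0:
        raise ValueError("sizes, counts and times must be positive")
    network_cap = bandwidth_bytes_per_s / tx_bytes
    cpu_cap = cores / (verify_seconds_per_sig * sigs_per_tx)
    return min(network_cap, cpu_cap)


if __name__ == "__main__":
    # Hypothetical inputs for illustration only.
    link = 125_000_000  # bytes/s, roughly a 1 Gbit/s link
    classical = estimated_tps(link, tx_bytes=250, cores=16,
                              verify_seconds_per_sig=50e-6)
    # Same transaction with a 30x larger signature and a hypothetical 4x slower verify.
    pq_tx_bytes = 250 - 64 + 64 * 30
    post_quantum = estimated_tps(link, tx_bytes=pq_tx_bytes, cores=16,
                                 verify_seconds_per_sig=200e-6)
    print(f"classical ~{classical:,.0f} TPS, post-quantum ~{post_quantum:,.0f} TPS")
```

Because the result is a minimum, adding verification cores helps only while the CPU is the bottleneck; once larger signatures make bandwidth the binding constraint, parallel verification alone cannot recover the lost throughput.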

Addressing the Vulnerabilities: Public Key Visibility

A specific architectural detail makes Solana particularly exposed to the looming quantum threat compared to some of its contemporary peers. On Solana, a standard account address is itself the account's Ed25519 public key, so public keys are visible on-chain by design. That gives a clear target to quantum algorithms like Shor’s algorithm, which can efficiently derive a private key from its public counterpart by solving the elliptic-curve discrete logarithm problem on which Ed25519 relies. This transparency, while convenient for account management and speed in a classical computing environment, becomes a major liability against adversaries with quantum capabilities. The ongoing security tests highlight that simply hiding keys is not a sufficient defense; rather, the entire method of account interaction must be overhauled so that assets remain secure even if a public key is exposed. Proactive defense requires a fundamental shift in how the ledger records and verifies ownership, toward cryptography that does not rest on the discrete-logarithm and factoring problems that quantum machines are expected to solve efficiently.
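The exposure is easy to see in code: a Solana account address is the base58 encoding (Bitcoin alphabet) of a 32-byte Ed25519 public key, so decoding any address yields exactly the input an attacker running Shor’s algorithm would need. The sketch below is a minimal standard-library base58 codec written for illustration; the function names are hypothetical, and production code would use an established library. As a sanity check, the System Program's ID is the all-zero key, which encodes as thirty-two '1' characters.

```python
# Minimal base58 codec (Bitcoin alphabet, as used for Solana addresses).
# Function names are hypothetical; use a vetted library in production.
ALPHABET = "123456789ABCDEFGHJKLMNPQRSTUVWXYZabcdefghijkmnopqrstuvwxyz"


def b58encode(data: bytes) -> str:
    n = int.from_bytes(data, "big")
    out = ""
    while n > 0:
        n, rem = divmod(n, 58)
        out = ALPHABET[rem] + out
    # Each leading zero byte is represented by a leading '1'.
    pad = len(data) - len(data.lstrip(b"\x00"))
    return "1" * pad + out


def b58decode(text: str) -> bytes:
    n = 0
    for ch in text:
        n = n * 58 + ALPHABET.index(ch)  # raises ValueError on invalid chars
    pad = len(text) - len(text.lstrip("1"))
    body = n.to_bytes((n.bit_length() + 7) // 8, "big") if n else b""
    return b"\x00" * pad + body


def address_to_public_key(address: str) -> bytes:
    """An ordinary Solana address decodes directly to the Ed25519 public key."""
    key = b58decode(address)
    if len(key) != 32:
        raise ValueError("expected a 32-byte Ed25519 public key")
    return key
```

No secret is involved at any step: the public key falls directly out of the address that every observer of the ledger can read.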

The necessity of these upgrades is underscored by the fact that once a quantum computer reaches a certain level of qubit stability, existing assets could be compromised in a matter of seconds. By identifying these vulnerabilities in 2026, the development community is attempting to stay ahead of a curve that many other networks have yet to acknowledge fully. However, the trade-off remains the central point of contention among stakeholders who prioritize speed above all else. The tests showed that while the security protocols successfully blocked simulated quantum attacks, the user experience suffered as transaction confirmations slowed from milliseconds to several seconds. This creates a strategic dilemma for the foundation: how to maintain the competitive advantage of high speed while building a fortress that can survive the next century of computing. The research team is currently analyzing the data to determine if specific types of transactions can be prioritized for different levels of security to mitigate the overall impact on the network throughput.
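The idea of applying heavier security only to some transactions can be quantified with a simple model. Assume, purely for illustration, that throughput is inversely proportional to the average per-transaction cost, that a classical transaction costs 1 unit, and that a post-quantum transaction costs c units; c = 10 reproduces the ninety percent drop when every transaction is post-quantum. If a fraction f of transactions opt into post-quantum signatures, throughput relative to today is:

$$
R(f) = \frac{1}{(1 - f) + f\,c}, \qquad R(1) = \frac{1}{c} = 0.1 \quad \text{for } c = 10.
$$

For example, with c = 10 and f = 0.1, R = 1/(0.9 + 1.0) = 1/1.9 ≈ 0.53, so the network would keep roughly half of its throughput instead of a tenth. The model ignores fixed per-block overheads and any interaction between the two transaction types.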

Navigating the Path: Optimization and Resilience

Strategic Tools: Winternitz Vaults and Hybrid Systems

To bridge the gap between high performance and robust security, developers are exploring several workarounds that do not require a total network overhaul. One of the most promising is the Winternitz Vault, which offers quantum protection at the individual wallet level using hash-based Winternitz one-time signatures, whose security rests on hash functions rather than on the discrete-logarithm problem that Shor’s algorithm breaks. This approach lets high-value users or institutions opt into a more secure but slower signature scheme without forcing the entire network to adopt the heavy data payloads associated with global post-quantum standards. By isolating the security upgrades to specific vaults, the network can keep its general speed for everyday micro-transactions while offering a “gold standard” of protection for significant asset holdings. This tiered security model provides a flexible path forward, letting the ecosystem evolve at different speeds depending on the needs and risk profiles of different user segments and decentralized applications.
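To illustrate why Winternitz signatures are quantum-resistant but bulky, the sketch below implements a generic textbook Winternitz one-time signature (W-OTS) over SHA-256 with w = 16. It is not the Solana Winternitz Vault program: the hash function, parameters, and function names are assumptions chosen for clarity. Security rests only on the hash function, and each key pair must sign exactly one message, because signing two different messages with the same key can let an attacker forge signatures.

```python
import hashlib
import os

# Textbook W-OTS sketch. Parameters are illustrative assumptions,
# not those of Solana's Winternitz Vault.
W = 16                             # each hash chain encodes one base-16 digit
N = 32                             # SHA-256 output size in bytes
MSG_DIGITS = 64                    # 256-bit digest / 4 bits per digit
CSUM_DIGITS = 3                    # max checksum 64 * 15 = 960 < 16 ** 3
CHAINS = MSG_DIGITS + CSUM_DIGITS  # 67 chains in total


def _h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def _chain(x: bytes, steps: int) -> bytes:
    """Apply the hash function `steps` times."""
    for _ in range(steps):
        x = _h(x)
    return x


def _digits(message: bytes) -> list[int]:
    """Base-16 digits of the message digest followed by a checksum.

    Raising any message digit (the only direction an attacker can move
    along a chain) lowers the checksum, which would require lowering a
    checksum digit, and that needs a hash preimage."""
    msg = []
    for byte in _h(message):
        msg.extend((byte >> 4, byte & 0x0F))
    checksum = sum(W - 1 - d for d in msg)
    csum = [(checksum >> (4 * i)) & 0x0F for i in reversed(range(CSUM_DIGITS))]
    return msg + csum


def keygen() -> tuple[list[bytes], bytes]:
    """Return (secret chain starts, compressed public key)."""
    sk = [os.urandom(N) for _ in range(CHAINS)]
    return sk, _h(b"".join(_chain(s, W - 1) for s in sk))


def sign(sk: list[bytes], message: bytes) -> list[bytes]:
    """Reveal each chain advanced by its digit. Use each key ONCE."""
    return [_chain(s, d) for s, d in zip(sk, _digits(message))]


def verify(pk: bytes, message: bytes, sig: list[bytes]) -> bool:
    """Finish every chain to the top and compare with the public key."""
    if len(sig) != CHAINS:
        return False
    ends = [_chain(s, W - 1 - d) for s, d in zip(sig, _digits(message))]
    return _h(b"".join(ends)) == pk
```

With 67 chains of 32 bytes each, a signature here is 2,144 bytes, roughly 33.5 times an Ed25519 signature and inside the twenty-to-forty-times range discussed earlier. Because keys are single-use, a Winternitz-based vault has to rotate to a freshly generated key after every spend.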

Another experimental approach involves the use of hybrid signing, which blends traditional Ed25519 signatures with newer, quantum-resistant ones to maintain backward compatibility. This dual-layered system ensures that the network remains functional for existing software while gradually introducing the security layers necessary for future-proofing. During the simulations, hybrid signing showed a more moderate impact on latency than pure quantum-resistant protocols, though it still required substantial optimization to prevent validator bottlenecks. The goal of this hybrid strategy is to create a transition period where the network can test the stability of new cryptographic primitives without risking a complete shutdown or a mass migration of assets. Developers are also working on “native verifiers,” which are specialized software tools designed to process these heavy cryptographic loads more efficiently within the core architecture. These verifiers aim to offload the heaviest mathematical tasks to optimized hardware paths, potentially recovering some of the lost speed.
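The hybrid idea can be expressed as a verification policy. The sketch below is hypothetical: `HybridSignature`, `hybrid_verify`, and the `require_pq` switch are illustrative names, and the two verifier callables stand in for real Ed25519 and post-quantum verification routines rather than any particular library's API. The classical signature is always checked so existing software keeps working; a post-quantum signature, when present, must also verify, and `require_pq` models a later stage of the transition in which classical-only transactions are no longer accepted.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# A verifier takes (message, signature) and returns True if the signature is valid.
Verifier = Callable[[bytes, bytes], bool]


@dataclass(frozen=True)
class HybridSignature:
    classical: bytes                      # e.g. a 64-byte Ed25519 signature
    post_quantum: Optional[bytes] = None  # absent for legacy transactions


def hybrid_verify(message: bytes, sig: HybridSignature,
                  verify_classical: Verifier, verify_pq: Verifier,
                  require_pq: bool = False) -> bool:
    """Accept only if every signature that is present (or required) verifies."""
    if not verify_classical(message, sig.classical):
        return False
    if sig.post_quantum is None:
        # Legacy path: allowed during the transition, rejected once PQ is mandatory.
        return not require_pq
    return verify_pq(message, sig.post_quantum)
```

When both signatures are present, this AND combination means a forger must break both schemes, at the cost of carrying and checking both payloads, which is consistent with the latency overhead the simulations reported.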

Strategic Implementation: Long-Term Network Health

The consensus among industry experts is that preparing for the quantum transition is a marathon requiring a decade-long perspective rather than a quick fix. Because upgrades of this complexity could take from 2026 to 2030 to fully implement and stabilize, starting these rigorous tests now was considered a vital strategic move. The results so far have served as a wake-up call for the entire blockchain industry, demonstrating that the theoretical “quantum apocalypse” has tangible consequences for network performance today. While the ninety percent speed drop was a sobering discovery, it provided the data needed to begin the next phase of optimization. Engineers have already begun drafting new validator hardware specifications that could mitigate some of the computational overhead. This forward-looking approach aims to ensure that the community is not caught off guard when quantum hardware becomes accessible to malicious actors or competing national interests.

Ultimately, the network's ability to remain competitive depends on whether it can integrate these heavy security layers without sacrificing the core scalability that defines its value proposition. The development teams concluded that the initial simulation results are a baseline for improvement rather than a final verdict on the future of the platform. Actionable next steps include refining the Winternitz Vault protocols and expanding the hybrid signing pilot program to a wider range of testnet participants. By focusing on modular security updates, the foundation aims to provide a roadmap that balances the immediate need for high transaction volume with the long-term requirement for cryptographic resilience. The transition to a post-quantum state is viewed not as an end to high-speed blockchain, but as a catalyst for a new era of architectural innovation. Stakeholders are encouraged to begin evaluating their own security needs and to prepare for a multi-year migration process that prioritizes the safety of the global digital economy.