Modern industrial sites and retail outlets are currently generating vast quantities of raw data that would overwhelm traditional wide-area networks if every byte were sent to a central cloud for processing. This surge in localized data production has created a critical bottleneck for enterprises attempting to implement real-time automation and sophisticated machine learning at the point of origin. To address this challenge, Hewlett Packard Enterprise has introduced a new generation of ProLiant systems specifically engineered to handle high-performance computing tasks in environments that are often physically demanding and geographically isolated. By shifting the computational heavy lifting from distant data centers to the immediate proximity of sensors and users, these systems allow for instantaneous decision-making that is vital for modern logistics, manufacturing, and autonomous operations. This strategic pivot reflects a broader industry movement where the physical location of a server is becoming just as important as the silicon housed within it.

Architectural Foundations for Edge Computing

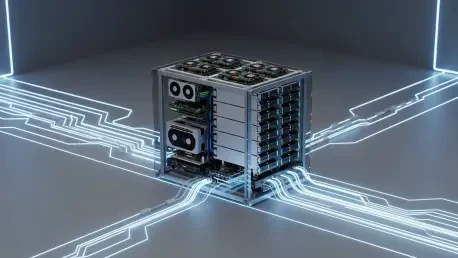

Versatility Through the EL2000 Chassis

The technical foundation of this new hardware rollout is the ProLiant Compute EL2000 chassis, a ruggedized enclosure designed to provide a flexible and resilient home for high-density compute nodes. This chassis acts as a standardized platform that can be deployed in diverse settings, from the backrooms of retail stores to the vibration-heavy environments of factory floors. Within this frame, the EL220 server node offers a streamlined option for organizations that need to maximize compute density without expanding their physical footprint. Because a single chassis holds two EL220 nodes, enterprises can effectively double local processing power compared with a single-node deployment. This density is particularly beneficial in high-traffic environments where space is at a premium, allowing multiple localized workloads, such as inventory tracking and real-time customer analytics, to run side by side without a major hardware overhaul.

Building on the flexibility of the EL2000 chassis, the EL240 node provides a more robust option for tasks that demand significant graphical processing and storage capacity. Unlike its more compact sibling, the EL240 is designed to house specialized accelerators, such as Nvidia RTX Pro 4500 and 6000 GPUs, which are essential for running complex artificial intelligence models at the edge. This capability allows for the execution of advanced computer vision and spatial intelligence applications directly on-site, reducing the need to transmit high-resolution video streams across the internet. The inclusion of additional storage options further ensures that critical data can be cached and analyzed locally, providing a buffer against network instability. Consequently, the combination of the EL2000 chassis and its specialized nodes creates a modular ecosystem that can be tailored to the specific thermal, spatial, and computational requirements of any distributed enterprise location.
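To make the bandwidth argument concrete, here is a rough back-of-the-envelope sketch in Python. The camera count, per-stream bitrate, and event sizes are illustrative assumptions, not figures from HPE, and the function names are hypothetical.

```python
def raw_uplink_mbps(cameras: int, mbps_per_stream: float) -> float:
    """Uplink needed if every camera stream is sent to the cloud."""
    return cameras * mbps_per_stream


def event_uplink_mbps(events_per_second: float, bytes_per_event: int) -> float:
    """Uplink needed if only local inference results leave the site."""
    return events_per_second * bytes_per_event * 8 / 1_000_000


# Assumed site: 40 cameras at 8 Mbit/s each (hypothetical values).
raw = raw_uplink_mbps(cameras=40, mbps_per_stream=8.0)

# Assumed output of on-site inference: 50 events/s at 500 bytes each.
events = event_uplink_mbps(events_per_second=50, bytes_per_event=500)

print(f"raw video uplink:   {raw:.1f} Mbit/s")
print(f"events-only uplink: {events:.2f} Mbit/s")
```

Under these assumed numbers, sending only inference results needs a small fraction of the uplink that raw video would. Real savings depend on codec, resolution, and how much footage must still be archived off-site.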

Resilience in Challenging Physical Environments

For environments that exceed the operational limits of standard hardware, the enhanced ProLiant DL145 Gen11 represents a significant leap forward in ruggedized server design. This 2U system is specifically built to withstand extreme temperatures reaching as high as 55 degrees Celsius, making it suitable for deployments in non-climate-controlled settings like desert oil rigs or hot warehouse mezzanines. Powered by the AMD EPYC 8005 series processor, the DL145 Gen11 delivers high-performance multi-core processing while maintaining the energy efficiency necessary for locations with limited power budgets. This balance of power and durability ensures that critical applications remain online even when the surrounding environment is hostile to traditional electronics. The ruggedized nature of this server is not merely about physical survival; it is about providing a reliable execution environment for the software that keeps modern industrial operations running smoothly.

Beyond its physical toughness, the DL145 Gen11 includes specialized features designed to support isolated or air-gapped operations, which are increasingly common in sensitive industries. With integrated support for Azure Local Disconnected Operations, the system can function as a private cloud node that remains fully operational even when severed from a primary internet connection. This is a vital feature for defense contractors, medical facilities, or remote research stations that must maintain strict data sovereignty and operational continuity. By providing a bridge between high-performance local compute and hybrid cloud management, the system ensures that intelligence is never lost due to a lack of connectivity. This approach to hardware design acknowledges that the edge is not just a place where data is collected, but a frontier where the most critical and time-sensitive enterprise decisions are being made daily.

Management and Scaling of Distributed Intelligence

Orchestrating Global Fleets via the Cloud

The physical deployment of hardware at the edge is only half of the challenge; managing thousands of individual servers across a global footprint requires a sophisticated and centralized software layer. HPE utilizes its Integrated Lights-Out technology to provide a secure, hardware-level management interface that functions independently of the operating system. This allows IT administrators to perform low-level maintenance, firmware updates, and security monitoring from a central office, regardless of where the physical server is located. When combined with the HPE Compute Ops Management platform, these individual units are transformed into a unified global fleet. This cloud-native management approach eliminates the need for expensive, on-site technical staff at every retail branch or factory, as the entire infrastructure can be monitored and regulated through a single, intuitive dashboard that provides real-time visibility into the health and performance of the edge.
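As a rough illustration of what a fleet-level health view involves, the sketch below rolls per-server status up into one summary. It assumes each server reports a health value of `OK`, `Warning`, or `Critical`, the values defined for `Status.Health` in the DMTF Redfish schema that iLO exposes. The `NodeStatus` type, the site and node names, and the `summarize_fleet` function are hypothetical and do not represent the HPE Compute Ops Management API.

```python
from collections import Counter
from dataclasses import dataclass

# Higher number = more urgent. Unknown values are treated as most urgent.
SEVERITY = {"OK": 0, "Warning": 1, "Critical": 2}


@dataclass(frozen=True)
class NodeStatus:
    site: str    # hypothetical site label, e.g. a store or plant ID
    node: str    # hypothetical server name
    health: str  # Redfish-style health string


def severity(status: NodeStatus) -> int:
    return SEVERITY.get(status.health, 2)


def summarize_fleet(statuses: list[NodeStatus]) -> dict:
    """Count nodes per health state and list the ones needing attention."""
    counts = Counter(s.health for s in statuses)
    needs_attention = sorted(
        (s for s in statuses if severity(s) > 0),
        key=lambda s: (-severity(s), s.site, s.node),  # worst first
    )
    return {"counts": dict(counts), "needs_attention": needs_attention}


fleet = [
    NodeStatus("store-014", "edge-a", "OK"),
    NodeStatus("store-014", "edge-b", "Warning"),
    NodeStatus("plant-02", "edge-a", "Critical"),
    NodeStatus("plant-02", "edge-b", "OK"),
]

summary = summarize_fleet(fleet)
for s in summary["needs_attention"]:
    print(f"{s.health:<8} {s.site}/{s.node}")
```

In practice the status records would come from the management platform or from each server's out-of-band interface rather than a hard-coded list. The point of the sketch is only that a single dashboard reduces to an aggregation over many per-node reports.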

This centralized management strategy also addresses the growing security concerns associated with placing high-value computing assets in non-secure locations. By leveraging hardware-rooted security features, the management platform can detect and respond to unauthorized physical or digital tampering immediately. The software provides a consistent security posture across the entire distributed network, ensuring that a server in a remote warehouse is just as protected as one in a tier-one data center. Furthermore, the ability to automate routine updates and patches across thousands of nodes simultaneously reduces the risk of human error and ensures that all edge devices are running the latest, most secure software. This level of orchestration is what allows enterprises to scale their AI initiatives from a single pilot project to a massive, worldwide deployment without becoming bogged down in the logistical nightmare of manual hardware management.

Enabling the Era of Spatial Intelligence

The move toward distributed AI is driven by a fundamental shift in how businesses interact with the physical world, moving away from reactive data logging toward proactive spatial intelligence. Companies in sectors like retail and logistics are now using these edge systems to power real-time automated systems that can track inventory movements, optimize floor layouts, and even manage autonomous robotic fleets. For instance, a large grocery chain can use the local compute power of ProLiant systems to analyze video feeds for shelf-stocking needs or to manage automated checkout systems without the latency of a cloud round-trip. This immediate processing capability is the key to creating a truly responsive business environment where technology anticipates needs before they become problems. The focus is no longer just on collecting data for later analysis, but on using that data to drive immediate, automated actions that improve efficiency and the customer experience.

As organizations continue to integrate these advanced AI capabilities into their daily operations, the distinction between digital insights and physical actions will continue to blur. The deployment of purpose-built edge hardware signifies a departure from the practice of simply retrofitting standard data center servers for use in the field. Instead, these new systems are designed from the ground up to be the brains of the modern, distributed enterprise. By providing the necessary thermal management, physical durability, and computational density, these platforms enable a new class of applications that were previously impossible due to technical constraints. The transition to distributed AI represents a maturing of the technology landscape, where intelligence is no longer confined to a central hub but is woven into the very fabric of the physical sites where business is conducted, creating a more resilient and agile corporate infrastructure.

Strategies for Edge Modernization

Organizations looking to capitalize on these advancements should begin by auditing their current data flows to identify which processes are most hindered by latency or high bandwidth costs. Not every workload belongs at the edge; the focus should be on tasks where real-time response or localized data sovereignty provides a clear competitive advantage. By starting with a targeted pilot program in a single facility or region, businesses can refine their management protocols and demonstrate the return on investment before committing to a full-scale global rollout. This incremental approach lets IT teams gain familiarity with cloud-based management tools and confirm that the physical infrastructure is properly configured for its specific environmental challenges.
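One way to structure such an audit is sketched below, as a minimal Python example. The `Workload` fields, the sample workloads, and the 100 GB/day upload budget are all hypothetical; a real audit would substitute measured latencies, actual WAN costs, and the organization's own compliance rules.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    name: str
    max_latency_ms: float         # slowest acceptable response time
    cloud_round_trip_ms: float    # measured round trip to the cloud region
    daily_upload_gb: float        # data that would cross the WAN each day
    data_must_stay_on_site: bool  # sovereignty or compliance constraint


def edge_candidate_reasons(w: Workload, upload_budget_gb: float = 100.0) -> list[str]:
    """Return the reasons, if any, that a workload is a candidate for the edge."""
    reasons = []
    if w.cloud_round_trip_ms > w.max_latency_ms:
        reasons.append("latency")
    if w.daily_upload_gb > upload_budget_gb:
        reasons.append("bandwidth")
    if w.data_must_stay_on_site:
        reasons.append("sovereignty")
    return reasons


# Hypothetical workloads with assumed measurements.
workloads = [
    Workload("shelf video analytics", 100, 180, 900, False),
    Workload("nightly sales report", 60_000, 180, 0.5, False),
    Workload("patient imaging archive", 2_000, 180, 40, True),
]

for w in workloads:
    reasons = edge_candidate_reasons(w)
    verdict = ", ".join(reasons) if reasons else "keep in cloud"
    print(f"{w.name}: {verdict}")
```

A workload with no reasons attached is a natural candidate to stay in the cloud, while one that trips several checks is a good choice for an initial pilot.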

The integration of ruggedized hardware and centralized management software serves as a catalyst for a more autonomous and efficient enterprise model. Moving forward, the most successful implementations will likely be those that treat edge computing not as a separate silo, but as a seamless extension of their broader hybrid cloud strategy. This requires a shift in mindset from traditional, centralized IT management to a more decentralized, yet highly orchestrated, operational philosophy. By prioritizing systems that offer both physical resilience and sophisticated remote visibility, leaders can build a foundation that supports the next decade of innovation in automation and artificial intelligence. The goal for any modern enterprise is to ensure that its compute capabilities are as mobile and adaptable as the business itself, turning every physical location into a potential source of real-time intelligence and growth.