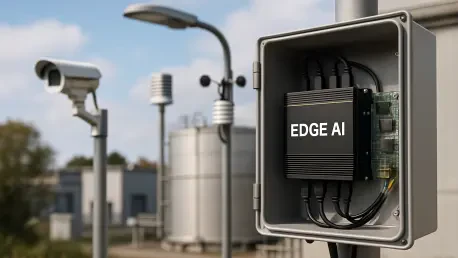

A massive surge in the deployment of interconnected sensors and autonomous actuators has forced a rethinking of the cloud-centric processing architectures that once dominated the technology landscape. For years, the Internet of Things operated on a simple hub-and-spoke model in which raw data often traveled hundreds or thousands of miles to a centralized data center for analysis. This centralized strategy worked during the early stages of connectivity, but the explosive growth of high-bandwidth data streams, such as high-definition video and high-frequency vibration telemetry, created unsustainable bottlenecks. Moving computation to the edge of the network addresses these bottlenecks by enabling localized intelligence. Instead of acting as passive conduits for information, modern devices can now filter, interpret, and respond to their environments in near real time. This shift toward localized processing is a structural change that makes a new class of mission-critical tasks possible.

The Technical Infrastructure of On-Device Processing

To support this transition, engineering focus has shifted from simple data transmission toward the demands of local inference. Training machine learning models remains resource-intensive and usually takes place in centralized clusters, but running those trained models increasingly happens on the device itself. This requires optimization techniques that let complex neural networks operate within the tight memory, compute, and power budgets of edge hardware. Developers use quantization to reduce numerical precision (for example, from 32-bit floating point to 8-bit integers) and pruning to remove redundant connections within a network. These methods allow capable models to run on small microcontrollers with little loss of the accuracy needed for complex pattern recognition. By shrinking the footprint of AI models, organizations have brought useful intelligence to hardware that was previously considered too limited for such computationally intensive workloads.
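
Both techniques can be illustrated in plain Python. The sketch below is a minimal illustration, not a production toolchain: it assumes a layer's weights arrive as a flat list of floats, uses per-tensor affine quantization to 8-bit integers, and prunes by zeroing the smallest-magnitude weights. The helper names (`quantize_int8`, `dequantize`, `magnitude_prune`) are hypothetical; real frameworks operate on whole tensors and often calibrate ranges on sample data.

```python
# Sketch: per-tensor int8 affine quantization and magnitude pruning.
# Assumption: a layer's weights are a flat list of floats (illustrative only).


def quantize_int8(weights):
    """Map floats to int8 codes using an affine scale and zero point."""
    # Widen the range to include 0.0 so that zero is exactly representable.
    w_min = min(min(weights), 0.0)
    w_max = max(max(weights), 0.0)
    qmin, qmax = -128, 127
    scale = (w_max - w_min) / (qmax - qmin) or 1.0  # guard: all-zero tensor
    zero_point = round(qmin - w_min / scale)
    codes = [max(qmin, min(qmax, round(w / scale) + zero_point)) for w in weights]
    return codes, scale, zero_point


def dequantize(codes, scale, zero_point):
    """Recover approximate float weights from int8 codes."""
    return [(c - zero_point) * scale for c in codes]


def magnitude_prune(weights, sparsity):
    """Zero out the `sparsity` fraction of weights with the smallest magnitudes."""
    k = int(len(weights) * sparsity)
    smallest = sorted(range(len(weights)), key=lambda i: abs(weights[i]))[:k]
    pruned = list(weights)
    for i in smallest:
        pruned[i] = 0.0
    return pruned


if __name__ == "__main__":
    w = [0.82, -0.03, 0.41, -0.77, 0.005, 0.29, -0.12, 0.64]
    codes, scale, zp = quantize_int8(w)
    error = max(abs(a - b) for a, b in zip(w, dequantize(codes, scale, zp)))
    print("int8 codes:", codes)
    print(f"max reconstruction error {error:.4f} vs scale {scale:.4f}")
    print("50% pruned:", magnitude_prune(w, 0.5))
```

Storing one-byte codes instead of 32-bit floats cuts weight storage by roughly four times, and with this scheme each weight's reconstruction error stays within half of `scale`.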

Advances in semiconductor technology have been just as important as these software techniques. General-purpose processors are increasingly supplemented or replaced by specialized silicon, such as Neural Processing Units (NPUs) and Vision Processing Units (VPUs), designed to perform the matrix arithmetic of AI inference efficiently. This hardware specialization enables a hybrid orchestration model in which edge and cloud complement each other. Local devices handle hot data that requires immediate action, while the cloud serves as a repository for cold data used for long-term storage and for retraining the next generation of models. Because raw data is filtered and summarized at the source, the network is far less likely to be overwhelmed by unrefined data. As a result, reliance is shifting away from massive data centers toward a more agile, decentralized framework that prioritizes efficiency and responsiveness where data is generated.
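
The hot/cold split can be sketched as a small router running on the device. This is a hypothetical illustration: `EdgeRouter`, its threshold, and its batch size are assumptions rather than any product's API, and the "upload" is simulated by appending to a list. Readings at or above the threshold trigger a local action immediately, while all readings are condensed into periodic summary records for the cloud.

```python
# Hypothetical edge-side router: act locally on "hot" readings and ship only
# compact summaries of "cold" data to the cloud. Names and thresholds are
# illustrative assumptions, not a specific product's API.
import statistics
from dataclasses import dataclass, field


@dataclass
class EdgeRouter:
    alert_threshold: float  # readings at or above this need immediate action
    batch_size: int         # raw readings condensed into each cloud summary
    buffer: list = field(default_factory=list)
    local_actions: list = field(default_factory=list)
    cloud_uploads: list = field(default_factory=list)  # stands in for a real upload

    def handle(self, sensor_id: str, value: float) -> None:
        # Hot path: decide on the device, with no network round trip.
        if value >= self.alert_threshold:
            self.local_actions.append((sensor_id, value))
        # Cold path: buffer every reading for later summarization.
        self.buffer.append(value)
        if len(self.buffer) >= self.batch_size:
            self.flush()

    def flush(self) -> None:
        """Replace a batch of raw readings with one summary record for the cloud."""
        if not self.buffer:
            return
        self.cloud_uploads.append({
            "count": len(self.buffer),
            "mean": statistics.fmean(self.buffer),
            "max": max(self.buffer),
        })
        self.buffer.clear()


if __name__ == "__main__":
    router = EdgeRouter(alert_threshold=90.0, batch_size=4)
    for v in [70.0, 72.5, 95.0, 71.0, 69.0]:
        router.handle("pump-7", v)
    print(router.local_actions)  # [('pump-7', 95.0)]
    print(router.cloud_uploads)  # [{'count': 4, 'mean': 77.125, 'max': 95.0}]
```

Summaries keep upstream traffic small; a deployment that needs raw samples for retraining might additionally upload selected raw windows, which this sketch omits.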

Strategic Advantages of Decentralized Computation

The most immediate benefit of moving intelligence to the edge is a large reduction in latency, a critical requirement for time-sensitive operations. In high-stakes environments such as autonomous vehicle navigation or high-speed robotic manufacturing, even a few milliseconds of delay caused by a round trip to the cloud can contribute to a serious failure. By processing sensor data locally, these systems can respond within a few milliseconds, and in some control loops in under one millisecond, adjusting almost immediately to changes in their environment. This responsiveness provides a level of safety and reliability that is difficult to achieve with cloud-dependent systems, particularly in sectors where human lives or expensive assets are at risk. The ability to make split-second decisions without waiting for a distant server has become an expected capability in industrial automation. Because local processing skips the network round trip entirely, its response time is not bounded by the propagation delay, ultimately limited by the speed of light, that constrains centralized systems.
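
A back-of-the-envelope calculation shows why distance alone matters. The sketch below computes only the propagation component of a cloud round trip, assuming signals travel through optical fiber at about 0.68 times the speed of light and that the route is as short as the straight-line distance; the 1,500 km distance and 100 km/h vehicle speed are hypothetical. Real round trips are longer because routing, queuing, and server processing add further delay.

```python
# Back-of-the-envelope lower bound on cloud round-trip time from propagation
# delay alone. Assumptions: signals travel through optical fiber at roughly
# 0.68 c, and the route length equals the straight-line distance (real routes
# are longer, and routing, queuing, and server time add more delay).
SPEED_OF_LIGHT_M_PER_S = 299_792_458
FIBER_VELOCITY_FACTOR = 0.68  # assumption: refractive index of silica ~1.47


def min_round_trip_ms(distance_km: float) -> float:
    """Propagation-only round-trip time to a server `distance_km` away."""
    one_way_s = distance_km * 1_000 / (SPEED_OF_LIGHT_M_PER_S * FIBER_VELOCITY_FACTOR)
    return 2 * one_way_s * 1_000


def metres_travelled(speed_km_per_h: float, delay_ms: float) -> float:
    """Distance a vehicle covers while waiting `delay_ms` for a response."""
    return speed_km_per_h / 3.6 * delay_ms / 1_000


if __name__ == "__main__":
    rtt = min_round_trip_ms(1_500)  # hypothetical data center 1,500 km away
    print(f"minimum round trip: {rtt:.1f} ms")
    print(f"car at 100 km/h moves {metres_travelled(100, rtt):.2f} m meanwhile")
```

Under these assumptions the round trip cannot drop below roughly 15 ms, during which a car at 100 km/h travels about 0.4 m before any server has even begun processing.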

Beyond speed, localized intelligence offers significant advantages for data privacy and cost management. Keeping sensitive information on the device reduces the amount of data exposed in transit and in central stores, and it simplifies compliance with increasingly strict data residency regulations. The economic benefits can also be substantial, because continuously transmitting raw high-definition video or large sensor logs to the cloud is expensive. By performing the primary analysis on the device and sending only relevant metadata or critical alerts to central servers, organizations can cut bandwidth consumption dramatically, in some deployments by more than ninety percent. This lets companies scale their IoT deployments without networking costs growing in proportion to the raw data their devices generate. Together, stronger privacy and lower operational overhead make edge computing a logical step for many data-driven enterprises looking to improve their bottom line.
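
The bandwidth arithmetic is easy to reproduce. Every figure in this sketch is a hypothetical assumption chosen for illustration, not a measurement: one camera streaming continuously at 4 Mbit/s, compared with an edge device that uploads 200 event records of 1 KB each plus 20 short alert clips of 2 MB each per day.

```python
# Rough daily bandwidth comparison: streaming raw video versus sending only
# edge-generated events. All figures are hypothetical assumptions chosen
# for illustration, not measurements.
SECONDS_PER_DAY = 86_400


def daily_bytes(bitrate_bps: float) -> float:
    """Bytes per day for a continuous stream at `bitrate_bps` bits per second."""
    return bitrate_bps * SECONDS_PER_DAY / 8


def reduction_percent(raw_bytes: float, sent_bytes: float) -> float:
    """Share of upstream traffic avoided by filtering on the device."""
    return 100 * (1 - sent_bytes / raw_bytes)


if __name__ == "__main__":
    raw = daily_bytes(4_000_000)          # assumed 4 Mbit/s camera stream
    sent = 200 * 1_000 + 20 * 2_000_000   # assumed 200 x 1 KB events + 20 x 2 MB clips
    print(f"raw: {raw / 1e9:.1f} GB/day, sent: {sent / 1e6:.1f} MB/day")
    print(f"reduction: {reduction_percent(raw, sent):.2f}%")
```

Here 43.2 GB of raw video per day shrinks to about 40 MB of uploads. The actual saving depends heavily on how often events fire and how much context, such as clips or thumbnails, each alert carries.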

Sector-Specific Implementations of Local Intelligence

Industrial environments have become the primary proving ground for localized intelligence, most visibly through predictive maintenance. Sensors attached to heavy machinery analyze acoustic and vibration signatures in real time to detect early signs of wear that human inspectors would miss. This allows maintenance teams to repair or replace a component before it fails, preventing costly downtime and extending the equipment's service life. Similarly, in smart city infrastructure, edge-enabled cameras analyze traffic flow and detect accidents locally rather than streaming continuous video to a central hub. Because raw footage need not leave the camera, this approach eases major privacy concerns while still providing the timely data needed for emergency response coordination and traffic light optimization. The result is a shift from reactive to proactive city management, in which complex urban dynamics can be handled with greater precision and far less human effort spent watching monitors.
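
A minimal version of on-device wear detection compares each new window of vibration samples against a baseline learned while the machine was known to be healthy. This sketch deliberately uses a simple time-domain feature, the RMS amplitude, with a mean-plus-k-standard-deviations threshold; practical systems often add frequency-domain features. The function names and the pure-sine test signal are hypothetical stand-ins for real accelerometer data.

```python
# Minimal condition-monitoring sketch: learn a baseline vibration level from
# windows recorded while the machine is known to be healthy, then flag windows
# whose RMS amplitude exceeds mean + k * stdev. This swaps in a simple
# time-domain RMS feature; real systems often add frequency-domain features.
import math
import statistics


def rms(window):
    """Root-mean-square amplitude of one window of accelerometer samples."""
    return math.sqrt(sum(x * x for x in window) / len(window))


def fit_baseline(healthy_windows):
    """Mean and sample standard deviation of RMS over healthy windows (needs >= 2)."""
    levels = [rms(w) for w in healthy_windows]
    return statistics.fmean(levels), statistics.stdev(levels)


def is_anomalous(window, baseline_mean, baseline_std, k=3.0):
    """True when the window's RMS is more than k standard deviations above baseline."""
    return rms(window) > baseline_mean + k * baseline_std


def synthetic_window(amplitude, freq_hz=50, sample_rate_hz=1_000, n=200):
    """Hypothetical test signal: a pure sine standing in for a vibration trace."""
    return [amplitude * math.sin(2 * math.pi * freq_hz * i / sample_rate_hz) for i in range(n)]


if __name__ == "__main__":
    healthy = [synthetic_window(a) for a in (1.0, 1.02, 0.98, 1.01, 0.99)]
    mean, std = fit_baseline(healthy)
    print(is_anomalous(synthetic_window(1.0), mean, std))  # False
    print(is_anomalous(synthetic_window(1.5), mean, std))  # True
```

Only the boolean verdict, or a short alert, needs to leave the device, which is what keeps this kind of monitoring cheap to run continuously.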

The impact of this technology is equally significant in healthcare and logistics, where real-time monitoring can be a matter of life or death. Wearable medical devices now use on-device algorithms to monitor cardiac rhythms or glucose levels, giving the patient immediate feedback and alerting emergency services as soon as an anomaly is detected. Because these devices do not need a constant internet connection to perform their primary monitoring function, they remain a reliable safety net even in remote areas. In logistics, smart sensors in shipping containers monitor the condition of fragile or temperature-sensitive cargo throughout the supply chain. These devices can detect when a cold-chain shipment exceeds a safe temperature threshold or when a package suffers a high-impact shock, creating an immediate, timestamped record of the event. This granular visibility helps maintain quality control from the factory floor to the final destination, regardless of network quality along the way.
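
The cold-chain logic reduces to scanning timestamped readings against thresholds. In this sketch the 2 to 8 °C band, the 5 g shock limit, and the `Event` and `scan_shipment` names are illustrative assumptions; it records the first reading of each temperature excursion (rather than every out-of-range sample) and every shock above the limit.

```python
# Sketch of on-device cold-chain monitoring: scan timestamped readings and log
# the start of each temperature excursion plus any shock above a limit.
# The 2-8 degC band and 5 g shock limit are illustrative assumptions.
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    timestamp_s: int
    kind: str
    value: float


def scan_shipment(readings, temp_range_c=(2.0, 8.0), shock_limit_g=5.0):
    """readings: iterable of (timestamp_s, temperature_c, acceleration_g) tuples."""
    low, high = temp_range_c
    events = []
    in_excursion = False
    for t, temp_c, accel_g in readings:
        out_of_range = not (low <= temp_c <= high)
        # Log only the first reading of each excursion, not every sample in it.
        if out_of_range and not in_excursion:
            events.append(Event(t, "temperature_excursion", temp_c))
        in_excursion = out_of_range
        if accel_g > shock_limit_g:
            events.append(Event(t, "shock", accel_g))
    return events


if __name__ == "__main__":
    log = scan_shipment([
        (0, 4.5, 0.2), (60, 5.1, 0.1), (120, 8.6, 0.3),
        (180, 9.2, 0.2), (240, 6.0, 7.4), (300, 5.5, 0.1),
    ])
    for event in log:
        print(event)
```

Because the scan runs on the sensor, the event log is built even while the container is out of coverage and can be uploaded once a connection returns.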

Navigating the Obstacles to Seamless Scaling

Despite these advantages, implementing localized intelligence involves significant technical hurdles, chiefly the balance between power and performance. Developers must navigate a triangle of trade-offs in which higher computational performance usually means higher power consumption and higher hardware cost. For battery-powered sensors, running an AI model continuously can quickly drain the battery, so designs rely on lean code and aggressive power management, such as keeping the processor asleep between inferences. Engineers must find the point at which a model is small enough to run efficiently yet large enough to produce reliable results. This need for extreme optimization adds complexity to the development cycle that is largely absent in the comparatively abundant resource environment of the cloud. Managing these hardware constraints remains one of the most challenging aspects of edge software design.
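
The effect of duty cycling on battery life follows from a weighted average of active and sleep current. All values in this sketch (15 mA during inference, 5 µA asleep, 50 ms per inference, a 1,000 mAh battery) are hypothetical, and the model ignores self-discharge, temperature, and regulator losses, so real lifetimes would be shorter.

```python
# Duty-cycling arithmetic for a battery-powered sensor. All currents, timings,
# and the 1,000 mAh capacity are hypothetical; the model ignores self-discharge,
# temperature effects, and regulator losses, so real lifetimes will be shorter.


def average_current_ma(active_ma, sleep_ma, active_s, period_s):
    """Mean current when the device is active for active_s out of every period_s."""
    duty = active_s / period_s
    return active_ma * duty + sleep_ma * (1 - duty)


def battery_life_days(capacity_mah, avg_current_ma):
    """Idealized runtime: capacity divided by average draw, converted to days."""
    return capacity_mah / avg_current_ma / 24


if __name__ == "__main__":
    capacity = 1_000              # mAh
    active, sleep = 15.0, 0.005   # mA while running inference / while asleep
    inference_s = 0.05            # one 50 ms inference
    for label, period in [("continuous", 0.05), ("once per second", 1.0), ("once per minute", 60.0)]:
        avg = average_current_ma(active, sleep, inference_s, period)
        print(f"{label:>16}: {avg:.4f} mA -> {battery_life_days(capacity, avg):.1f} days")
```

Under these assumptions, continuous inference empties the battery in under three days, while one inference per minute stretches the same battery to years, which is why lean models and sleep-heavy schedules matter so much at the edge.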

Furthermore, the operational complexity of managing a distributed fleet of thousands of individual devices creates new administrative challenges for IT departments. Unlike a centralized data center where updates can be managed in one place, an edge ecosystem requires robust operations pipelines to push model updates and monitor for performance degradation across diverse hardware platforms. Security also remains a major concern, as edge devices are often located in public or remote areas where they are vulnerable to physical tampering. If a device is stolen, an attacker could potentially gain access to the localized models or the broader corporate network through the hardware interface. Additionally, a lack of universal standards for edge software can lead to vendor lock-in, making it difficult for organizations to integrate new technologies or scale their operations across different manufacturers. Solving these issues requires a holistic approach that combines secure hardware design with open software standards.
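
Two small building blocks help with pushing model updates to a large fleet: deterministic staged-rollout cohorts and an integrity check on each downloaded model file. The sketch below is one possible approach under stated assumptions. The salt, device IDs, and percentages are hypothetical, and a bare SHA-256 comparison only helps if the expected digest arrives over a trusted, authenticated channel; production systems typically verify a cryptographic signature instead.

```python
# Sketch of two fleet-management building blocks: deterministic staged-rollout
# cohorts and an integrity check on a downloaded model file. The salt, device
# IDs, and percentages are hypothetical. A bare hash check only helps if the
# expected digest comes from a trusted, authenticated channel; production
# systems typically verify a cryptographic signature instead.
import hashlib
import hmac


def rollout_bucket(device_id: str, salt: str) -> int:
    """Stable bucket in [0, 100) derived from the device ID and a per-release salt."""
    digest = hashlib.sha256(f"{salt}:{device_id}".encode()).digest()
    return int.from_bytes(digest[:8], "big") % 100


def in_rollout(device_id: str, salt: str, percent: int) -> bool:
    """Devices admitted at a small percentage stay admitted as the rollout widens."""
    return rollout_bucket(device_id, salt) < percent


def verify_artifact(blob: bytes, expected_sha256_hex: str) -> bool:
    """Compare the file's SHA-256 digest with the expected value in constant time."""
    return hmac.compare_digest(hashlib.sha256(blob).hexdigest(), expected_sha256_hex)


if __name__ == "__main__":
    fleet = [f"sensor-{i:04d}" for i in range(1_000)]
    canary = [d for d in fleet if in_rollout(d, "model-v2", 5)]
    print(f"canary cohort: {len(canary)} of {len(fleet)} devices")
    model = b"placeholder model weights"
    expected = hashlib.sha256(model).hexdigest()
    print(verify_artifact(model, expected), verify_artifact(model + b"x", expected))  # True False
```

Hashing the device ID with a per-release salt gives each update a different but reproducible canary group, so monitoring can compare canary devices against the rest of the fleet before the percentage is raised.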

Emerging Trends and Next Steps for Industry Leaders

The future of this field is being shaped by emerging techniques such as federated learning, which enables collaborative model training without moving raw data off the device. In this approach, individual edge nodes train on data from their local environment and share only model parameter updates with a central server, which aggregates them into an improved global model. The global model improves over time while the user's raw data stays on local hardware. At the same time, tiny machine learning (TinyML), which targets the smallest microcontroller-class sensors, is pushing intelligence into the fabric of everyday surroundings. These low-power solutions can run AI workloads on power budgets of milliwatts or even microwatts, bringing smart capabilities to everyday items like light switches and basic environmental probes. The ongoing rollout of 5G, with 6G under development, further accelerates this trend by providing the low-latency connectivity that edge nodes need to communicate with each other.
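
The federated loop can be sketched with a one-dimensional linear model. This is a minimal FedAvg-style illustration, not a specific framework's API: each hypothetical client runs a few gradient-descent steps on its private (x, y) pairs and returns only its updated parameters and sample count, and the server averages parameters weighted by sample count. The client data, learning rate, and round count are assumptions; real systems add client sampling, secure aggregation, and protections against information leaking through the updates themselves.

```python
# Minimal federated averaging (FedAvg-style) sketch for a 1-D linear model
# y = w * x + b. Each client runs a few gradient-descent steps on its private
# data and returns only updated parameters plus its sample count; the server
# averages parameters weighted by sample count. Client data, learning rate, and
# round counts are hypothetical.


def local_update(global_params, data, lr=0.1, epochs=20):
    """Train on one client's private (x, y) pairs; raw data never leaves this function."""
    w, b = global_params
    n = len(data)
    for _ in range(epochs):
        # Gradients of mean squared error with respect to w and b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return [w, b], n


def federated_average(updates):
    """Weight each client's parameters by its share of the total sample count."""
    total = sum(n for _, n in updates)
    dim = len(updates[0][0])
    return [sum(params[i] * n for params, n in updates) / total for i in range(dim)]


if __name__ == "__main__":
    # Three clients whose private samples all follow y = 2x + 1 over different x ranges.
    clients = [
        [(x, 2 * x + 1) for x in (0.0, 0.5, 1.0)],
        [(x, 2 * x + 1) for x in (1.5, 2.0)],
        [(x, 2 * x + 1) for x in (0.2, 0.8, 1.2, 1.6)],
    ]
    global_params = [0.0, 0.0]
    for _ in range(50):
        updates = [local_update(global_params, data) for data in clients]
        global_params = federated_average(updates)
    print([round(p, 3) for p in global_params])  # approximately [2.0, 1.0]
```

The server never sees an (x, y) pair, yet the shared model converges to the relationship common to all clients' data.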

Strategic planning for these technologies has become a decisive factor in industrial competitiveness. Stakeholders are moving away from generic cloud-only strategies and investing in specialized hardware that supports inference at the point of data origin. This shift produces more resilient and responsive systems that keep functioning when network connectivity is unstable. Organizations that prioritize lean, optimized models can deploy intelligence in environments previously unreachable by traditional computing. The transition toward a distributed internet of intelligence is defined by reduced dependence on bandwidth and stronger data sovereignty through local processing. By integrating these localized capabilities, industry leaders can mitigate the risks of centralized bottlenecks and build a more robust technological ecosystem. The lessons learned along the way provide a framework for continuous improvement in the design and deployment of autonomous systems.