Matilda Bailey is a distinguished networking specialist whose expertise lies at the intersection of high-speed data center architectures and next-generation optical solutions. With years of experience monitoring the evolution of cellular and wireless technologies, she has become a leading voice on how artificial intelligence is fundamentally reshaping physical infrastructure. As hyperscale environments move away from traditional telecom models toward massive, GPU-centric clusters, her insights provide a roadmap for the “opticalization” of the modern data center.

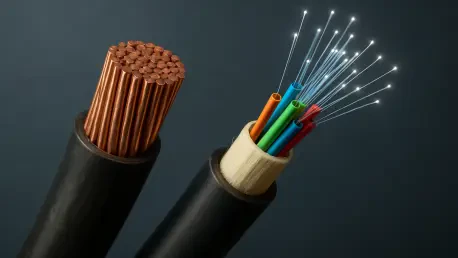

In this conversation, we explore the rapid transition from copper to fiber, the technical milestones required to reach 3.2T throughputs, and the shifting debate between co-packaged and pluggable optics. We also examine the critical innovations in energy efficiency, such as liquid-cooled modules and thermal energy harvesting, that are necessary to sustain the unprecedented power demands of AI backend fabrics.

As AI workloads shift the focus from individual chips to entire computing systems, how is the transition from copper to optical connectivity changing physical rack density? Furthermore, what specific performance benchmarks are currently driving this “opticalization” of the network infrastructure?

The shift is dramatic because we are no longer just looking at how fast a single chip can process data, but how effectively we can connect thousands of them into a single, cohesive computing engine. As clusters get larger and racks become significantly denser to accommodate more GPUs, traditional copper cabling simply cannot keep up with the reach and signal integrity required at these scales. This “opticalization” is driven by a need for reliable, high-performance connectivity that can handle the massive data throughput of AI backend fabrics. Specifically, the industry is benchmarking success against the ability to support 102.4T switching capacity in a compact 1RU system, a feat that is only possible by doubling lane rates and moving toward optical interconnects. We are seeing a “first-order challenge” where the speed and scale of these AI data centers exceed what traditional telecom-oriented designs were built for by at least an order of magnitude.
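To make the lane-rate arithmetic behind that benchmark concrete, here is a minimal sketch of how a 102.4T switch maps onto electrical lanes and front-panel ports. Only the 102.4T capacity comes from the answer above; the function name, the candidate lane rates, and the port speeds are illustrative assumptions, not a description of any specific product.

```python
def lanes_needed(capacity_gbps: int, lane_rate_gbps: int) -> int:
    """Number of lanes (or ports) required to carry capacity_gbps at a given rate."""
    # Ceiling division, so a partially filled lane still counts as a whole lane.
    return -(-capacity_gbps // lane_rate_gbps)


SWITCH_CAPACITY_GBPS = 102_400  # 102.4T, the figure quoted in the interview

# Assumed candidate per-lane rates in Gb/s: doubling the rate halves the lane count.
for lane_rate in (100, 200, 400):
    count = lanes_needed(SWITCH_CAPACITY_GBPS, lane_rate)
    print(f"{lane_rate}G per lane -> {count} lanes")

# Assumed port speeds in Gb/s: faster ports mean fewer cages on a 1RU faceplate.
for port_speed in (800, 1_600):
    count = lanes_needed(SWITCH_CAPACITY_GBPS, port_speed)
    print(f"{port_speed}G ports -> {count} ports")
```

Under these assumptions, each doubling of the lane rate halves the number of lanes the switch must drive, which is why lane rate is treated as the main lever for fitting this capacity into a compact chassis.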

With the AI optics market projected to surpass $20 billion by 2030, how are you preparing for the rapid transition from 800G to 1.6T and 3.2T throughputs? What technical hurdles must be cleared to implement 400G-per-lane architectures effectively, and what does the step-by-step deployment timeline look like?

Preparing for this growth involves an aggressive acceleration of our technology roadmaps, specifically focusing on the shift from 200G-per-lane to 400G-per-lane architectures. The primary technical hurdle is developing optical DSPs, like the Taurus platform, that can reliably manage 400G per lane to enable the next generation of 1.6T and eventually 3.2T transceiver modules. Our deployment timeline is quite compressed; we expect 1.6T to surpass 800G as the primary port speed in new AI fabrics as early as 2027. Over the next five years, we anticipate shipping more than 100 million units of these high-speed transceivers, with nearly half of them utilizing 400G optics. This transition is essential because doubling the lane rate is the most proven strategy we have to keep pace with the exponential growth of AI cluster bandwidth.
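The relationship between lane rate and module speed can be sketched with simple division. The helper below is hypothetical and purely arithmetic: it lists how many lanes each module speed would need at 200G and 400G per lane, and it does not claim that every combination exists as a shipping form factor.

```python
def lane_options(module_gbps: int, lane_rates=(200, 400)) -> dict[int, int]:
    """Map each per-lane rate (Gb/s) to the lane count needed for one module."""
    # Keep only lane rates that divide the module speed evenly.
    return {
        rate: module_gbps // rate
        for rate in lane_rates
        if module_gbps % rate == 0
    }


# Module speeds named in the interview, expressed in Gb/s.
for module_gbps in (800, 1_600, 3_200):
    print(f"{module_gbps}G module: {lane_options(module_gbps)}")
```

Read this way, a 3.2T module at 400G per lane needs the same eight lanes that a 1.6T module needs at 200G per lane, which is the sense in which doubling the lane rate carries the roadmap forward.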

While co-packaged optics (CPO) are gaining traction for future large GPU configurations, many still favor pluggable copper or linear optics. In which specific use cases does CPO become mandatory versus optional, and how do these choices impact the long-term flexibility and cost of backend fabrics?

Co-packaged optics move from optional to mandatory when we look at the most massive scale-up domains, such as the upcoming Feynman racks due in 2028, where the density requirements simply outstrip what passive copper can provide. For hyperscalers building around platforms like Spectrum X, CPO offers a way to integrate connectivity directly with the GPU, reducing power consumption and physical footprint. However, for many other use cases, pluggable copper, microLEDs, and linear optics remain highly attractive because they offer superior flexibility and lower initial costs for systems that haven’t yet reached those extreme density limits. The choice impacts the long-term fabric by forcing a trade-off: CPO provides the ultimate performance ceiling for the largest systems, while pluggable solutions allow operators to upgrade individual components more easily as technology evolves. We expect a broad mix of these solutions to coexist over the next five years, depending entirely on the specific scale and use case of the deployment.

Energy efficiency is a primary hurdle as clusters get larger, leading to innovations like liquid-cooled optics and thermal energy harvesting. How do these cooling technologies integrate into existing hyperscale environments, and what operational steps are necessary to manage high-performance modules consuming upwards of 400W?

Integrating these technologies requires a fundamental rethink of data center cooling, moving away from traditional air cooling toward liquid-cooled cold plates integrated directly into the optics modules. For instance, we are seeing the emergence of eXtra-dense Pluggable Optics (XPO) that use 64 electrical lanes and can support modules consuming over 400W through these liquid-cooled interfaces. Operationally, this means data centers must implement more robust fluid management systems and rely on multi-source agreements to ensure a steady supply of compatible high-power modules from various vendors. Additionally, we are looking at harvesting thermal energy, essentially converting the waste heat generated by these high-performance systems back into usable electrical power. This helps mitigate the massive energy requirements that AI infrastructure places on enterprise and hyperscale organizations, making the overall system more sustainable.
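For a rough sense of scale, the sketch below turns the 64-lane, 400W figures into per-lane and per-bit numbers. The 200G per-lane rate is an assumption, since the interview does not state the lane rate for XPO, and 400W is treated as a round illustrative budget rather than a measured value. The function name is hypothetical.

```python
def module_power_profile(power_w: float, lanes: int, lane_rate_gbps: int) -> dict[str, float]:
    """Derive aggregate bandwidth and power density for one pluggable module."""
    total_gbps = lanes * lane_rate_gbps
    return {
        "bandwidth_tbps": total_gbps / 1000,
        "watts_per_lane": power_w / lanes,
        # W / (bit/s) = J/bit; with Gb/s in the denominator, scale by 1000 to get pJ/bit.
        "pj_per_bit": power_w * 1000 / total_gbps,
    }


# 64 lanes and 400 W come from the interview; 200G per lane is an assumption.
profile = module_power_profile(power_w=400, lanes=64, lane_rate_gbps=200)
for name, value in profile.items():
    print(f"{name}: {value:.2f}")
```

Under the 200G-per-lane assumption this works out to 12.8 Tb/s per module at roughly 6 W per lane, a heat load concentrated enough to illustrate why cold plates rather than airflow are being built into the module interface.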

Multi-rail open line systems are now integrating optical components into single line cards to boost density for neocloud and enterprise AI. What are the integration challenges when combining multiple fiber rails, and how does this streamlined architecture directly address the power constraints of modern data centers?

The main challenge in combining multiple fiber rails onto a single line card is maintaining signal integrity while significantly increasing the density of optical components in a very tight physical space. By consolidating these elements into a streamlined architecture, like the Open Transport 3000 Series, we can remove redundant hardware and reduce the overall power footprint of the interconnect system. This approach is particularly effective for neocloud and enterprise AI applications because it allows for high-density scaling without a linear increase in power consumption or rack space. Essentially, it simplifies the optical backbone, making it easier to manage the massive scale-out domains required for modern AI workloads while staying within the strict power budgets of current data center facilities.

What is your forecast for optical connectivity in AI data centers?

I forecast that by 2030, the AI optics market will become the dominant force in data center networking, surpassing $20 billion in annual value as the “optical imperative” takes hold across all levels of the stack. We will see a decisive shift where 1.6T and 3.2T modules become the standard for backend fabrics, and the transition to 400G-per-lane technology will be the primary driver for achieving 204.8T switching capacities. While the debate between co-packaged and pluggable optics will continue, the sheer volume of data will make optical connectivity a non-negotiable requirement for any organization looking to scale AI. Ultimately, the success of the next generation of computing will depend less on the chips themselves and more on our ability to build an energy-efficient, high-speed optical web that can bind them together into a single, massive intelligence engine.